Review: The Stars, Like Dust, by Isaac Asimov

| Series: | Galactic Empire #2 |
| Publisher: | Fawcett Crest |
| Copyright: | 1950, 1951 |
| Printing: | June 1972 |
| Format: | Mass market |
| Pages: | 192 |

The Stars, Like Dust is usually listed as the first book in
Asimov's lesser-known Galactic Empire Trilogy since it takes place before
Pebble in the Sky. Pebble in the Sky was published first, though, so I
count this one as the second book. It is very early science fiction with
a few mystery overtones.

Buying books produces about 5% of the pleasure of reading them while

taking much less than 5% of the time. There was a time in my life when I

thoroughly enjoyed methodically working through a used book store, list in

hand, tracking down cheap copies to fill in holes in series. This means

that I own a lot of books that I thought at some point that I would want

to read but never got around to, often because, at the time, I was feeling

completionist about some series or piece of world-building. From time to

time, I get the urge to try to read some of them.

Sometimes this is a poor use of my time.

The Galactic Empire series is from Asimov's first science fiction period,
after the Foundation stories but contemporaneous with their collection
into novels. The books are set long, long before Foundation, but after
humans have inhabited numerous star systems and Earth has become
something of a backwater. That process is just starting in The Stars,
Like Dust: Earth is still somewhere where an upper-class son might
be sent for an education, but it has been devastated by nuclear wars and
is well on its way to becoming an inward-looking relic on the edge of
galactic society.

Biron Farrill is the son of the Lord Rancher of Widemos, a wealthy noble
whose world is one of those conquered by the Tyranni. In many other SF
novels, the Tyranni would be an alien race; here, it's a hierarchical and
authoritarian human civilization. The book opens with Biron discovering a
radiation bomb planted in his dorm room. Shortly after, he learns that
his father has been arrested. One of his fellow students claims to be on
Biron's side against the Tyranni and gives him false papers to travel to
Rhodia, a wealthy world run by a Tyranni sycophant.

Like most books of this era, The Stars, Like Dust is a short novel

full of plot twists. Unlike some of its contemporaries, it's not devoid

of characterization, but I might have liked it better if it were. Biron

behaves like an obnoxious teenager when he's not being an arrogant ass.

There is a female character who does a few plot-relevant things and at no

point is sexually assaulted, so I'll give Asimov that much, but the gender

stereotypes are ironclad and there is an entire subplot focused on what I

can only describe as seduction via petty jealousy.

The writing... well, let me quote a typical passage:

There was no way of telling when the threshold would be reached.

Perhaps not for hours, and perhaps the next moment. Biron remained

standing helplessly, flashlight held loosely in his damp hands. Half

an hour before, the visiphone had awakened him, and he had been at

peace then. Now he knew he was going to die.

Biron didn't want to die, but he was penned in hopelessly, and there

was no place to hide.

Needless to say, Biron doesn't die. Even if your tolerance for pulp

melodrama is high, 192 small-print pages of this sort of thing is

wearying.

Like a lot of Asimov plots, The Stars, Like Dust has some of the

shape of a mystery novel. Biron, with the aid of some newfound companions

on Rhodia, learns of a secret rebellion against the Tyranni and attempts

to track down its base to join them. There are false leads, disguised

identities, clues that are difficult to interpret, and similar classic

mystery trappings, all covered with a patina of early 1950s imaginary

science. To me, it felt constructed and artificial in ways that made the

strings Asimov was pulling obvious. I don't know if someone who likes

mystery construction would feel differently about it.

The worst part of the plot thankfully doesn't come up much. We learn

early in the story that Biron was on Earth to search for a long-lost

document believed to be vital to defeating the Tyranni. The nature of

that document is revealed on the final page, so I won't spoil it, but if

you try to think of the stupidest possible document someone could have

built this plot around, I suspect you will only need one guess. (In

Asimov's defense, he blamed Galaxy editor H.L. Gold for persuading

him to include this plot, and disavowed it a few years later.)

The Stars, Like Dust is one of the worst books I have ever read.

The characters are overwrought, the politics are slapdash and build on

broad stereotypes, the romantic subplot is dire and plays out mainly via

Biron egregiously manipulating his petulant love interest, and the

writing is annoying. Sometimes pulp fiction makes up for those common

flaws through larger-than-life feats of daring, sweeping visions of future

societies, and ever-escalating stakes. There is little to none of that

here. Asimov instead provides tedious political maneuvering among a class

of elitist bankers and land owners who consider themselves natural

leaders. The only places where the power structures of this future

government make sense are where Asimov blatantly steals them from either

the Roman Empire or the Doge of Venice.

The one thing this book has going for it (the thing, apart from

bloody-minded completionism, that kept me reading) is that the technology

is hilariously weird in that way that only 1940s and 1950s science fiction

can be. The characters have access to communication via some sort of

interstellar telepathy (messages coded to a specific person's "brain

waves") and can travel between stars through hyperspace jumps, but each

jump is manually calculated by referring to the pilot's (paper!) volumes of

the Standard Galactic Ephemeris. Communication between ships (via

"etheric radio") requires manually aiming a radio beam at the area in

space where one thinks the other ship is. It's an unintentionally

entertaining combination of technology that now looks absurdly primitive

and science that is so advanced and hand-waved that it's obviously made

up.

I also have to give Asimov some points for using spherical coordinates.

It's a small thing, but the coordinate systems in most SF novels and TV

shows are obviously not fit for purpose.

I spent about a month and a half of this year barely reading, and while

some of that is because I finally tackled a few projects I'd been putting

off for years, a lot of it was because of this book. It was only 192

pages, and I'm still curious about the glue between Asimov's Foundation

and Robot series, both of which I devoured as a

teenager. But every time I picked it up to finally finish it and start

another book, I made it about ten pages and then couldn't take any more.

Learn from my error: don't try this at home, or at least give up if the

same thing starts happening to you.

Followed by The Currents of Space.

Rating: 2 out of 10

Now that we are back from our six-month period in Argentina, we

decided to adopt a kitten, to bring more diversity into our

lives. Perhaps this little girl will teach us to think outside the

box!


Our retiring room at the Old Delhi Railway Station.


Security outside the Taj Mahal complex.


This red-colored building is the entrance to where you can see the Taj Mahal.


Taj Mahal.


Shoe covers for going inside the mausoleum.


Taj Mahal from a side angle.


My brain is currently suffering from an overload caused by grading student

assignments.

In search of a somewhat productive way to procrastinate, I thought I

would share a small script I wrote sometime in 2023 to facilitate my grading

work.

I use Moodle for all the classes I teach and students use it to hand in

their papers. When I'm ready to grade them, I download the ZIP archive Moodle

provides containing all their PDF files and comment them

My brain is currently suffering from an overload caused by grading student

assignments.

In search of a somewhat productive way to procrastinate, I thought I

would share a small script I wrote sometime in 2023 to facilitate my grading

work.

I use Moodle for all the classes I teach and students use it to hand me out

their papers. When I'm ready to grade them, I download the ZIP archive Moodle

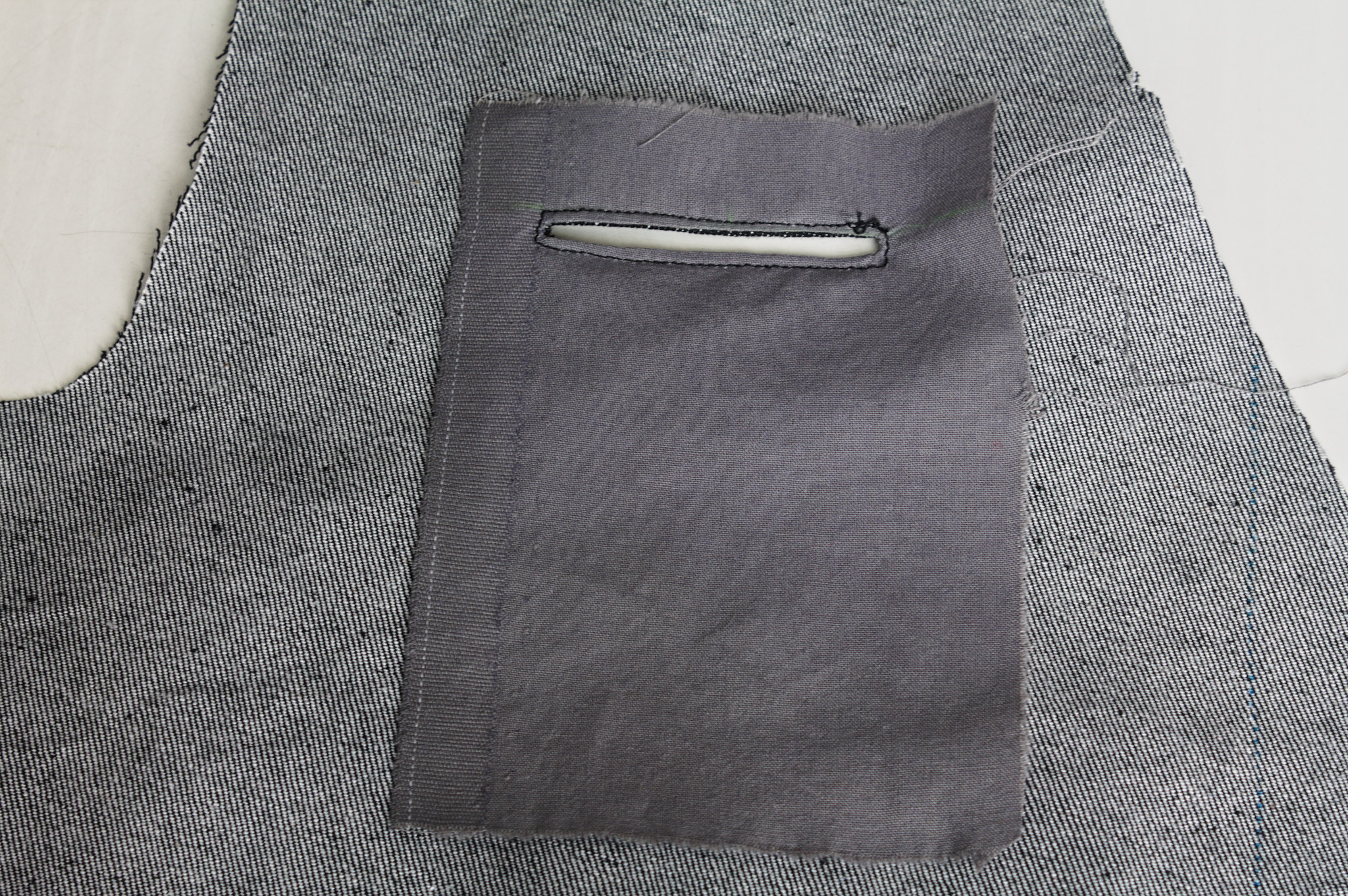

provides containing all their PDF files and comment them  I had finished sewing my jeans, I had a scant 50 cm of elastic denim

left.

Unrelated to that, I had just finished drafting a vest with Valentina,

after

I had finished sewing my jeans, I had a scant 50 cm of elastic denim

left.

Unrelated to that, I had just finished drafting a vest with Valentina,

after

The other thing that wasn't exactly as expected is the back: the pattern
splits the bottom part of the back to give it "sufficient spring over
the hips". The book was probably published in 1892, but I had already
found when drafting the foundation skirt that its idea of hips
includes a bit of structure. The "enough steel to carry a book or a cup
of tea" kind of structure. I should have expected a lot of spring, and
indeed that's what I got.

To fit the bottom part of the back on the limited amount of fabric I had
to piece it, and I suspect that the flat felled seam in the center is
helping it stick out; I don't think it's exactly bad, but it is
a peculiar look.

Also, I had to cut the back on the fold, rather than having a seam in
the middle and the grain on a different angle.

Anyway, my next waistcoat project is going to have a linen-cotton lining
and silk fashion fabric, and I'd say that the pattern is good enough
that I can do a few small fixes and cut it directly in the lining, using
it as a second mockup.

As for the wrinkles, there are quite a few, but it looks like something
that will be solved by a bit of lightweight boning in the side seams and
in the front; it will be seen in the second mockup and the finished
waistcoat.

As for this one, it's definitely going to get some wear as is, in casual
contexts. Except. Well, it's a denim waistcoat, right? With a very
different cut from the "get a denim jacket and rip out the sleeves", but
still a denim waistcoat, right? The kind that you cover in patches,
right?


Mee Goreng, a dish made of noodles in Malaysia.


Me at Petronas Towers.


Photo with Malaysians.


For all interested people who are reasonably close to central Argentina, or can
be persuaded to come here in a month's time: you are all welcome!

It seems I managed to convince my good friend Martín Bayo (some Debian people
will remember him, as he was present at DebConf19 in Curitiba, Brazil) to get
some facilities for us to have a nice Debian get-together in Central Argentina.


CW for body size change mentions

I needed a corset, badly.

Years ago I had a chance to have my measurements taken by a former
professional corset maker and then a lesson in how to draft an underbust
corset, and that led to me learning how nice wearing a well-fitted
corset feels.

Later I tried to extend that pattern up for a midbust corset, with
success.

And then my body changed suddenly, and I was no longer able to wear
either of those, and after a while I started missing them.

Since my body was still changing (if no longer drastically so), and I
didn't want to use expensive materials for something that had a risk of
not fitting after too little time, I decided to start by making myself a
summer lightweight corset in aida cloth and plastic boning (for which I
had already bought materials). It fitted, but not as well as the first
two, and I've worn it quite a bit.

I still wanted back the feeling of wearing a comfy, heavy contraption of
coutil and steel, however.

After a lot of procrastination I redrafted a new pattern, scrapped
everything, tried again, had my measurements taken by a dressmaker,
put them in the draft, cut a first mock-up in cheap cotton, fixed the
position of a seam, did a second mock-up in denim from an old pair of
jeans, and then cut into the cheap herringbone coutil I was planning to
use.

And that's when I went to see which one of the busks in my stash would
work, and realized that I had used a wrong vertical measurement and the
front of the corset was way too long for a midbust corset.


Luckily I also had a few longer busks: I basted one to the denim mock-up

and tried to wear it for a few hours, to see if it was too long to be

comfortable. It was just a bit, on the bottom, which could be easily

fixed with the Power Tools


I could have been a bit more precise with the binding, as it doesn't
align precisely at the front edge, but then again, it's underwear,
nobody other than me and everybody who reads this post is going to see
it and I was in a hurry to see it finished. I will be more careful with
the next one.

I also think that I haven't been careful enough when pressing the seams
and applying the tape, and I've lost about a cm of width per part, so
I'm using a lacing gap that is a bit wider than I planned for, but that
may change as the corset gets worn, and is still within tolerance.

Also, on the morning after I had finished the corset I woke up and
realized that I had forgotten to add garter tabs at the bottom edge.
I don't know whether I will ever use them, but I wanted the option, so
maybe I'll try to add them later on, especially if I can do it without
undoing the binding.

The next step would have been flossing, which I proceeded to postpone
until I've worn the corset for a while: not because there is any reason
for it, but because I still don't know how I want to do it :)

What was left was finishing and uploading the


This post describes how I'm using


Over roughly the last year and a half I have been participating as a reviewer in

ACM's


Now, I think it's safe to assume this program is dead and buried, and

anyways I'm running