Review: Babel, by R.F. Kuang

| Publisher: | Harper Voyage |
|---|---|
| Copyright: | August 2022 |
| ISBN: | 0-06-302144-7 |
| Format: | Kindle |
| Pages: | 544 |

Babel, or the Necessity of Violence: An Arcane History of the Oxford Translators' Revolution, to give it its full title, is a standalone dark academia fantasy set in the 1830s and 1840s, primarily in Oxford, England. The first book of R.F. Kuang's previous trilogy, The Poppy War, was nominated for multiple awards and won the Compton Crook Award for best first novel. Babel is her fourth book.

Robin Swift, although that was not his name at the time, was born and raised in Canton and educated by an inexplicable English tutor his family could not have afforded. After his entire family dies of cholera, he is plucked from China by a British professor and offered a life in England as his ward. What follows is a paradise of books, a hell of relentless and demanding instruction, and an unpredictably abusive emotional environment, all aiming him towards admission to Oxford University. Robin will join University College and the Royal Institute of Translation.

The politics of this imperial Britain are almost precisely the same as in our history, but one of the engines is profoundly different. This world has magic. If words from two different languages are engraved on a metal bar (silver is best), the meaning and nuance lost in translation becomes magical power. With a careful choice of translation pairs, and sometimes additional help from other related words and techniques, the silver bar becomes a persistent spell. Britain's industrial revolution is in overdrive thanks to the country's vast stores of silver and the applied translation prowess of Babel.

This means Babel is also the only part of very racist Oxford that accepts non-white students and women. They need translators (barely) more than they care about maintaining social hierarchy; translation pairs only work when the translator is fluent in both languages. The magic is also stronger when meanings are more distinct, which is creating serious worries about classical and European languages. Those are still the bulk of Babel's work, but increased trade and communication within Europe are eroding the meaning distinctions and thus the amount of magical power. More remote languages, such as Chinese and Urdu, are full of untapped promise that Britain's colonial empire wants to capture. Professor Lovell, Robin's dubious benefactor, is a specialist in Chinese languages; Robin is a potential tool for his plans.

As Robin discovers shortly after arriving in Oxford, he is not the first of Lovell's tools. His predecessor turned against Babel and is trying to break its chokehold on translation magic. He wants Robin to help.

This is one of those books that is hard to review because it does some things exceptionally well and other things that did not work for me. It's not obvious if the latter are flaws in the book or a mismatch between book and reader (or, frankly, flaws in the reader). I'll try to explain as best I can so that you can draw your own conclusions.

First, this is one of the all-time great magical system hooks. The way words are tapped for power is fully fleshed out and exceptionally well-done. Kuang is a professional translator, which shows in the attention to detail on translation pairs. I think this is the best-constructed and explained word-based magic system I've read in fantasy. Many word-based systems treat magic as its own separate language that is weirdly universal. Here, Kuang does the exact opposite, and the result is immensely satisfying.

A fantasy reader may expect exploration of this magic system to be the primary point of the book; however, this is not the case. It is an important part of the book, and its implications are essential to the plot resolution, but this is not the type of fantasy novel where the plot is driven by character exploration of the magic system. The magic system exists, the characters use it, and we do get some crunchy details, but the heart of the book is elsewhere. If you were expecting the typical relationship of a fantasy novel to its magic system, you may get a bit wrong-footed.

Similarly, this is historical fantasy, but it is the type of historical fantasy where the existence of magic causes no significant differences. For some people, this is a pet peeve; personally, I don't mind that choice in the abstract, but some of the specifics bugged me.

The villains of this book assert that any country could have done what Britain did in developing translation magic, and thus their hoarding of it is not immoral. They are obviously partly lying (this is a classic justification for imperialism), but it's not clear from the book how they are lying. Technologies (and magic here works like a technology) tend to concentrate power when they require significant capital investment, and tend to dilute power when they are portable and easy to teach. Translation magic feels like the latter, but its effect in the book is clearly the former, and I was never sure why.

England is not an obvious choice to be a translation superpower. Yes, it's a colonial empire, but India, southeast Asia, and most certainly Africa (the continent largely not appearing in this book) are home to considerably more languages from more wildly disparate families than western Europe. Translation is not a peculiarly European idea, and this magic system does not seem hard to stumble across. It's not clear why England, and Oxford in particular, is so dramatically far ahead. There is some sign that Babel is keeping the mechanics of translation magic secret, but that secret has leaked, seems easy to develop independently, and is simple enough that a new student can perform basic magic with a few hours of instruction. This does not feel like the kind of power that would be easy to concentrate, let alone to the extreme extent required by the last quarter of this book.

The demand for silver as a base material for translation magic provides a justification for mercantilism that avoids the confusing complexities of currency economics in our actual history, so fine, I guess, but it was a bit disappointing for this great of an idea for a magic system to have this small of an impact on politics.

I'll come to the actual thrust of this book in a moment, but first, something else Babel does exceptionally well: dark academia.

The remainder of Robin's cohort at Oxford is Ramy, a dark-skinned Muslim from Calcutta; Victoire, a Haitian woman raised in France; and Letty, the daughter of a British admiral. All of them are non-white except Letty, and Letty and Victoire additionally have to deal with the blatant sexism of the time. (For example, they have to live several miles from Oxford because women living near the college would be a "distraction.")

The interpersonal dynamics between the four are exceptionally well done. Kuang captures the dislocation of going away to college, the unsettled life upheaval that makes it both easy and vital to form suddenly tight friendships, and the way that the immense pressure from classes and exams leaves one so devoid of spare emotional capacity that those friendships become both unbreakable and badly strained. Robin and Ramy almost immediately become inseparable in that type of college friendship in which profound trust and constant companionship happen first and learning about the other person happens afterwards.

It's tricky to talk about this without spoilers, but one of the things Kuang sets up with this friend group is a pointed look at intersectionality.

Babel has gotten a lot of positive review buzz, and I think this is one of the reasons why. Kuang does not pass over or make excuses for characters in a place where many other books do. This mostly worked for me, but with a substantial caveat that I think you may want to be aware of before you dive into this book.

Babel is set in the 1830s, but it is very much about the politics of 2022. That does not necessarily mean that the politics are off for the 1830s; I haven't done the research to know, and it's possible I'm seeing the Tiffany problem (Jo Walton's observation that Tiffany is a historical 12th-century woman's name, but an author can't use it as a medieval name because readers think it sounds too modern). But I found it hard to shake the feeling that the characters make sense of their world using modern analytical frameworks of imperialism, racism, sexism, and intersectional feminism, although without using modern terminology, and that characters from the 1830s would react somewhat differently. This is a valid authorial choice; all books are written for the readers of the time when they're published. But as with magical systems that don't change history, it's a pet peeve for some readers. If that's you, be aware that's the feel I got from it.

The true center of this book is not the magic system or the history. It's advertised directly in the title (the necessity of violence), although it's not until well into the book that the reader knows what that means. This is a book about revolution, what revolution means, what decisions you have to make along the way, how the personal affects the political, and the inadequacy of reform politics. It is hard, uncomfortable, and not gentle on its characters.

The last quarter of this book was exceptional, and I understand why it's getting so much attention. Kuang directly confronts the desire for someone else to do the necessary work, the hope that surely the people with power will see reason, and the feeling of despair when there are no good plans and every reason to wait and do nothing when atrocities are about to happen. If you are familiar with radical politics, these aren't new questions, but this is not the sort of thing that normally shows up in fantasy. It does not surprise me that Babel struck a nerve with readers a generation or two younger than me. It captures that heady feeling on the cusp of adulthood when everything is in flux and one is assembling an independent politics for the first time. Once I neared the end of the book, I could not put it down. The ending is brutal, but I think it was the right ending for this book.

There are two things, though, that I did not like about the political arc.

The first is that Victoire is a much more interesting character than Robin, but is sidelined for most of the book. The difference of perspectives between her and Robin is the heart of what makes the end of this book so good, and I wish that had started 300 pages earlier. Or, even better, I wish Victoire had been the protagonist; I liked Robin, but he's a very predictable character for most of the book. Victoire is not; even the conflicts she had earlier in the book, when she didn't get much attention in the story, felt more dynamic and more thoughtful than Robin's mix of guilt and anxiety.

The second is that I wish Kuang had shown more of Robin's intellectual evolution. All of the pieces of why he makes the decisions that he does are present in this book, and Kuang shows his emotional state (sometimes in agonizing detail) at each step, but the sense-making, the development of theory and ideology beneath the actions, is hinted at but not shown. This is a stylistic choice with no one right answer, but it felt odd because so much of the rest of the plot is obvious and telegraphed. If the reader shares Robin's perspective, I think it's easy to fill in the gaps, but it felt odd to read Robin giving clearly thought-out political analyses at the end of the book without seeing the hashing-out and argument with friends required to develop those analyses. I felt like I had to do a lot of heavy lifting as the reader, work that I wish had been done directly by the book.

My final note about this book is that I found much of it extremely predictable. I think that's part of why reviewers describe it as accessible and easy to read; accessibility and predictability can be two sides of the same coin. Kuang did not intend for this book to be subtle, and I think that's part of the appeal. But very few of Robin's actions for the first three-quarters of the book surprised me, and that's not always the reading experience I want. The end of the book is different, and I therefore found it much more gripping, but it takes a while to get there.

Babel is, for better or worse, the type of fantasy where the politics, economics, and magic system exist primarily to justify the plot the author wanted. I don't think the societal position of the Institute of Translation that makes the ending possible is that believable given the nature of the technology in question and the politics of the time, and if you are inclined to dig into the specifics of the world-building, I think you will find it frustrating. Where it succeeds brilliantly is in capturing the social dynamics of hothouse academic cohorts, and in making a sharp and unfortunately timely argument about the role of violence in political change, in a way that the traditionally conservative setting of fantasy rarely does.

I can't say Babel blew me away, but I can see why others liked it so much. If I had to guess, I'd say that the closer one is in age to the characters in the book and to that moment of political identity construction, the more it's likely to appeal.

Rating: 7 out of 10


QNAP TS-453mini product photo

The logo for QNAP HappyGet 2 and Blizzard's StarCraft 2 side by side

Thermalright AXP120-X67, AMD Ryzen 5 PRO 5650GE, ASRock Rack X570D4I-2T, all assembled and running on a flat surface

Memtest86 showing test progress, taken from IPMI remote control window

Screenshot of PCIe 16x slot bifurcation options in UEFI settings, taken from IPMI remote control window

Internal image of Silverstone CS280 NAS build. Image stolen from

NAS build in Silverstone SUGO 14, mid build, panels removed

Silverstone SUGO 14 from the front, with hot swap bay installed

Storage SSD loaded into hot swap sled

TrueNAS Dashboard screenshot in browser window

The final system, powered up

About a week back Jio launched a

Unlike the Americans who chose the path to have more competition, we have chosen the path to have more monopolies. So even though, I very much liked Louis es


I have had the pleasure of having Poha in many ways. One of my favorite ones is when people actually put tadka on top of the Poha. You do everything else, but in a slightly reversed order: the tadka, with all the spices mixed in and concentrated, is put on top of the Poha and then mixed. Done right, it tastes out of this world. For those who might not have had the Indian culinary experience, most of which is actually borrowed from the Mughals, you are in for a treat.

One of the other things I would suggest is to ask people where they can get five types of rice. This is a specialty of South India and a sort of street food. I know where you can get it in Hyderabad, Bangalore, and Chennai, but not in Kerala, although I am dead sure it is available there and I have just somehow missed it. If asked, I am sure the Kerala team would be able to guide you.

That's all for now. Feeling hungry, so having dinner, as I have been sharing about cooking.


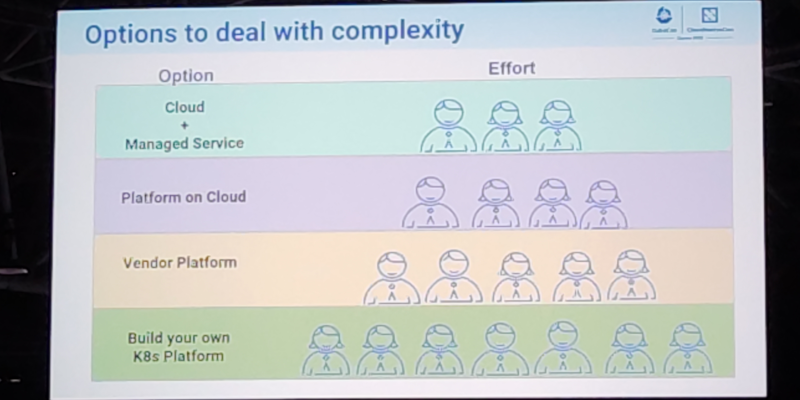

This post serves as a report on my attendance at KubeCon and CloudNativeCon Europe 2023, which took place in Amsterdam in April 2023. It was my second time physically attending this conference; the first was in Austin, Texas (USA) in 2017. I have also attended once virtually.

The content here is written mostly for the sake of my own recollection and learning, and is based on the notes I took during the event.

The very first session was the opening keynote, which brought the whole crowd together to kick off the event and share the excitement about the days ahead. Some astonishing numbers were announced: more than 10,000 people were attending, and apparently it could be said with confidence that it was the largest open source technology conference held in Europe in recent times.

It was also announced that the next couple of iterations of the event will be held in China in September 2023 and in Paris in March 2024.

More numbers: the CNCF was hosting about 159 projects, involving 1,300 maintainers and about 200,000 contributors. The cloud-native community keeps growing, and there seems to be a strong trend in the industry toward cloud-native technology adoption and all things related to PaaS and IaaS.

The event program had different tracks, and each one had an interesting mix of low-level and higher-level talks for a variety of audiences. On many occasions I found that reading the talk title alone was not enough to know in advance whether a talk was a 101 kind of thing or aimed at experienced engineers. But unlike in previous editions, I didn't have the feeling that the purpose of the conference was to try to sell me anything. Obviously, speakers would make sure to mention, or highlight in a subtle way, the involvement of a given company in a given solution or piece of the ecosystem. But it was non-invasive and fair enough for me.

On a different note, I often found the breakout rooms to be small. I think there were only a couple of rooms that could accommodate more than 500 people, which is a fairly small allowance for 10k attendees. I realized with frustration that the more interesting talks were immediately fully booked, with people waiting in line some 45 minutes before the session time. Because of this, I missed a few important sessions that I'll hopefully watch online later.

Finally, on the more technical side, I've learned many things, which I'll group by topic instead of by session, given that some subjects were mentioned in several talks.

On gitops and CI/CD pipelines

Most of the mentions went to


On etcd, performance and resource management

I attended a talk focused on etcd performance tuning that was very encouraging. They were basically talking about the

On jobs

I attended a couple of talks related to HPC/grid-like usages of Kubernetes. I was truly impressed by some folks out there who were using Kubernetes Jobs at massive scale, for example to train machine learning models and other fancy AI projects.

It is acknowledged in the community that the early implementation of things like Jobs and CronJobs had some limitations that are now gone, or at least greatly improved. Some new functionality has been added as well. Indexed Jobs, for example, give each Pod of a Job a number (its completion index), so that each Pod can process a chunk of a larger batch of data based on that index. It would allow for full grid-like features such as sequential (or, again, indexed) processing, coordination between Jobs, and more graceful Job restarts. My first reaction was: Is that something we would like to enable in
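To make the Indexed Jobs idea a bit more concrete, here is a minimal sketch of what a worker container might do, assuming it is written in Python. In an Indexed Job (`completionMode: Indexed`), Kubernetes exposes each Pod's completion index through the `JOB_COMPLETION_INDEX` environment variable. The helper names, the `NUM_COMPLETIONS` variable (which we would have to set ourselves in the Pod template to match `spec.completions`), and the even-split strategy are illustrative assumptions, not something presented in the talks.

```python
import os
from typing import List, Sequence, Tuple


def chunk_bounds(total_items: int, completions: int, index: int) -> Tuple[int, int]:
    """Return the [start, end) slice of the batch owned by one completion index.

    The first (total_items % completions) indexes get one extra item, so every
    item is assigned to exactly one index and no two indexes overlap.
    """
    if not 0 <= index < completions:
        raise ValueError(f"index {index} out of range for {completions} completions")
    base, extra = divmod(total_items, completions)
    start = index * base + min(index, extra)
    end = start + base + (1 if index < extra else 0)
    return start, end


def my_chunk(items: Sequence[str], completions: int, index: int) -> List[str]:
    """Hypothetical helper: the slice of `items` this Pod should process."""
    start, end = chunk_bounds(len(items), completions, index)
    return list(items[start:end])


if __name__ == "__main__":
    # Set by Kubernetes in every Pod of an Indexed Job.
    index = int(os.environ.get("JOB_COMPLETION_INDEX", "0"))
    # Hypothetical: not set by Kubernetes, we pass it in ourselves.
    completions = int(os.environ.get("NUM_COMPLETIONS", "1"))
    batch = [f"record-{i}" for i in range(10)]  # stand-in for a real data set
    for record in my_chunk(batch, completions, index):
        print(f"index {index} processing {record}")
```

Because each index derives its slice purely from its own number, a Pod that is restarted for the same index picks up the same chunk, which is one way the "more graceful restarts" mentioned above could play out.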

In 2022 I read 34 books (-19% on last year).

In 2021 roughly a quarter of the books I read were written by women. I was determined to push that ratio in 2022, so I made an effort to try and only read books by women. I knew that I wouldn't manage that, but by trying to, I did get the ratio up to 58% (by page count).

I'm not sure what will happen in 2023. My to-read pile has some back-pressure from books by male authors I postponed reading in 2022 (in particular new works by Christopher Priest and Adam Roberts). It's possible the ratio will swing back the other way, which would mean it would not be worth repeating the experiment. At least if the ratio is the point of the exercise. But perhaps it isn't: perhaps the useful outcome is more qualitative than quantitative.

I tried to read some new (to me) authors. I really enjoyed Shirley Jackson (The Haunting of Hill House, We Have Always Lived in the Castle). I struggled with Angela Carter's Heroes and Villains, although I plan to return to her other work, in particular The Bloody Chamber. I also got through Donna Tartt's The Secret History on the recommendation of a friend. I had to push through the first 15% or so, but it turned out to be worth it.
