In this blog post, I will cover how

debputy parses its manifest and the

conceptual improvements I did to make parsing of the manifest easier.

All instructions to

debputy are provided via the

debian/debputy.manifest file and

said manifest is written in the YAML format. After the YAML parser has read the

basic file structure,

debputy does another pass over the data to extract the

information from the basic structure. As an example, the following YAML file:

manifest-version: "0.1"

installations:

- install:

source: foo

dest-dir: usr/bin

would be transformed by the YAML parser into a structure resembling:

"manifest-version": "0.1",

"installations": [

"install":

"source": "foo",

"dest-dir": "usr/bin",

]

This structure is then what

debputy does a pass on to translate this into

an even higher level format where the

"install" part is translated into

an

InstallRule.

In the original prototype of

debputy, I would hand-write functions to extract

the data that should be transformed into the internal in-memory high level format.

However, it was quite tedious. Especially because I wanted to catch every possible

error condition and report "You are missing the required field X at Y" rather

than the opaque

KeyError: X message that would have been the default.

Beyond being tedious, it was also quite error prone. As an example, in

debputy/0.1.4 I added support for the

install rule and you should allegedly

have been able to add a

dest-dir: or an

as: inside it. Except I crewed up the

code and

debputy was attempting to look up these keywords from a dict that

could never have them.

Hand-writing these parsers were so annoying that it demotivated me from making

manifest related changes to

debputy simply because I did not want to code

the parsing logic. When I got this realization, I figured I had to solve this

problem better.

While reflecting on this, I also considered that I eventually wanted plugins

to be able to add vocabulary to the manifest. If the API was "provide a

callback to extract the details of whatever the user provided here", then the

result would be bad.

- Most plugins would probably throw KeyError: X or ValueError style

errors for quite a while. Worst case, they would end on my table because

the user would have a hard time telling where debputy ends and where

the plugins starts. "Best" case, I would teach debputy to say "This

poor error message was brought to you by plugin foo. Go complain to

them". Either way, it would be a bad user experience.

- This even assumes plugin providers would actually bother writing manifest

parsing code. If it is that difficult, then just providing a custom file

in debian might tempt plugin providers and that would undermine the idea

of having the manifest be the sole input for debputy.

So beyond me being unsatisfied with the current situation, it was also clear

to me that I needed to come up with a better solution if I wanted externally

provided plugins for

debputy. To put a bit more perspective on what I

expected from the end result:

- It had to cover as many parsing errors as possible. An error case this

code would handle for you, would be an error where I could ensure it

sufficient degree of detail and context for the user.

- It should be type-safe / provide typing support such that IDEs/mypy could

help you when you work on the parsed result.

- It had to support "normalization" of the input, such as

# User provides

- install: "foo"

# Which is normalized into:

- install:

source: "foo"

4) It must be simple to tell debputy what input you expected.

At this point, I remembered that I had seen a Python (PYPI) package where

you could give it a

TypedDict and an arbitrary input (Sadly, I do not

remember the name). The package would then validate the said input against the

TypedDict. If the match was successful, you would get the result back

casted as the

TypedDict. If the match was unsuccessful, the code would

raise an error for you. Conceptually, this seemed to be a good starting

point for where I wanted to be.

Then I looked a bit on the normalization requirement (point 3).

What is really going on here is that you have two "schemas" for the input.

One is what the programmer will see (the normalized form) and the other is

what the user can input (the manifest form). The problem is providing an

automatic normalization from the user input to the simplified programmer

structure. To expand a bit on the following example:

# User provides

- install: "foo"

# Which is normalized into:

- install:

source: "foo"

Given that

install has the attributes

source,

sources,

dest-dir,

as,

into, and

when, how exactly would you automatically normalize

"foo" (str) into

source: "foo"? Even if the code filtered by "type" for these

attributes, you would end up with at least

source,

dest-dir, and

as

as candidates. Turns out that

TypedDict actually got this covered. But

the Python package was not going in this direction, so I parked it here and

started looking into doing my own.

At this point, I had a general idea of what I wanted. When defining an extension

to the manifest, the plugin would provide

debputy with one or two

definitions of

TypedDict. The first one would be the "parsed" or "target" format, which

would be the normalized form that plugin provider wanted to work on. For this

example, lets look at an earlier version of the

install-examples rule:

# Example input matching this typed dict.

#

# "source": ["foo"]

# "into": ["pkg"]

#

class InstallExamplesTargetFormat(TypedDict):

# Which source files to install (dest-dir is fixed)

sources: List[str]

# Which package(s) that should have these files installed.

into: NotRequired[List[str]]

In this form, the

install-examples has two attributes - both are list of

strings. On the flip side, what the user can input would look something like

this:

# Example input matching this typed dict.

#

# "source": "foo"

# "into": "pkg"

#

#

class InstallExamplesManifestFormat(TypedDict):

# Note that sources here is split into source (str) vs. sources (List[str])

sources: NotRequired[List[str]]

source: NotRequired[str]

# We allow the user to write into: foo in addition to into: [foo]

into: Union[str, List[str]]

FullInstallExamplesManifestFormat = Union[

InstallExamplesManifestFormat,

List[str],

str,

]

The idea was that the plugin provider would use these two definitions to tell

debputy how to parse

install-examples. Pseudo-registration code could

look something like:

def _handler(

normalized_form: InstallExamplesTargetFormat,

) -> InstallRule:

... # Do something with the normalized form and return an InstallRule.

concept_debputy_api.add_install_rule(

keyword="install-examples",

target_form=InstallExamplesTargetFormat,

manifest_form=FullInstallExamplesManifestFormat,

handler=_handler,

)

This was my conceptual target and while the current actual API ended up being slightly different,

the core concept remains the same.

From concept to basic implementation

Building this code is kind like swallowing an elephant. There was no way I would just sit down

and write it from one end to the other. So the first prototype of this did not have all the

features it has now.

Spoiler warning, these next couple of sections will contain some Python typing details.

When reading this, it might be helpful to know things such as

Union[str, List[str]] being

the Python type for either a

str (string) or a

List[str] (list of strings). If typing

makes your head spin, these sections might less interesting for you.

To build this required a lot of playing around with Python's introspection and

typing APIs. My very first draft only had one "schema" (the normalized form) and

had the following features:

- Read TypedDict.__required_attributes__ and TypedDict.__optional_attributes__ to

determine which attributes where present and which were required. This was used for

reporting errors when the input did not match.

- Read the types of the provided TypedDict, strip the Required / NotRequired

markers and use basic isinstance checks based on the resulting type for str and

List[str]. Again, used for reporting errors when the input did not match.

This prototype did not take a long (I remember it being within a day) and worked surprisingly

well though with some poor error messages here and there. Now came the first challenge,

adding the manifest format schema plus relevant normalization rules. The very first

normalization I did was transforming

into: Union[str, List[str]] into

into: List[str].

At that time,

source was not a separate attribute. Instead,

sources was a

Union[str, List[str]], so it was the only normalization I needed for all my

use-cases at the time.

There are two problems when writing a normalization. First is determining what the "source"

type is, what the target type is and how they relate. The second is providing a runtime

rule for normalizing from the manifest format into the target format. Keeping it simple,

the runtime normalizer for

Union[str, List[str]] -> List[str] was written as:

def normalize_into_list(x: Union[str, List[str]]) -> List[str]:

return x if isinstance(x, list) else [x]

This basic form basically works for all types (assuming none of the types will have

List[List[...]]).

The logic for determining when this rule is applicable is slightly more involved. My current code

is about 100 lines of Python code that would probably lose most of the casual readers.

For the interested, you are looking for _union_narrowing in declarative_parser.py

With this, when the manifest format had

Union[str, List[str]] and the target format

had

List[str] the generated parser would silently map a string into a list of strings

for the plugin provider.

But with that in place, I had covered the basics of what I needed to get started. I was

quite excited about this milestone of having my first keyword parsed without handwriting

the parser logic (at the expense of writing a more generic parse-generator framework).

Adding the first parse hint

With the basic implementation done, I looked at what to do next. As mentioned, at the time

sources in the manifest format was

Union[str, List[str]] and I considered to split

into a

source: str and a

sources: List[str] on the manifest side while keeping

the normalized form as

sources: List[str]. I ended up committing to this change and

that meant I had to solve the problem getting my parser generator to understand the

situation:

# Map from

class InstallExamplesManifestFormat(TypedDict):

# Note that sources here is split into source (str) vs. sources (List[str])

sources: NotRequired[List[str]]

source: NotRequired[str]

# We allow the user to write into: foo in addition to into: [foo]

into: Union[str, List[str]]

# ... into

class InstallExamplesTargetFormat(TypedDict):

# Which source files to install (dest-dir is fixed)

sources: List[str]

# Which package(s) that should have these files installed.

into: NotRequired[List[str]]

There are two related problems to solve here:

- How will the parser generator understand that source should be normalized

and then mapped into sources?

- Once that is solved, the parser generator has to understand that while source

and sources are declared as NotRequired, they are part of a exactly one of

rule (since sources in the target form is Required). This mainly came down

to extra book keeping and an extra layer of validation once the previous step is solved.

While working on all of this type introspection for Python, I had noted the

Annotated[X, ...]

type. It is basically a fake type that enables you to attach metadata into the type system.

A very random example:

# For all intents and purposes, foo is a string despite all the Annotated stuff.

foo: Annotated[str, "hello world"] = "my string here"

The exciting thing is that you can put arbitrary details into the type field and read it

out again in your introspection code. Which meant, I could add "parse hints" into the type.

Some "quick" prototyping later (a day or so), I got the following to work:

# Map from

#

# "source": "foo" # (or "sources": ["foo"])

# "into": "pkg"

#

class InstallExamplesManifestFormat(TypedDict):

# Note that sources here is split into source (str) vs. sources (List[str])

sources: NotRequired[List[str]]

source: NotRequired[

Annotated[

str,

DebputyParseHint.target_attribute("sources")

]

]

# We allow the user to write into: foo in addition to into: [foo]

into: Union[str, List[str]]

# ... into

#

# "source": ["foo"]

# "into": ["pkg"]

#

class InstallExamplesTargetFormat(TypedDict):

# Which source files to install (dest-dir is fixed)

sources: List[str]

# Which package(s) that should have these files installed.

into: NotRequired[List[str]]

Without me (as a plugin provider) writing a line of code, I can have

debputy rename

or "merge" attributes from the manifest form into the normalized form. Obviously, this

required me (as the

debputy maintainer) to write a lot code so other me and future

plugin providers did not have to write it.

High level typing

At this point, basic normalization between one mapping to another mapping form worked.

But one thing irked me with these install rules. The

into was a list of strings

when the parser handed them over to me. However, I needed to map them to the actual

BinaryPackage (for technical reasons). While I felt I was careful with my manual

mapping, I knew this was exactly the kind of case where a busy programmer would skip

the "is this a known package name" check and some user would typo their package

resulting in an opaque

KeyError: foo.

Side note: "Some user" was me today and I was super glad to see

debputy tell me

that I had typoed a package name (I would have been more happy if I had remembered to

use

debputy check-manifest, so I did not have to wait through the upstream part

of the build that happened before

debhelper passed control to

debputy...)

I thought adding this feature would be simple enough. It basically needs two things:

- Conversion table where the parser generator can tell that BinaryPackage requires

an input of str and a callback to map from str to BinaryPackage.

(That is probably lie. I think the conversion table came later, but honestly I do

remember and I am not digging into the git history for this one)

- At runtime, said callback needed access to the list of known packages, so it could

resolve the provided string.

It was not super difficult given the existing infrastructure, but it did take some hours

of coding and debugging. Additionally, I added a parse hint to support making the

into conditional based on whether it was a single binary package. With this done,

you could now write something like:

# Map from

class InstallExamplesManifestFormat(TypedDict):

# Note that sources here is split into source (str) vs. sources (List[str])

sources: NotRequired[List[str]]

source: NotRequired[

Annotated[

str,

DebputyParseHint.target_attribute("sources")

]

]

# We allow the user to write into: foo in addition to into: [foo]

into: Union[BinaryPackage, List[BinaryPackage]]

# ... into

class InstallExamplesTargetFormat(TypedDict):

# Which source files to install (dest-dir is fixed)

sources: List[str]

# Which package(s) that should have these files installed.

into: NotRequired[

Annotated[

List[BinaryPackage],

DebputyParseHint.required_when_multi_binary()

]

]

Code-wise, I still had to check for

into being absent and providing a default for that

case (that is still true in the current codebase - I will hopefully fix that eventually). But

I now had less room for mistakes and a standardized error message when you misspell the package

name, which was a plus.

The added side-effect - Introspection

A lovely side-effect of all the parsing logic being provided to

debputy in a declarative

form was that the generated parser snippets had fields containing all expected attributes

with their types, which attributes were required, etc. This meant that adding an

introspection feature where you can ask

debputy "What does an

install rule look like?"

was quite easy. The code base already knew all of this, so the "hard" part was resolving the

input the to concrete rule and then rendering it to the user.

I added this feature recently along with the ability to provide online documentation for

parser rules. I covered that in more details in my blog post

Providing online reference documentation for debputy

in case you are interested. :)

Wrapping it up

This was a short insight into how

debputy parses your input. With this declarative

technique:

- The parser engine handles most of the error reporting meaning users get most of the errors

in a standard format without the plugin provider having to spend any effort on it.

There will be some effort in more complex cases. But the common cases are done for you.

- It is easy to provide flexibility to users while avoiding having to write code to normalize

the user input into a simplified programmer oriented format.

- The parser handles mapping from basic types into higher forms for you. These days, we have

high level types like FileSystemMode (either an octal or a symbolic mode), different

kind of file system matches depending on whether globs should be performed, etc. These

types includes their own validation and parsing rules that debputy handles for you.

- Introspection and support for providing online reference documentation. Also, debputy

checks that the provided attribute documentation covers all the attributes in the manifest

form. If you add a new attribute, debputy will remind you if you forget to document

it as well. :)

In this way everybody wins. Yes, writing this parser generator code was more enjoyable than

writing the ad-hoc manual parsers it replaced. :)

The Debian Project Developers will shortly vote for a new Debian Project Leader

known as the DPL.

The Project Leader is the official representative of The Debian Project tasked with

managing the overall project, its vision, direction, and finances.

The DPL is also responsible for the selection of Delegates, defining areas of

responsibility within the project, the coordination of Developers, and making

decisions required for the project.

Our outgoing and present DPL Jonathan Carter served 4 terms, from 2020

through 2024. Jonathan shared his last

The Debian Project Developers will shortly vote for a new Debian Project Leader

known as the DPL.

The Project Leader is the official representative of The Debian Project tasked with

managing the overall project, its vision, direction, and finances.

The DPL is also responsible for the selection of Delegates, defining areas of

responsibility within the project, the coordination of Developers, and making

decisions required for the project.

Our outgoing and present DPL Jonathan Carter served 4 terms, from 2020

through 2024. Jonathan shared his last

I have been working on the rather massive Apparmor bug

I have been working on the rather massive Apparmor bug  In late 2022 I prepared a batch of

In late 2022 I prepared a batch of  I started by looping 5 m of cord, making iirc 2 rounds of a loop about

the right size to go around the book with a bit of ease, then used the

ends as filler cords for a handle, wrapped them around the loop and

worked square knots all over them to make a handle.

Then I cut the rest of the cord into 40 pieces, each 4 m long, because I

had no idea how much I was going to need (spoiler: I successfully got it

wrong :D )

I joined the cords to the handle with lark head knots, 20 per side, and

then I started knotting without a plan or anything, alternating between

hitches and square knots, sometimes close together and sometimes leaving

some free cord between them.

And apparently I also completely forgot to take in-progress pictures.

I kept working on this for a few months, knotting a row or two now and

then, until the bag was long enough for the book, then I closed the

bottom by taking one cord from the front and the corresponding on the

back, knotting them together (I don t remember how) and finally I made a

rigid triangle of tight square knots with all of the cords,

progressively leaving out a cord from each side, and cutting it in a

fringe.

I then measured the remaining cords, and saw that the shortest ones were

about a meter long, but the longest ones were up to 3 meters, I could

have cut them much shorter at the beginning (and maybe added a couple

more cords). The leftovers will be used, in some way.

And then I postponed taking pictures of the finished object for a few

months.

I started by looping 5 m of cord, making iirc 2 rounds of a loop about

the right size to go around the book with a bit of ease, then used the

ends as filler cords for a handle, wrapped them around the loop and

worked square knots all over them to make a handle.

Then I cut the rest of the cord into 40 pieces, each 4 m long, because I

had no idea how much I was going to need (spoiler: I successfully got it

wrong :D )

I joined the cords to the handle with lark head knots, 20 per side, and

then I started knotting without a plan or anything, alternating between

hitches and square knots, sometimes close together and sometimes leaving

some free cord between them.

And apparently I also completely forgot to take in-progress pictures.

I kept working on this for a few months, knotting a row or two now and

then, until the bag was long enough for the book, then I closed the

bottom by taking one cord from the front and the corresponding on the

back, knotting them together (I don t remember how) and finally I made a

rigid triangle of tight square knots with all of the cords,

progressively leaving out a cord from each side, and cutting it in a

fringe.

I then measured the remaining cords, and saw that the shortest ones were

about a meter long, but the longest ones were up to 3 meters, I could

have cut them much shorter at the beginning (and maybe added a couple

more cords). The leftovers will be used, in some way.

And then I postponed taking pictures of the finished object for a few

months.

Now the result is functional, but I have to admit it is somewhat ugly:

not as much for the lack of a pattern (that I think came out quite fine)

but because of how irregular the knots are; I m not confident that the

next time I will be happy with their regularity, either, but I hope I

will improve, and that s one important thing.

And the other important thing is: I enjoyed making this, even if I kept

interrupting the work, and I think that there may be some other macrame

in my future.

Now the result is functional, but I have to admit it is somewhat ugly:

not as much for the lack of a pattern (that I think came out quite fine)

but because of how irregular the knots are; I m not confident that the

next time I will be happy with their regularity, either, but I hope I

will improve, and that s one important thing.

And the other important thing is: I enjoyed making this, even if I kept

interrupting the work, and I think that there may be some other macrame

in my future.

After seven years of service as member and secretary on the GHC Steering Committee, I have resigned from that role. So this is a good time to look back and retrace the formation of the GHC proposal process and committee.

In my memory, I helped define and shape the proposal process, optimizing it for effectiveness and throughput, but memory can be misleading, and judging from the paper trail in my email archives, this was indeed mostly Ben Gamari s and Richard Eisenberg s achievement: Already in Summer of 2016, Ben Gamari set up the

After seven years of service as member and secretary on the GHC Steering Committee, I have resigned from that role. So this is a good time to look back and retrace the formation of the GHC proposal process and committee.

In my memory, I helped define and shape the proposal process, optimizing it for effectiveness and throughput, but memory can be misleading, and judging from the paper trail in my email archives, this was indeed mostly Ben Gamari s and Richard Eisenberg s achievement: Already in Summer of 2016, Ben Gamari set up the  In 2022 I read a post on the fediverse by somebody who mentioned that

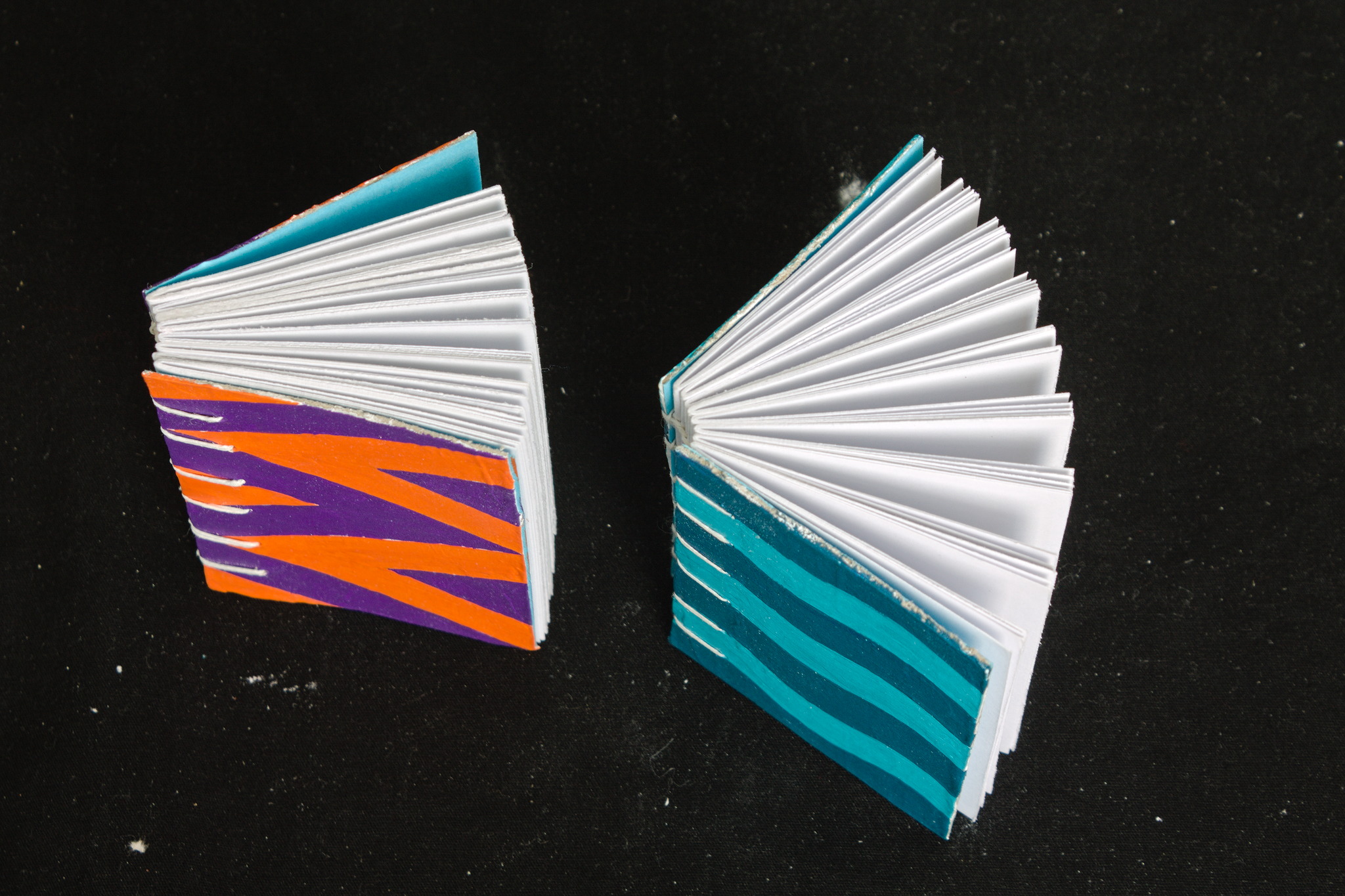

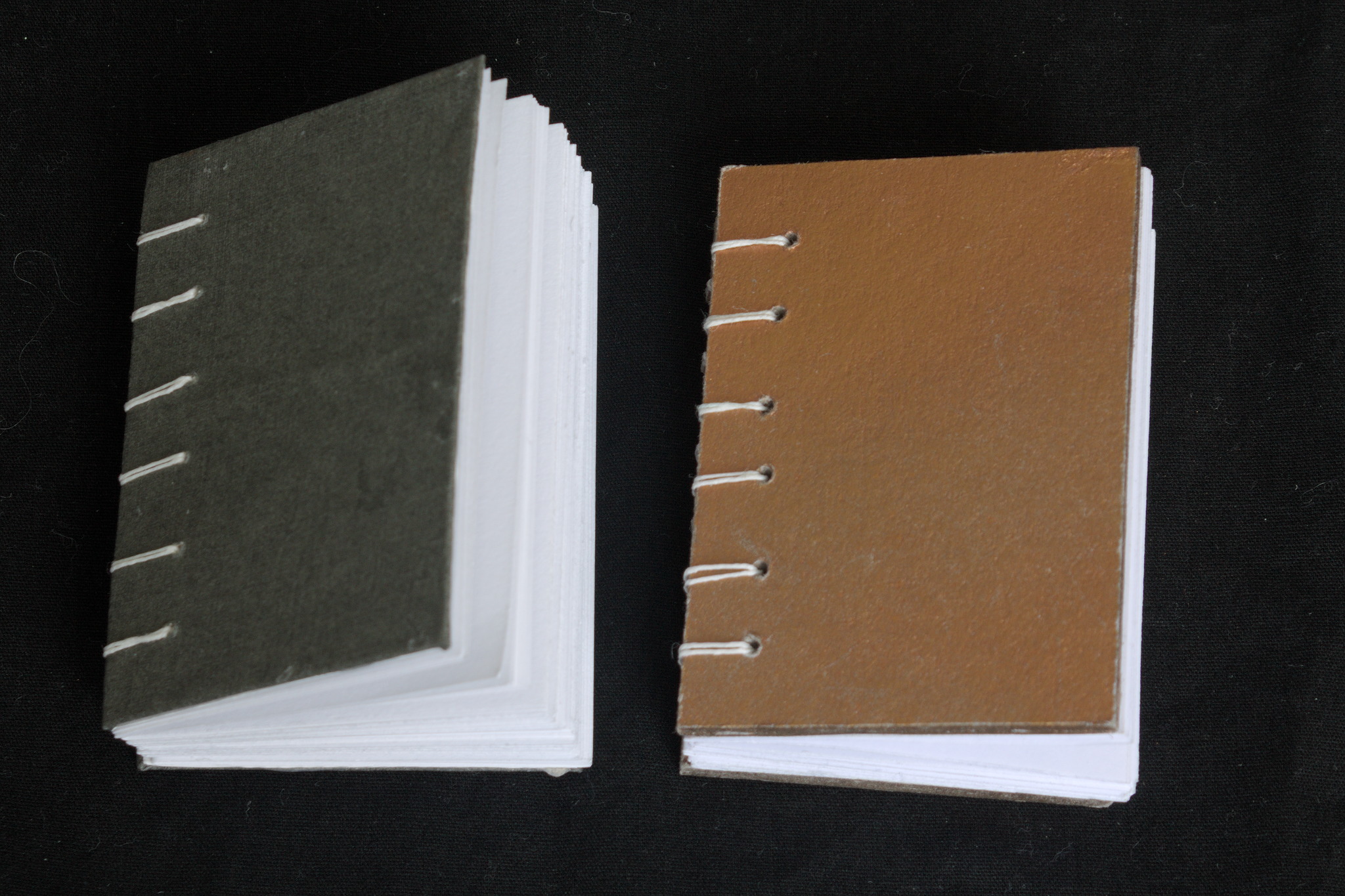

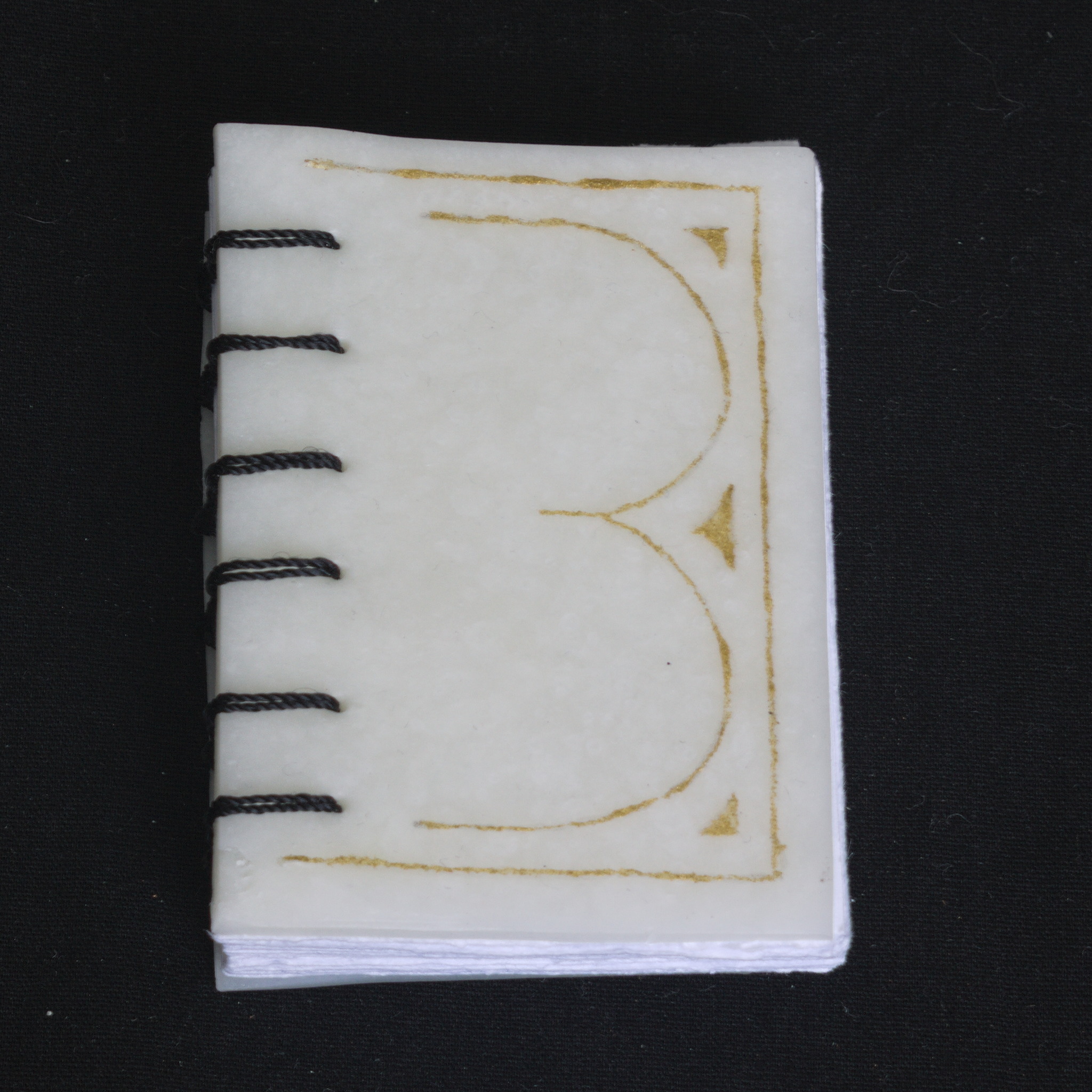

they had bought on a whim a cute tiny book years ago, and that it

had been a companion through hard times. Right now I can t find the

post, but it was pretty aaaaawwww.

In 2022 I read a post on the fediverse by somebody who mentioned that

they had bought on a whim a cute tiny book years ago, and that it

had been a companion through hard times. Right now I can t find the

post, but it was pretty aaaaawwww.

At the same time, I had discovered Coptic binding, and I wanted to do

some exercise to let my hands learn it, but apparently there is a limit

to the number of notebooks and sketchbooks a person needs (I m not 100%

sure I actually believe this, but I ve heard it is a thing).

At the same time, I had discovered Coptic binding, and I wanted to do

some exercise to let my hands learn it, but apparently there is a limit

to the number of notebooks and sketchbooks a person needs (I m not 100%

sure I actually believe this, but I ve heard it is a thing).

So I decided to start making minibooks with the intent to give them

away: I settled (mostly) on the A8 size, and used a combination of found

materials, leftovers from bigger projects and things I had in the Stash.

As for paper, I ve used a variety of the ones I have that are at the

very least good enough for non-problematic fountain pen inks.

So I decided to start making minibooks with the intent to give them

away: I settled (mostly) on the A8 size, and used a combination of found

materials, leftovers from bigger projects and things I had in the Stash.

As for paper, I ve used a variety of the ones I have that are at the

very least good enough for non-problematic fountain pen inks.

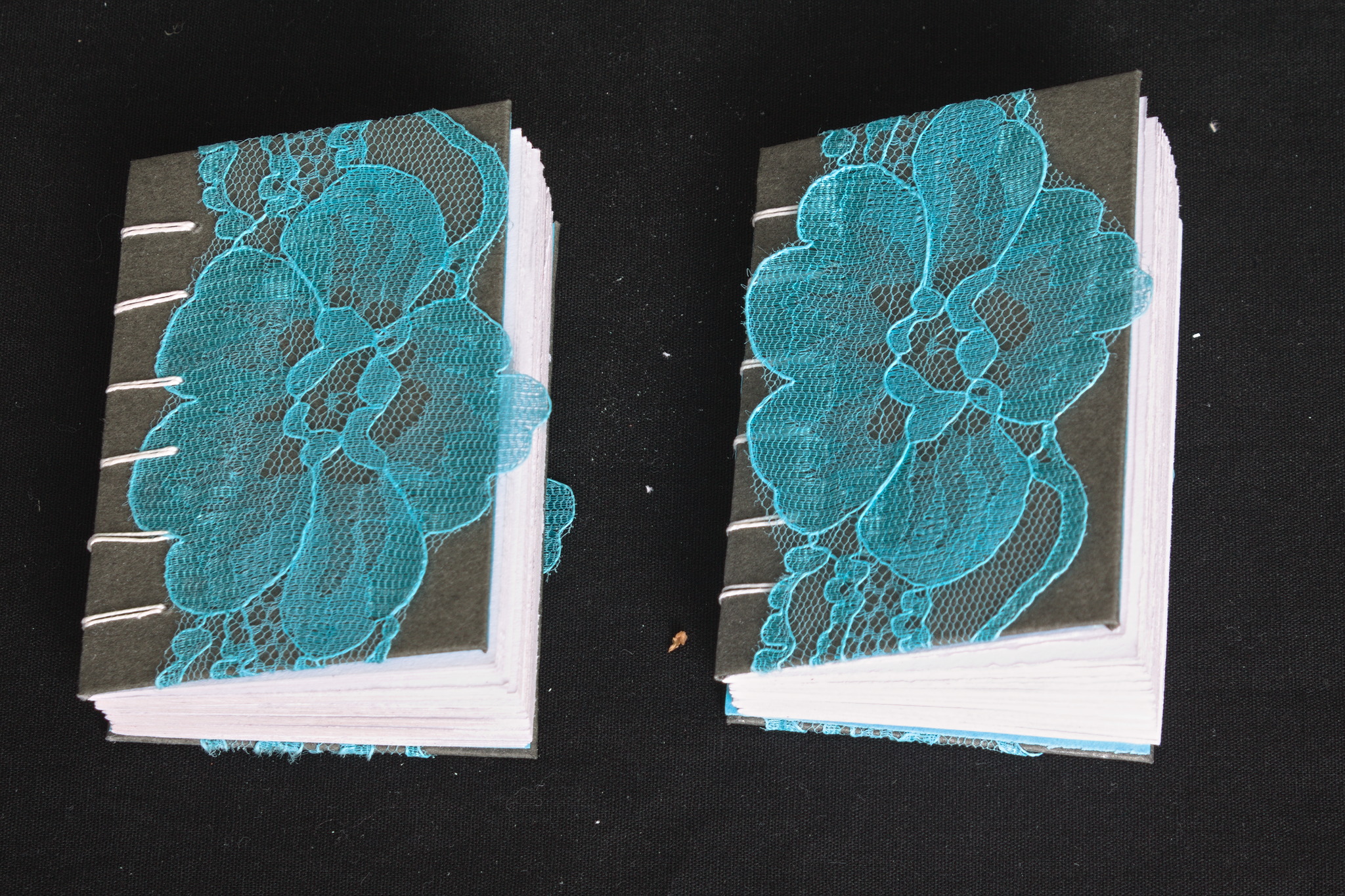

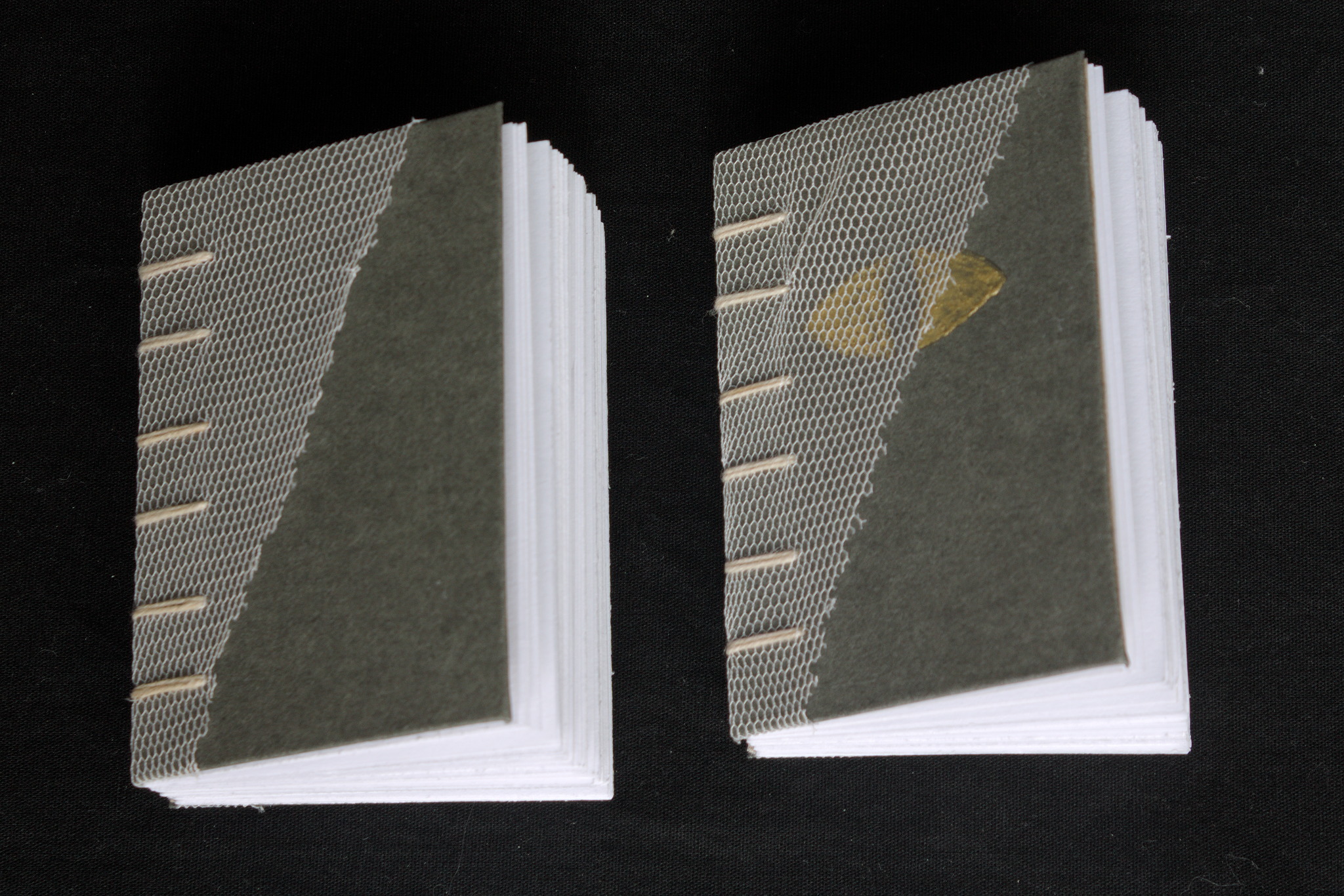

Thanks to the small size, and the way coptic binding works, I ve been

able to play around with the covers, experimenting with different styles

beyond the classic bookbinding cloth / paper covered cardboard,

including adding lace, covering food box cardboard with gesso and

decorating it with acrylic paints, embossing designs by gluing together

two layers of cardboard, one of which has holes, making covers

completely out of cernit, etc. Some of these I will probably also use in

future full-scale projects, but it s nice to find out what works and

what doesn t on a small scale.

Thanks to the small size, and the way coptic binding works, I ve been

able to play around with the covers, experimenting with different styles

beyond the classic bookbinding cloth / paper covered cardboard,

including adding lace, covering food box cardboard with gesso and

decorating it with acrylic paints, embossing designs by gluing together

two layers of cardboard, one of which has holes, making covers

completely out of cernit, etc. Some of these I will probably also use in

future full-scale projects, but it s nice to find out what works and

what doesn t on a small scale.

Now, after a year of sporadically making these I have to say that the

making went quite well: I enjoyed the making and the creativity in

making different covers. The giving away was a bit more problematic, as

I didn t really have a lot of chances to do so, so I believe I still

have most of them. In 2024 I ll try to look for more opportunities (and

if you live nearby and want one or a few feel free to ask!)

Now, after a year of sporadically making these I have to say that the

making went quite well: I enjoyed the making and the creativity in

making different covers. The giving away was a bit more problematic, as

I didn t really have a lot of chances to do so, so I believe I still

have most of them. In 2024 I ll try to look for more opportunities (and

if you live nearby and want one or a few feel free to ask!)

I think while developing Wayland-as-an-ecosystem we are now entrenched into narrow concepts of how a desktop should work. While discussing Wayland protocol additions, a lot of concepts clash, people from different desktops with different design philosophies debate the merits of those over and over again never reaching any conclusion (just as you will never get an answer out of humans whether sushi or pizza is the clearly superior food, or whether CSD or SSD is better). Some people want to use Wayland as a vehicle to force applications to submit to their desktop s design philosophies, others prefer the smallest and leanest protocol possible, other developers want the most elegant behavior possible. To be clear, I think those are all very valid approaches.

But this also creates problems: By switching to Wayland compositors, we are already forcing a lot of porting work onto toolkit developers and application developers. This is annoying, but just work that has to be done. It becomes frustrating though if Wayland provides toolkits with absolutely no way to reach their goal in any reasonable way. For Nate s Photoshop analogy: Of course Linux does not break Photoshop, it is Adobe s responsibility to port it. But what if Linux was missing a crucial syscall that Photoshop needed for proper functionality and Adobe couldn t port it without that? In that case it becomes much less clear on who is to blame for Photoshop not being available.

A lot of Wayland protocol work is focused on the environment and design, while applications and work to port them often is considered less. I think this happens because the overlap between application developers and developers of the desktop environments is not necessarily large, and the overlap with people willing to engage with Wayland upstream is even smaller. The combination of Windows developers porting apps to Linux and having involvement with toolkits or Wayland is pretty much nonexistent. So they have less of a voice.

I think while developing Wayland-as-an-ecosystem we are now entrenched into narrow concepts of how a desktop should work. While discussing Wayland protocol additions, a lot of concepts clash, people from different desktops with different design philosophies debate the merits of those over and over again never reaching any conclusion (just as you will never get an answer out of humans whether sushi or pizza is the clearly superior food, or whether CSD or SSD is better). Some people want to use Wayland as a vehicle to force applications to submit to their desktop s design philosophies, others prefer the smallest and leanest protocol possible, other developers want the most elegant behavior possible. To be clear, I think those are all very valid approaches.

But this also creates problems: By switching to Wayland compositors, we are already forcing a lot of porting work onto toolkit developers and application developers. This is annoying, but just work that has to be done. It becomes frustrating though if Wayland provides toolkits with absolutely no way to reach their goal in any reasonable way. For Nate s Photoshop analogy: Of course Linux does not break Photoshop, it is Adobe s responsibility to port it. But what if Linux was missing a crucial syscall that Photoshop needed for proper functionality and Adobe couldn t port it without that? In that case it becomes much less clear on who is to blame for Photoshop not being available.

A lot of Wayland protocol work is focused on the environment and design, while applications and work to port them often is considered less. I think this happens because the overlap between application developers and developers of the desktop environments is not necessarily large, and the overlap with people willing to engage with Wayland upstream is even smaller. The combination of Windows developers porting apps to Linux and having involvement with toolkits or Wayland is pretty much nonexistent. So they have less of a voice.

I will also bring my two protocol MRs to their conclusion for sure, because as application developers we need clarity on what the platform (either all desktops or even just a few) supports and will or will not support in future. And the only way to get something good done is by contribution and friendly discussion.

I will also bring my two protocol MRs to their conclusion for sure, because as application developers we need clarity on what the platform (either all desktops or even just a few) supports and will or will not support in future. And the only way to get something good done is by contribution and friendly discussion.

This post should have marked the beginning of my yearly roundups of the favourite books and movies I read and watched in 2023.

However, due to coming down with a nasty bout of flu recently and other sundry commitments, I wasn't able to undertake writing the necessary four or five blog posts In lieu of this, however, I will simply present my (unordered and unadorned) highlights for now. Do get in touch if this (or any of my previous posts) have spurred you into picking something up yourself

This post should have marked the beginning of my yearly roundups of the favourite books and movies I read and watched in 2023.

However, due to coming down with a nasty bout of flu recently and other sundry commitments, I wasn't able to undertake writing the necessary four or five blog posts In lieu of this, however, I will simply present my (unordered and unadorned) highlights for now. Do get in touch if this (or any of my previous posts) have spurred you into picking something up yourself

By the influencers on the famous proprietary video platform

By the influencers on the famous proprietary video platform Anyway, my brain suddenly decided that I needed a red wool dress, fitted

enough to give some bust support. I had already made a

Anyway, my brain suddenly decided that I needed a red wool dress, fitted

enough to give some bust support. I had already made a  I knew that I didn t have enough fabric to add a flounce to the hem, as

in the cotton dress, but then I remembered that some time ago I fell for

a piece of fringed trim in black, white and red. I did a quick check

that the red wasn t clashing (it wasn t) and I knew I had a plan for the

hem decoration.

Then I spent a week finishing other projects, and the more I thought

about this dress, the more I was tempted to have spiral lacing at the

front rather than buttons, as a nod to the kirtle inspiration.

It may end up be a bit of a hassle, but if it is too much I can always

add a hidden zipper on a side seam, and only have to undo a bit of the

lacing around the neckhole to wear the dress.

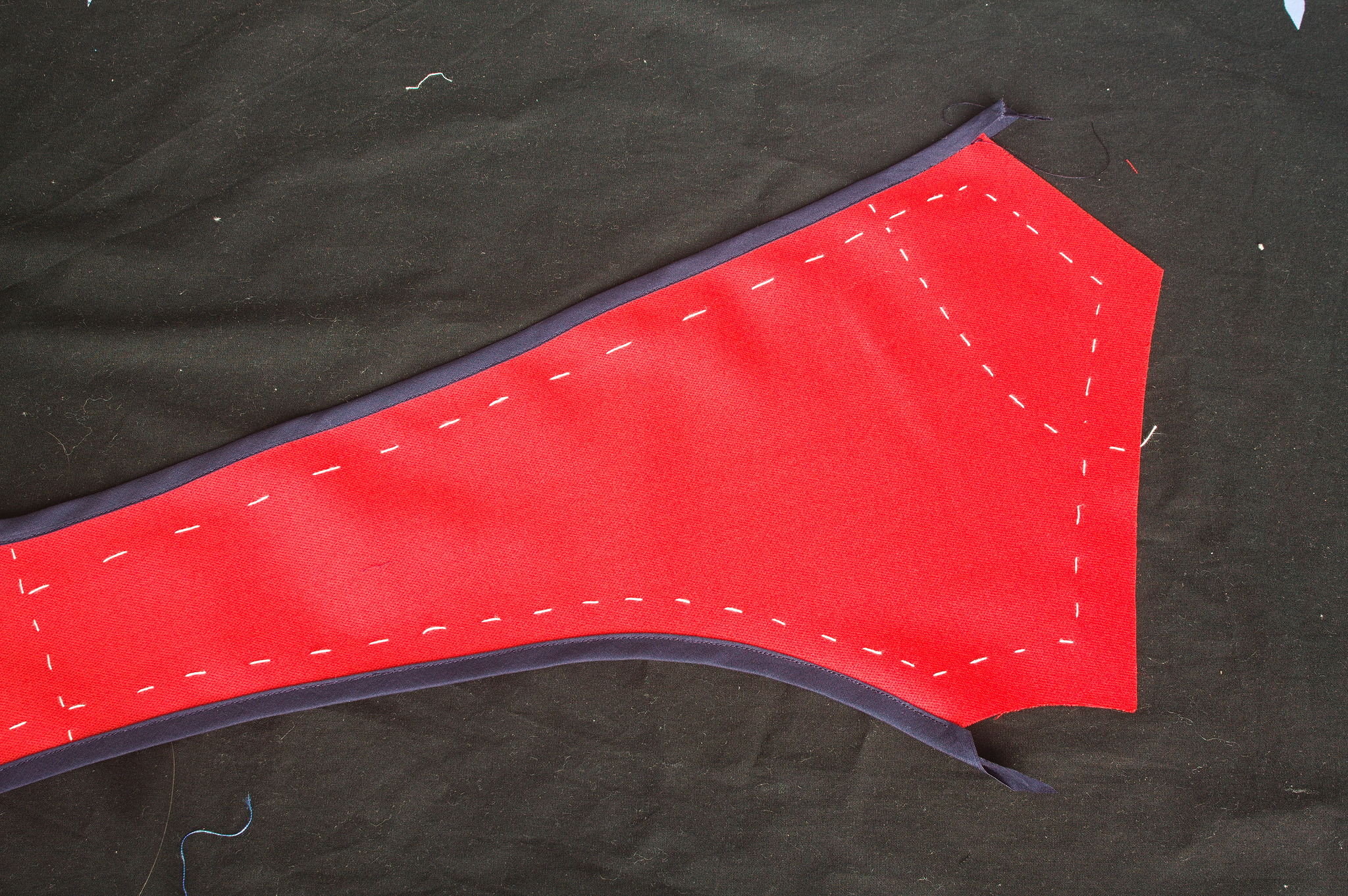

Finally, I could start working on the dress: I cut all of the main

pieces, and since the seam lines were quite curved I marked them with

tailor s tacks, which I don t exactly enjoy doing or removing, but are

the only method that was guaranteed to survive while manipulating this

fabric (and not leave traces afterwards).

I knew that I didn t have enough fabric to add a flounce to the hem, as

in the cotton dress, but then I remembered that some time ago I fell for

a piece of fringed trim in black, white and red. I did a quick check

that the red wasn t clashing (it wasn t) and I knew I had a plan for the

hem decoration.

Then I spent a week finishing other projects, and the more I thought

about this dress, the more I was tempted to have spiral lacing at the

front rather than buttons, as a nod to the kirtle inspiration.

It may end up be a bit of a hassle, but if it is too much I can always

add a hidden zipper on a side seam, and only have to undo a bit of the

lacing around the neckhole to wear the dress.

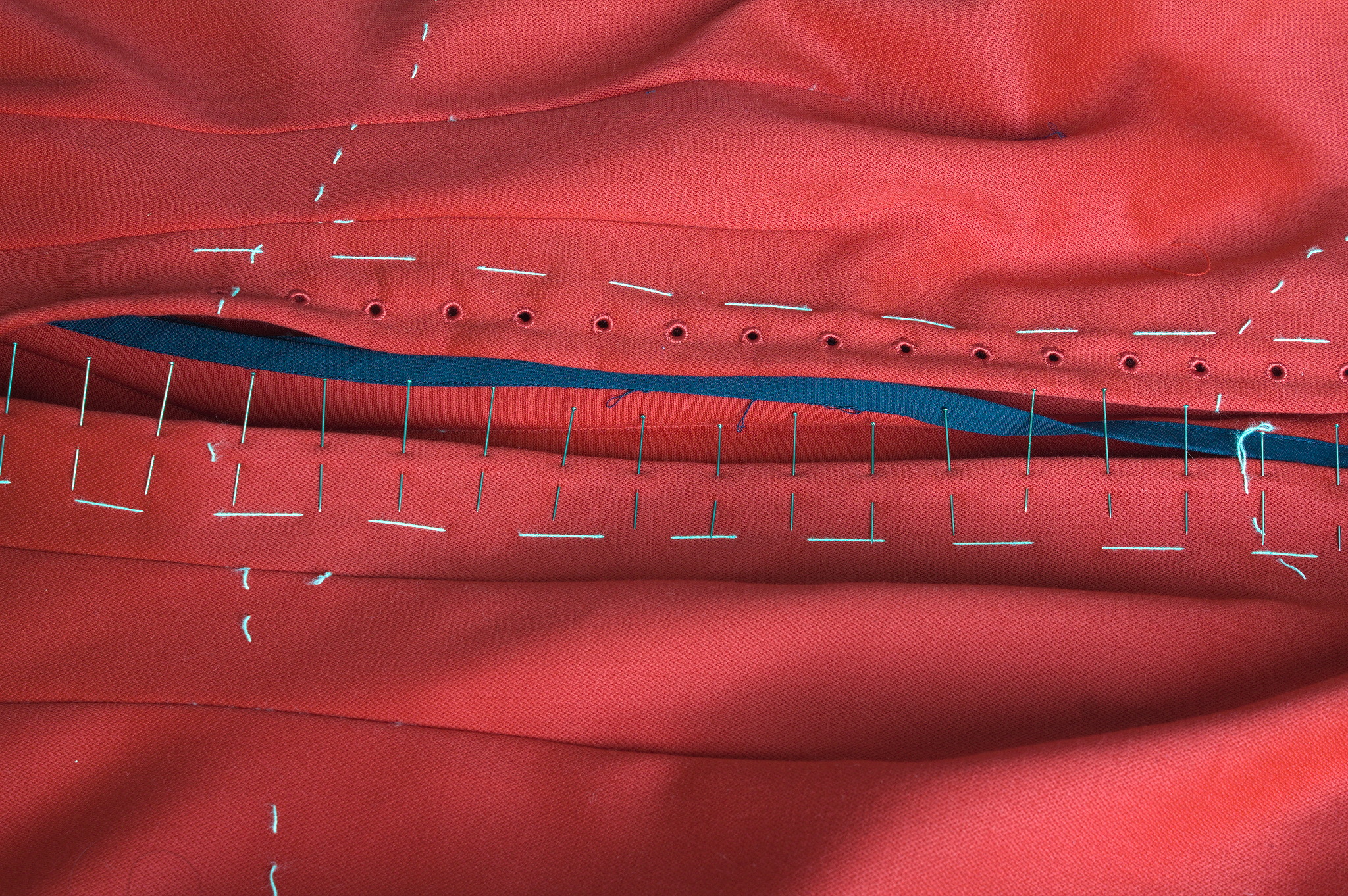

Finally, I could start working on the dress: I cut all of the main

pieces, and since the seam lines were quite curved I marked them with

tailor s tacks, which I don t exactly enjoy doing or removing, but are

the only method that was guaranteed to survive while manipulating this

fabric (and not leave traces afterwards).

While cutting the front pieces I accidentally cut the high neck line

instead of the one I had used on the cotton dress: I decided to go for

it also on the back pieces and decide later whether I wanted to lower

it.

Since this is a modern dress, with no historical accuracy at all, and I

have access to a serger, I decided to use some dark blue cotton voile

I ve had in my stash for quite some time, cut into bias strip, to bind

the raw edges before sewing. This works significantly better than bought

bias tape, which is a bit too stiff for this.

While cutting the front pieces I accidentally cut the high neck line

instead of the one I had used on the cotton dress: I decided to go for

it also on the back pieces and decide later whether I wanted to lower

it.

Since this is a modern dress, with no historical accuracy at all, and I

have access to a serger, I decided to use some dark blue cotton voile

I ve had in my stash for quite some time, cut into bias strip, to bind

the raw edges before sewing. This works significantly better than bought

bias tape, which is a bit too stiff for this.

For the front opening, I ve decided to reinforce the areas where the

lacing holes will be with cotton: I ve used some other navy blue cotton,

also from the stash, and added two lines of cording to stiffen the front

edge.

So I ve cut the front in two pieces rather than on the fold, sewn the

reinforcements to the sewing allowances in such a way that the corded

edge was aligned with the center front and then sewn the bottom of the

front seam from just before the end of the reinforcements to the hem.

For the front opening, I ve decided to reinforce the areas where the

lacing holes will be with cotton: I ve used some other navy blue cotton,

also from the stash, and added two lines of cording to stiffen the front

edge.

So I ve cut the front in two pieces rather than on the fold, sewn the

reinforcements to the sewing allowances in such a way that the corded

edge was aligned with the center front and then sewn the bottom of the

front seam from just before the end of the reinforcements to the hem.

The allowances are then folded back, and then they are kept in place

by the worked lacing holes. The cotton was pinked, while for the wool I

used the selvedge of the fabric and there was no need for any finishing.

Behind the opening I ve added a modesty placket: I ve cut a strip of red

wool, a strip of cotton, folded the edge of the strip of cotton to the

center, added cording to the long sides, pressed the allowances of the

wool towards the wrong side, and then handstitched the cotton to the

wool, wrong sides facing. This was finally handstitched to one side of

the sewing allowance of the center front.

I ve also decided to add real pockets, rather than just slits, and for

some reason I decided to add them by hand after I had sewn the dress, so

I ve left opening in the side back seams, where the slits were in the

cotton dress. I ve also already worn the dress, but haven t added the

pockets yet, as I m still debating about their shape. This will be fixed

in the near future.

Another thing that will have to be fixed is the trim situation: I like

the fringe at the bottom, and I had enough to also make a belt, but this

makes the top of the dress a bit empty. I can t use the same fringe

tape, as it is too wide, but it would be nice to have something smaller

that matches the patterned part. And I think I can make something

suitable with tablet weaving, but I m not sure on which materials to

use, so it will have to be on hold for a while, until I decide on the

supplies and have the time for making it.

Another improvement I d like to add are detached sleeves, both matching

(I should still have just enough fabric) and contrasting, but first I

want to learn more about real kirtle construction, and maybe start

making sleeves that would be suitable also for a real kirtle.

Meanwhile, I ve worn it on Christmas (over my 1700s menswear shirt with

big sleeves) and may wear it again tomorrow (if I bother to dress up to

spend New Year s Eve at home :D )

The allowances are then folded back, and then they are kept in place

by the worked lacing holes. The cotton was pinked, while for the wool I

used the selvedge of the fabric and there was no need for any finishing.

Behind the opening I ve added a modesty placket: I ve cut a strip of red

wool, a strip of cotton, folded the edge of the strip of cotton to the

center, added cording to the long sides, pressed the allowances of the

wool towards the wrong side, and then handstitched the cotton to the

wool, wrong sides facing. This was finally handstitched to one side of

the sewing allowance of the center front.

I ve also decided to add real pockets, rather than just slits, and for

some reason I decided to add them by hand after I had sewn the dress, so

I ve left opening in the side back seams, where the slits were in the

cotton dress. I ve also already worn the dress, but haven t added the

pockets yet, as I m still debating about their shape. This will be fixed

in the near future.

Another thing that will have to be fixed is the trim situation: I like

the fringe at the bottom, and I had enough to also make a belt, but this

makes the top of the dress a bit empty. I can t use the same fringe

tape, as it is too wide, but it would be nice to have something smaller

that matches the patterned part. And I think I can make something

suitable with tablet weaving, but I m not sure on which materials to

use, so it will have to be on hold for a while, until I decide on the

supplies and have the time for making it.

Another improvement I d like to add are detached sleeves, both matching

(I should still have just enough fabric) and contrasting, but first I

want to learn more about real kirtle construction, and maybe start

making sleeves that would be suitable also for a real kirtle.

Meanwhile, I ve worn it on Christmas (over my 1700s menswear shirt with

big sleeves) and may wear it again tomorrow (if I bother to dress up to

spend New Year s Eve at home :D )