Review: Going Infinite, by Michael Lewis

| Publisher: | W.W. Norton & Company |
| Copyright: | 2023 |
| ISBN: | 1-324-07434-5 |
| Format: | Kindle |
| Pages: | 255 |

My first reaction when I heard that Michael Lewis had been embedded with

Sam Bankman-Fried working on a book when Bankman-Fried's cryptocurrency

exchange FTX collapsed into bankruptcy after losing billions of dollars of

customer deposits was "holy shit, why would you talk to Michael Lewis

about your dodgy cryptocurrency company?" Followed immediately by

"I have to read this book."

This is that book.

I wasn't sure how Lewis would approach this topic. His normal (although

not exclusive) area of interest is financial systems and crises, and there

is lots of room for multiple books about cryptocurrency fiascoes using

someone like Bankman-Fried as a pivot. But Going Infinite is not like

The Big Short or Lewis's other financial industry books. It's a nearly

straight biography of Sam

Bankman-Fried, with just enough context for the reader to follow his life.

To understand what you're getting in Going Infinite, I think it's

important to understand what sort of book Lewis likes to write. Lewis is

not exactly a reporter, although he does explain complicated things for a

mass audience. He's primarily a storyteller who collects people he finds

fascinating. This book was therefore never going to be like, say,

Carreyrou's Bad Blood or Isaac's Super Pumped. Lewis's interest is not

in a forensic account of how FTX or Alameda Research were structured. His

interest is in what makes Sam Bankman-Fried tick, what's going on inside

his head.

That's not a question Lewis directly answers, though. Instead, he shows

you Bankman-Fried as Lewis saw him and was able to reconstruct him from

interviews and sources, and lets you draw your own conclusions. Boy did I

ever draw a lot of conclusions, most of which were highly unflattering.

However, one conclusion I didn't draw, and had been dubious about even

before reading this book, was that Sam Bankman-Fried was some sort of

criminal mastermind who intentionally plotted to steal customer money.

Lewis clearly doesn't believe this is the case, and with the caveat that

my study of the evidence outside of this book has been spotty and

intermittent, I think Lewis has the better of the argument.

I am utterly fascinated by this, and I'm afraid this review is going to

turn into a long summary of my take on the argument, so here's the capsule

review before you get bored and wander off: This is a highly entertaining

book written by an excellent storyteller. I am also inclined to believe

most of it is true, but given that I'm not on the jury, I'm not that

invested in whether Lewis is too credulous towards the explanations of the

people involved. What I do know is that it's a fantastic yarn with

characters who are too wild to put in fiction, and I thoroughly enjoyed

it.

There are a few things that everyone involved appears to agree on, and

therefore I think we can take as settled. One is that Bankman-Fried, and

most of the rest of FTX and Alameda Research, never clearly distinguished

between customer money and all of the other money. It's not obvious that

their home-grown accounting software (written entirely by one person! who

never spoke to other people! in code that no one else could understand!)

was even capable of clearly delineating between their piles of money.

Another is that FTX and Alameda Research were thoroughly intermingled.

There was no official reporting structure and possibly not even a coherent

list of employees. The environment was so chaotic that lots of people,

including Bankman-Fried, could have stolen millions of dollars without

anyone noticing. But it was also so chaotic that they could, and did,

literally misplace millions of dollars by accident, or because

Bankman-Fried had problems with object permanence.

Something that was previously less obvious from news coverage but that

comes through very clearly in this book is that Bankman-Fried seriously

struggled with normal interpersonal and societal interactions. We know

from multiple sources that he was diagnosed with ADHD and depression

(Lewis describes it specifically as anhedonia, the inability to feel

pleasure). The ADHD in Lewis's account is quite severe and does not sound

controlled, despite medication; for example, Bankman-Fried routinely

played timed video games while he was having important meetings, forgot

things the moment he stopped dealing with them, was constantly on his

phone or seeking out some other distraction, and often stimmed (by

bouncing his leg) to a degree that other people found distracting.

Perhaps more tellingly, Bankman-Fried repeatedly describes himself in

diary entries and correspondence to other people (particularly Caroline

Ellison, his employee and on-and-off secret girlfriend) as being devoid of

empathy and unable to access his own emotions, which Lewis supports with

stories from former co-workers. I'm very hesitant to diagnose someone via

a book, but, at least in Lewis's account, Bankman-Fried nearly walks down

the symptom list of antisocial personality disorder in his own description

of himself to other people. (The one exception is around physical

violence; there is nothing in this book or in any of the other reporting

that I've seen to indicate that Bankman-Fried was violent or physically

abusive.) One of the recurrent themes of this book is that Bankman-Fried

never saw the point in following rules that didn't make sense to him or

worrying about things he thought weren't important, and therefore simply

didn't.

By about a third of the way into this book, before FTX is even properly

started, very little about its eventual downfall will seem that

surprising. There was no way that Sam Bankman-Fried was going to be able

to run a successful business over time. He was extremely good at

probabilistic trading and spotting exploitable market inefficiencies, and

extremely bad at essentially every other aspect of living in a society

with other people, other than a hit-or-miss ability to charm that worked

much better with large audiences than one-on-one. The real question was

why anyone would ever entrust this man with millions of dollars or decide

to work for him for longer than two weeks.

The answer to those questions changes over the course of this story.

Later on, it was timing. Sam Bankman-Fried took the techniques of high

frequency trading he learned at Jane Street Capital and applied them to

exploiting cryptocurrency markets at precisely the right time in the

cryptocurrency bubble. There was far more money than sense, the most

ruthless financial players were still too leery to get involved, and a

rising tide was lifting all boats, even the ones that were piles of

driftwood. When cryptocurrency collapsed, so did his

businesses. In retrospect, that seems inevitable.

The early answer, though, was effective altruism.

A full discussion of effective altruism is beyond the scope of this

review, although Lewis offers a decent introduction in the book. The

short version is that a sensible and defensible desire to use stronger

standards of evidence in evaluating charitable giving turned into a

bizarre navel-gazing exercise in making up statistical risks to

hypothetical future people and treating those made-up numbers as if they

should be the bedrock of one's personal ethics. One of the people most

responsible for this turn is an Oxford philosopher named Will MacAskill.

Sam Bankman-Fried was already obsessed with utilitarianism, in part due to

his parents' philosophical beliefs, and it was a presentation by Will

MacAskill that converted him to the effective altruism variant of extreme

utilitarianism.

In Lewis's presentation, this was like joining a cult. The impression I

came away with feels like something out of a science fiction novel:

Bankman-Fried knew there was some serious gap in

his thought processes where most people had empathy, was deeply troubled

by this, and latched on to effective altruism as the ethical framework to

plug into that hole. So much of effective altruism sounds like a con game

that it's easy to think the participants are lying, but Lewis clearly

believes Bankman-Fried is a true believer. He appeared to be sincerely

trying to make money in order to use it to solve existential threats to

society, he does not appear to be motivated by money apart from that goal,

and he was following through (in bizarre and mostly ineffective ways).

I find this particularly believable because effective altruism as a belief

system seems designed to fit Bankman-Fried's personality and justify the

things he wanted to do anyway. Effective altruism says that empathy is

meaningless, emotion is meaningless, and ethical decisions should be made

solely on the basis of expected value: how much return (usually in safety)

does society get for your investment. Effective altruism says that all

the things that Sam Bankman-Fried was bad at were useless and unimportant,

so he could stop feeling bad about his apparent lack of normal human

morality. The only thing that mattered was the thing that he was

exceptionally good at: probabilistic reasoning under uncertainty. And,

critically to the foundation of his business career, effective altruism

gave him access to investors and a recruiting pool of employees, things he

was entirely unsuited to acquiring the normal way.

There's a ton more of this book that I haven't touched on, but this review

is already quite long, so I'll leave you with one more point.

I don't know how true Lewis's portrayal is in all the details. He took

the approach of getting very close to most of the major players in this

drama and largely believing what they said happened, supplemented by

startling access to sources like Bankman-Fried's personal diary and

Caroline Ellison's personal diary. (He also seems to have gotten extensive

information from the personal psychiatrist of most of the people involved;

I'm not sure if there's some reasonable explanation for this, but based

solely on the material in this book, it seems to be a shocking breach of

medical ethics.) But Lewis is a storyteller more than he's a reporter,

and his bias is for telling a great story. It's entirely possible that

the events related here are not entirely true, or are skewed in favor of

making a better story. It's certainly true that they're not the complete

story.

But, that said, I think a book like this is a useful counterweight to the

human tendency to believe in moral villains. This is, frustratingly, a

counterweight extended almost exclusively to higher-class white people

like Bankman-Fried. This is infuriating, but that doesn't make it wrong.

It means we should extend that analysis to more people.

Once FTX collapsed, a lot of people became very invested in the idea that

Bankman-Fried was a straightforward embezzler. Either he intended from

the start to steal everyone's money or, more likely, he started losing

money, panicked, and stole customer money to cover the hole. Lots of

people in history have done exactly that, and lots of people involved in

cryptocurrency have tenuous attachments to ethics, so this is a believable

story. But people are complicated, and there's also truth in the maxim

that every villain is the hero of their own story. Lewis is after a less

boring story than "the crook stole everyone's money," and that leads to

some bias. But sometimes the less boring story is also true.

Here's the thing: even if Sam Bankman-Fried never intended to take any

money, he clearly did intend to mix customer money with Alameda Research

funds. In Lewis's account, he never truly believed in them as separate

things. He didn't care about following accounting or reporting rules; he

thought they were boring nonsense that got in his way. There is obvious

criminal intent here in any reading of the story, so I don't think Lewis's

more complex story would let him escape prosecution. He refused to follow

the rules, and as a result a lot of people lost a lot of money. I think

it's a useful exercise to leave mental space for the possibility that he

had far less obvious reasons for those actions than that he was a simple

thief, while still enforcing the laws that he quite obviously violated.

This book was great. If you like Lewis's style, this was some of the best

entertainment I've read in a while. Highly recommended; if you are at all

interested in this saga, I think this is a must-read.

Rating: 9 out of 10

I was working on what looked like a good pattern for a pair of

jeans-shaped trousers, and I knew I wasn't happy with 200-ish g/m²

cotton-linen for general use outside of deep summer, but I didn't have a

source for proper denim either (I had been low-key looking for it for a

long time).

Then one day I looked at an article I had saved about fabric shops that

sell technical fabric and while window-shopping on one I found that they

had a decent selection of denim in a decent weight.

I decided it was a sign, and decided to buy the two heaviest denim they

had: a

The shop sent everything very quickly, the courier took their time (oh,

well) but eventually delivered my fabric on a sunny enough day that I

could wash it and start as soon as possible on the first pair.

The pattern I did in linen was a bit too close-fitting, but I was afraid I had

widened it a bit too much, so I did the first pair in the 100% cotton

denim. Sewing them took me about a week of early mornings and late

afternoons, excluding the weekend, and my worries proved false: they

were mostly just fine.

The only bit that could have been a bit better is the waistband, which

is a tiny bit too wide on the back: it's designed to be so for comfort,

but the next time I should pull the elastic a bit more, so that it stays

closer to the body.

I wore those jeans daily for the rest of the week, and confirmed that

they were indeed comfortable and the pattern was ok, so on the next

Monday I started to cut the elastic denim.

I decided to cut and sew two pairs, assembly-line style, using the

shaped waistband for one of them and the straight one for the other one.

I started working on them on a Monday, and on that week I had a couple

of days when I just couldn't, plus I completely skipped sewing on the

weekend, but on Tuesday the next week one pair was ready and could be

worn, and the other one only needed small finishes.

And I have to say, I'm really, really happy with the ones with a shaped

waistband in elastic denim, as they fit even better than the ones with a

straight waistband gathered with elastic. Cutting it requires more

fabric, but I think it's definitely worth it.

But it will be a problem for a later time: right now three pairs of

jeans are a good number to keep in rotation, and I hope I won't have to

sew jeans for myself for quite some time.

I think that the leftovers of plain denim will be used for a skirt or

something else, and as for the leftovers of elastic denim, well, there

aren't a lot left, but what else I did with them is the topic for

another post.

Thanks to the fact that they are all slightly different, I've started to

keep track of the times when I wash each pair, and hopefully I will be

able to see whether the elastic denim is significantly less durable than

the regular, or the added weight compensates for it somewhat. I'm not

sure I'll manage to remember about saving the data until they get worn,

but if I do it will be interesting to know.

Oh, and I say I've finished working on jeans and everything, but I still

haven't sewn the belt loops to the third pair. And I'm currently wearing

them. It's a sewist tradition, or something. :D

This is a post I wrote in June 2022, but did not publish back then.

After first publishing it in December 2023, a perfectionist insecure

part of me unpublished it again. After receiving positive feedback, I

slightly amended it and am republishing it now.

In this post, I talk about unpaid work in F/LOSS, taking hackathons as an

example, and why, in my opinion, the expectation of volunteer work

is hurting diversity.

Disclaimer: I don't have all the answers, only some ideas and questions.

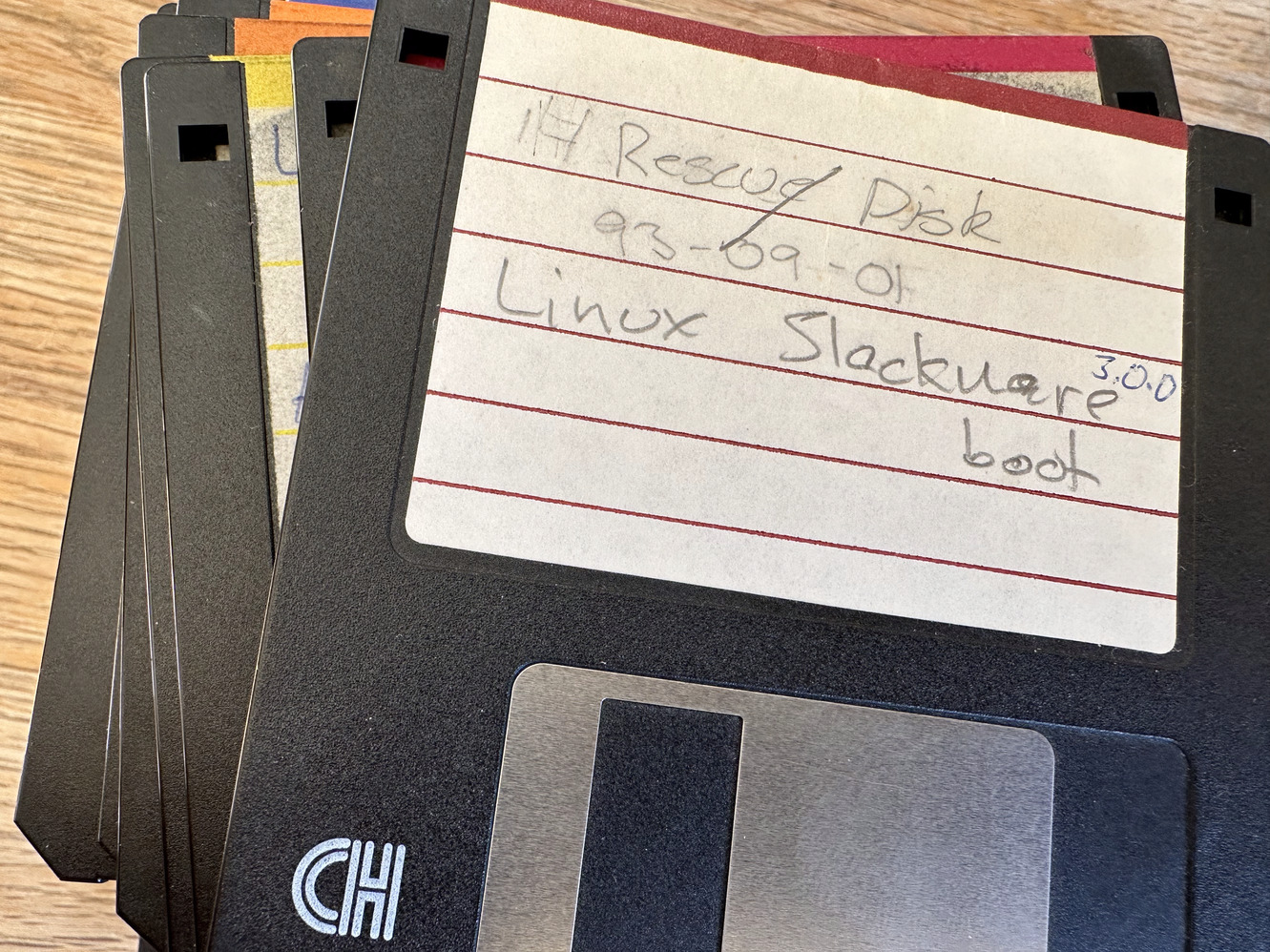

One of my earlier Slackware install disk sets, kept for nostalgic reasons.

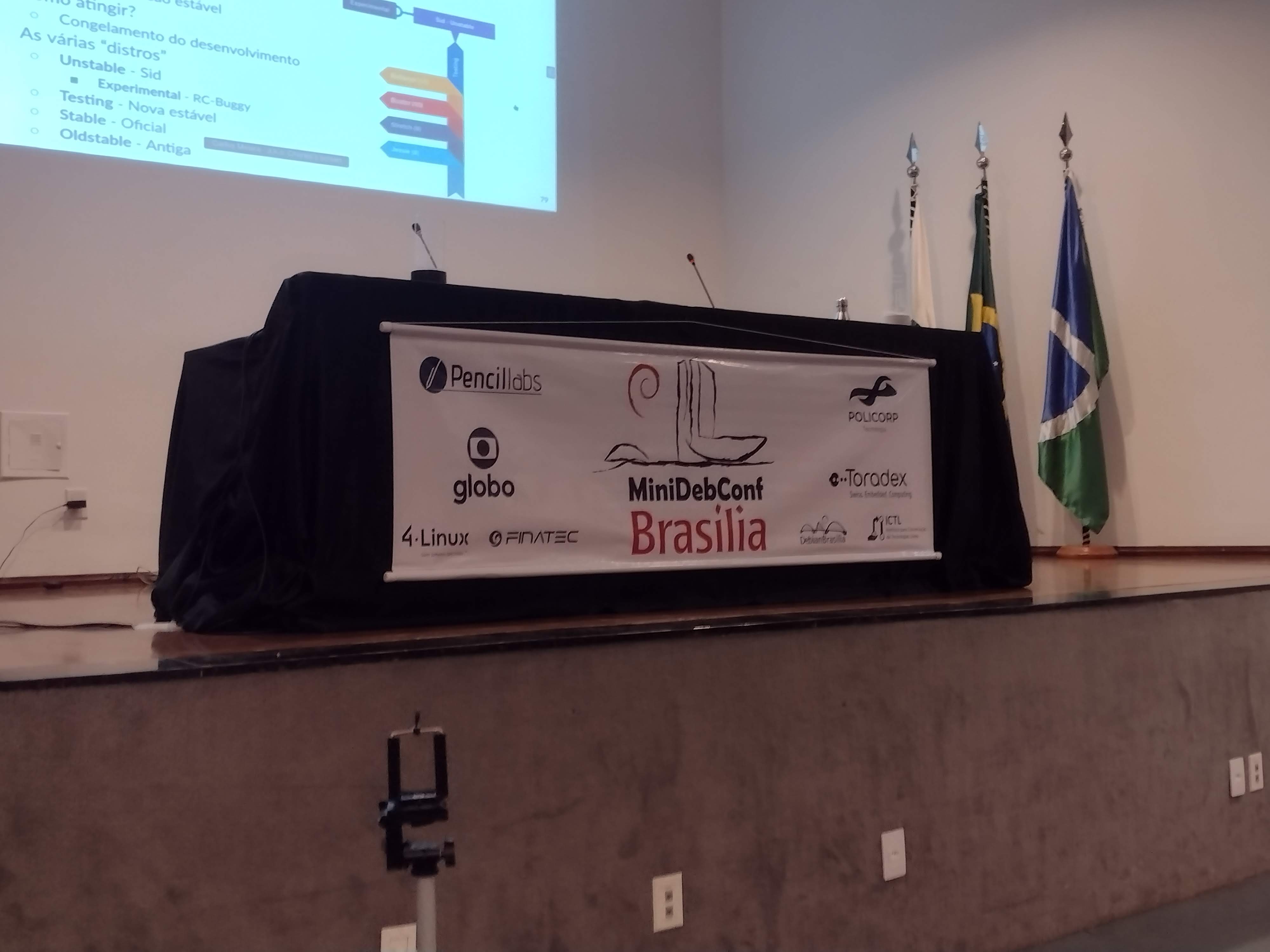

One of my earlier Slackware install disk sets, kept for nostalgic reasons. No per odo de 25 a 27 de maio, Bras lia foi palco da

From May 25 to 27, Brasília hosted the

Activities

The MiniDebConf program was intense and diverse. On May 25 and 26

(Thursday and Friday), we had talks, debates, workshops, and many hands-on

activities. On the 27th (Saturday), the Hacking Day took place, a special

occasion when Debian contributors got together to work jointly on

various aspects of the project. This was the Brazilian version of DebCamp, the

tradition that precedes DebConf. On that day, we prioritized hands-on

contributions to the project, such as software packaging, translations, key

signing, an install fest, and the Bug Squashing Party.

Edition numbers

The event's numbers are impressive and show the community's engagement with

Debian. We had 236 registered attendees, 20 submitted talks, 14 volunteers,

and 125 check-ins. In addition, the hands-on activities produced

significant results, such as 7 new Debian GNU/Linux installations,

18 packages updated in the official Debian repository by

participants, and 7 new contributors added to the translation team.

We also highlight the community's remote participation through

live streams. Analytics data show that our site received 7,058

views in total, with 2,079 views on the main page (which featured

the support of our sponsors), 3,042 views on the schedule

page, and 104 views on the sponsors page. We recorded 922

unique users during the event.

In the

Photos and videos

To relive the best moments of the event, photos and videos are available.

The photos can be accessed at:

MiniDebConf Brasília 2023 was a milestone for the Debian community, demonstrating

the power of collaboration and Free Software. We hope everyone

enjoyed this enriching gathering and will keep participating actively

in the Debian Project's upcoming initiatives. Together, we can make a difference!

But I'm drifting from the topic/movie.

Büchel Tivoli light that didn't work

Cable for the throttle port

Axa 606 E6-48 light

Axa Spark light

Front light

Rear light

I have mentioned several times in this blog, as well as by other

communication means, that I am very happy with the laptop I bought

(used) about a year and a half ago:

Yes, I knew from the very beginning that using this laptop would pose

a challenge to me in many ways, as full hardware support for ARM

laptops is nowhere near as easy as for plain boring x86 systems. But the

advantages far outweigh the inconvenience (i.e.