This is my report from the Netfilter Workshop 2022. The event was held on 2022-10-20/2022-10-21 in Seville, and the venue was the offices of Zevenet. We started on Thursday with Pablo Neira (head of the project) giving a short welcome / opening speech. The previous iteration of this event was held virtually in 2020, two years ago.

In the year 2021 we were unable to meet either in person or online.

This year, the number of participants was just eight people, and this allowed the setup to be a bit more informal.

We had kind of an un-conference style meeting, in which whoever had something prepared just went ahead and opened

a topic for debate.

In the opening speech, Pablo did a quick recap of the legal problems the Netfilter project had a few years ago, a topic that was settled for good some months ago, in January 2022. There was no news on this front, which was definitely a good thing.

Moving into the technical topics, the workshop proper, Pablo started to comment on the recent developments to

instrument a way to perform inner matching for tunnel protocols. The current implementation supports VXLAN, IPIP,

GRE and GENEVE. Using nftables you can match packet headers that are encapsulated inside these protocols.

He described the design and its goal: a kernel-space setup that allows adding more protocols by just patching userspace. In that sense, more tunnel protocols will be supported soon, such as IP6IP, UDP, and ESP.

Pablo requested our opinion on whether nftables should generate the matching dependencies. For example, if a given tunnel is UDP-based, a dependency match should be there, otherwise the rule won't work as expected. The agreement was to assist the user in the setup when possible and, if not, print clear error messages.

By the way, this inner thing is pure stateless packet filtering. Doing inner-conntracking is an open topic

that will be worked on in the future.
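
To make the idea of stateless inner matching a bit more concrete, here is a minimal Python sketch that peels the VXLAN header off a UDP payload and reads the inner IPv4 source address. It only illustrates what "matching headers encapsulated inside a tunnel" means. It is not how nftables implements it, and the function names and the sample packet builder are made up for this example.

```python
import ipaddress
import struct

VXLAN_HEADER_LEN = 8      # flags (1) + reserved (3) + VNI (3) + reserved (1)
ETHERNET_HEADER_LEN = 14  # dst MAC (6) + src MAC (6) + ethertype (2)
IPV4_MIN_HEADER_LEN = 20
ETHERTYPE_IPV4 = 0x0800


def inner_ipv4_saddr(udp_payload: bytes):
    """Return (vni, inner source address) for a VXLAN payload, or None.

    Hypothetical helper: assumes an untagged inner Ethernet frame
    carrying IPv4, and keeps no state between packets.
    """
    needed = VXLAN_HEADER_LEN + ETHERNET_HEADER_LEN + IPV4_MIN_HEADER_LEN
    if len(udp_payload) < needed:
        return None
    if not udp_payload[0] & 0x08:  # the "I" flag marks the VNI as valid
        return None
    vni = int.from_bytes(udp_payload[4:7], "big")
    eth_start = VXLAN_HEADER_LEN
    (ethertype,) = struct.unpack("!H", udp_payload[eth_start + 12:eth_start + 14])
    if ethertype != ETHERTYPE_IPV4:
        return None
    ip_start = VXLAN_HEADER_LEN + ETHERNET_HEADER_LEN
    saddr = udp_payload[ip_start + 12:ip_start + 16]
    return vni, ipaddress.IPv4Address(saddr)


def build_sample(vni: int, saddr: str, daddr: str) -> bytes:
    """Build a tiny VXLAN payload for the example (checksums left at zero)."""
    vxlan = bytes([0x08, 0, 0, 0]) + vni.to_bytes(3, "big") + b"\x00"
    eth = b"\x02" * 6 + b"\x04" * 6 + struct.pack("!H", ETHERTYPE_IPV4)
    ip = struct.pack(
        "!BBHHHBBH4s4s",
        0x45, 0, IPV4_MIN_HEADER_LEN, 0, 0, 64, 17, 0,
        ipaddress.IPv4Address(saddr).packed,
        ipaddress.IPv4Address(daddr).packed,
    )
    return vxlan + eth + ip
```

Calling `inner_ipv4_saddr(build_sample(42, "192.0.2.1", "192.0.2.2"))` yields the VNI together with the inner source address, which is the kind of value a stateless inner match would compare against.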

Pablo continued with the next topic: nftables automatic ruleset optimizations. The times of linear ruleset evaluation are over, but some people have a hard time understanding / creating rulesets that leverage maps, sets, and concatenations. This is where the ruleset optimizations kick in: the optimizer can transform a given ruleset into a more efficient one by using such advanced data structures. This is purely about optimizing the ruleset, not about validating its usefulness, which could be another interesting project.

There were a couple of problems mentioned, however. The ruleset optimizer can be slow, O(n!) in the worst case, and the user needs to use nested syntax. More improvements are to come in the future.
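
As a rough illustration of what such an optimization does, the following Python sketch folds adjacent rules that match on the same fields and share a verdict into a single rule backed by a set of value tuples (in the spirit of a concatenation plus a set). The rule representation and the `optimize` function are assumptions made for this example only, and this is not the algorithm nftables uses.

```python
def optimize(rules):
    """Merge adjacent rules with identical match fields and verdict.

    Each input rule is (matches, verdict), where matches is a tuple of
    (field, value) pairs. Only adjacent rules are merged, so the
    first-match order of the original ruleset is preserved.
    Returns a list of (fields, list_of_value_tuples, verdict).
    """
    optimized = []
    for matches, verdict in rules:
        fields = tuple(field for field, _ in matches)
        values = tuple(value for _, value in matches)
        last = optimized[-1] if optimized else None
        if last and last[0] == fields and last[2] == verdict:
            last[1].append(values)  # grow the set of the previous rule
        else:
            optimized.append((fields, [values], verdict))
    return optimized


# Hypothetical linear ruleset: two accepts on the same fields, then a drop.
linear = [
    ((("ip saddr", "10.0.0.1"), ("tcp dport", 22)), "accept"),
    ((("ip saddr", "10.0.0.2"), ("tcp dport", 80)), "accept"),
    ((("ip saddr", "10.0.0.3"),), "drop"),
]
compact = optimize(linear)
```

Here the two accept rules collapse into one rule with a two-element set, while the drop rule stays on its own because it matches on different fields.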

Next was Stefano Brivio's turn (Red Hat engineer). He had been involved lately in a couple of migrations to nftables, in particular libvirt and KubeVirt. We were pointed to https://libvirt.org/firewall.html, and Stefano walked us through the 3 or 4 different virtual networks that libvirt can create. He evaluated some options to generate efficient rulesets in nftables to instrument such networks, and commented on a couple of ideas: having a null matcher in nftables set expressions, or perhaps having some kind of subsets, something similar to a view in a SQL database. The room spent quite a bit of time debating how the nft_lookup API could be extended to support such new search operations.

We also discussed whether intermediate facilities such as firewalld could provide the abstraction levels that could make developers more comfortable. Using firewalld may also have the advantage that coordination between different system components writing rulesets to nftables is handled by firewalld itself, and developers are freed of the responsibility of doing it right.

Next was Fernando F. Mancera (Red Hat engineer). He wanted to improve error reporting when deleting tables/chains/rules with nftables. In general, there are some inconsistencies in how tables can be deleted (or flushed). And there seems to be no correct way to make a single table go away with all its content in a single command.

The room agreed that the commands destroy table and delete table should be defined consistently, with the following meanings:

- destroy: nuke the table, don't fail if it doesn't exist

- delete: delete the table, but the command will fail if it doesn't exist
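
The difference between the two meanings agreed above can be sketched with a toy in-memory model in Python. The `Ruleset` class and its method names are hypothetical and exist only to contrast the semantics. They are not the nftables API.

```python
class Ruleset:
    """Toy in-memory ruleset used only to contrast the two commands."""

    def __init__(self):
        self.tables = {}

    def add_table(self, name):
        self.tables.setdefault(name, [])

    def delete_table(self, name):
        # delete: the table must exist, otherwise the command fails
        if name not in self.tables:
            raise KeyError(f"no such table: {name}")
        del self.tables[name]

    def destroy_table(self, name):
        # destroy: remove the table with all its content,
        # and succeed silently if it was not there
        self.tables.pop(name, None)
```

With this model, a reload script can call `destroy_table()` unconditionally before recreating a table, while `delete_table()` stays useful when a missing table should be reported as an error.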

This topic diverted into another: how to reload/replace a ruleset but keep stateful information (such as counters).

Next was Phil Sutter (Netfilter coreteam member and Red Hat engineer). He was interested in discussing options to make iptables-nft backward compatible. The use case he brought was simple: what happens if a container running iptables 1.8.7 creates a ruleset with features not supported by 1.8.6? A later container running 1.8.6 may fail to operate.

Phil's first approach was to attach additional metadata to rules to assist older iptables-nft in decoding and printing the ruleset. But in general, there are no obvious or easy solutions to this problem. Some people are mixing different tooling versions, and there is no way all cases can be predicted/covered. iptables-nft already refuses to work in some of the most basic failure scenarios.

Another way to approach the issue could be to introduce some kind of support to print raw expressions in iptables-nft, like -m nft xyz, which feels ugly, but may work. We also explored playing with the semantics of release version numbers. And another idea: store strings in the nft rule userdata area with the equivalent matching information for older iptables-nft.

In fact, what Phil may have been looking for is not backwards but forward compatibility. Phil was undecided which path to follow, but perhaps the most common-sense approach is to fall back to a major release version bump (2.x.y) and declare compatibility breakage with older iptables 1.x.y.

That was pretty much it for the first day. We had dinner together and went to get some sleep before the next day.

The second day was opened by Florian Westphal (Netfilter coreteam member and Red Hat engineer). Florian has been

trying to improve nftables performance in kernels with RETPOLINE mitigations enabled. He commented that several

workarounds have been collected over the years to avoid the performance penalty of such mitigations.

The basic strategy is to avoid indirect function calls in the kernel.

Florian also described how BPF programs work around this more effectively. And actually, Florian tried translating nf_hook_slow() to BPF. Some preliminary benchmark results were shown, with about 2% performance improvement in MB/s and PPS. The flowtable infrastructure especially benefits from this approach. The software flowtable infrastructure already offers a 5x performance improvement compared to the classic forwarding path, and the change being researched by Florian would be an addition on top of that.

We then moved into discussing the meeting Florian had with Alexei in Zurich. My personal opinion was that Netfilter offers interesting user-facing interfaces and semantics that BPF does not, whereas BPF may be more performant in certain scenarios. The idea of both things going hand in hand may feel natural for some people. Others also shared my view, but no particular agreement was reached on this topic. Florian will probably continue exploring options on that front.

The next topic was opened by Fernando. He wanted to discuss Netfilter involvement in Google Summer of Code and Outreachy.

Pablo had some personal stuff going on last year that prevented him from engaging in such projects. After all, GSoC is

not fundamental or a priority for Netfilter. Also, Pablo mentioned the lack of support from others in the project for

mentoring activities. There was no particular decision made here. Netfilter may be present again in such initiatives

in the future, perhaps under the umbrella of other organizations.

Again, Fernando proposed the next topic: nftables JSON support. Fernando shared his plan of going over all features and introducing programmatic tests for them. He also mentioned that the nftables wiki was incomplete and couldn't be used as a reference for missing tests. Phil suggested running the nftables python test-suite in JSON mode, which should complain about missing features. The py test suite should cover pretty much all statements and variations on how the nftables expressions are invoked.

Next, Phil commented on nftables xtables support, that is, supporting legacy xtables extensions in nftables. The most prominent problem was that some translations had some corner cases that resulted in a listed ruleset that couldn't be fed back into the kernel. Also, iptables-to-nftables translations can be sloppy, and the resulting rule won't work in some cases. In general, feeding the output of nft list ruleset back into nft -f may fail for rulesets created by iptables-nft, and there is no trivial way to solve it.

Phil also commented on potential iptables-tests.py speed-ups. Running the test suite may take a very long time depending on the hardware. Phil will try to re-architect it so that it runs faster. Some alternatives had been explored, including collecting all rules into a single iptables-restore run, instead of hundreds of individual iptables calls.

The next topic was documentation on the nftables wiki. Phil is interested in having all nftables code-flows documented, and presented some improvements on that front. We are trying to organize all developer-oriented docs on a mediawiki portal, but the extension was not active yet. Since I worked at the Wikimedia Foundation, the whole room stared at me, so in the end I kind of committed to exploring and enabling the mediawiki portal extension. Note to self: is this perhaps https://www.mediawiki.org/wiki/Portals ?

The next presentation was by Pablo. He had a list of assorted topics for quick review and comment.

- We discussed nftables accept/drop semantics. People who get two or more rulesets from different software are requesting additional semantics here. A typical case is fail2ban integration. One option is quick accept (no further evaluation if accepted) and the other is lazy drop (don't actually drop the packet, but delay the decision until the whole ruleset has been evaluated). There was no clear way to move forward with this.

- A debate on nft userspace memory usage followed. Some people are running nftables on low-end devices with very little memory (such as 128 MB). Pablo was exploring a potential solution: introducing struct constant_expr, which can reduce memory usage by 12.5%.

- Next we talked about repository licensing (or better, relicensing to GPLv2+). Pablo went over a list of files in the nftables tree which had diverging licenses. Everyone in the room agreed on this relicensing effort. A mention of the libreadline situation was made.

- Another quick topic: a bogus EEXIST in nft_rbtree. Pablo & Stefano to work on a patch.

- Next one was conntrack early drop in flowtable. Pablo is studying use cases for some legitimate UDP unidirectional

flows (like RTP traffic).

- Pablo and Stefano discussed pipapo not being atomic on updates. Stefano already looked into it, and one of the ideas

was to introduce a new commit API for sets.

- The last of the quick topics was an idea to have a global table in nftables. Or some global items, like sets. Folk

in the community keep asking for this. Some ideas were discussed, like perhaps adding a family agnostic family. But

then there would be a challenge: nftables would need to generate byte code that works in any of the hooks.

There was no immediate way of addressing this. The idea of having templated tables/sets circulated again as a way

of reusing data across namespaces/families.
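
Going back to the accept/drop semantics from the first quick topic, a small Python model may help to see what quick accept and lazy drop would change. Nothing here reflects an agreed design, since the room did not settle on one. In particular, letting a later quick accept override a pending lazy drop is purely an assumption of this sketch, and the chain functions, the `BANNED` set, and the packet dictionaries are hypothetical.

```python
def evaluate(chains, packet, quick_accept=False, lazy_drop=False):
    """Run a packet through several base chains and return the final verdict.

    Each chain is a function returning "accept", "drop" or None (no opinion).
    """
    pending_drop = False
    for chain in chains:
        verdict = chain(packet)
        if verdict == "drop":
            if not lazy_drop:
                return "drop"    # today: the first drop is final
            pending_drop = True  # lazy drop: remember it, keep evaluating
        elif verdict == "accept" and quick_accept:
            return "accept"      # quick accept: stop evaluating right here
    # Assumption: a pending lazy drop applies if nothing quick-accepted.
    return "drop" if pending_drop else "accept"


BANNED = {"198.51.100.7"}


def fail2ban_chain(packet):
    """Hypothetical chain owned by a banning tool."""
    return "drop" if packet["saddr"] in BANNED else None


def allow_ssh_chain(packet):
    """Hypothetical chain owned by another piece of software."""
    return "accept" if packet["dport"] == 22 else None
```

With the current semantics, an accept in `allow_ssh_chain` does not protect the packet from a later drop in `fail2ban_chain`. With `quick_accept=True` the order of the chains decides, and combining both flags lets the accept win regardless of the order.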

Following this, a new topic was introduced by Stefano. He wanted to talk about nft_set_pipapo, documentation, what to do next, etc. He gave a nice explanation of how the pipapo algorithm works for element inserts, lookups, and deletion. The source code is pretty well documented, by the way. He showed performance measurements of different data types being stored in the structure. After some lengthy debate on how to introduce changes without breaking usage for users, he declared some action items: writing more docs, addressing problems with non-atomic set reloads, and a potential rework of nft_rbtree.

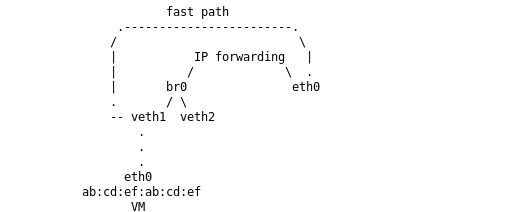

After that, the next topic was "kubernetes & netfilter", also by Stefano. Actually, this topic was very similar to what we had already discussed regarding libvirt. Developers want to reduce packet matching effort, but also often don't leverage nftables' most performant features, like sets, maps or concatenations.

Some Red Hat developers are already working on replacing everything with native nftables & firewalld integrations. But some rule generators are very bad. Kubernetes (kube-proxy) is a known case. Developers simply won't learn how to code better ruleset generators. There was a good question floating around: What are people missing on first encounter with nftables?

The Netfilter project doesn't have a training or marketing department or something like that. We cannot force-educate developers on how to use nftables in the right way. Perhaps we need to create a set of dedicated guidelines, or best practices, in the wiki for app developers that rely on nftables. Jozsef Kadlecsik (Netfilter coreteam) supported this idea, and suggested going beyond: such documents should be written exclusively from the nftables point of view: stop approaching the docs as a comparison to the old iptables semantics.

Related to that last topic, next was Laura García (Zevenet engineer, and venue host). She shared the same information as she presented in the Kubernetes network SIG in August 2020. She walked us through nftlb and kube-nftlb, a proof-of-concept replacement for kube-proxy based on nftlb that can outperform it.

For whatever reason, kube-nftlb wasn't adopted by the upstream kubernetes community.

She also covered the latest changes to nftlb and some missing features, such as integration with nftables egress. nftlb is being extended to be a full proxy service and a more robust overall solution for service abstractions.

In a nutshell, nftlb uses a templated ruleset and only adds elements to sets, which is exactly the right usage of the nftables framework. Some other projects should follow its example. The performance numbers are impressive, and from the early days it was clear that it was outperforming classical LVS-DSR by 10x.
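
The "templated ruleset plus set elements" pattern can be modeled in a few lines of Python: the decision logic is fixed once, and runtime changes only touch the data it looks up. This is a sketch of the pattern, not nftlb code. The `TemplatedBalancer` class, its methods, and the use of CRC32 as the flow hash are all assumptions made for the example.

```python
import zlib


class TemplatedBalancer:
    """Fixed decision logic; only the service map changes at runtime."""

    def __init__(self):
        # (virtual IP, port) -> list of backend addresses
        self.services = {}

    def add_backend(self, vip, port, backend):
        # Equivalent in spirit to adding one element to a set or map:
        # no rule is rewritten, only data is added.
        self.services.setdefault((vip, port), []).append(backend)

    def remove_backend(self, vip, port, backend):
        backends = self.services.get((vip, port), [])
        if backend in backends:
            backends.remove(backend)

    def pick(self, vip, port, saddr, sport):
        """The 'template': one lookup plus a stable hash of the flow."""
        backends = self.services.get((vip, port))
        if not backends:
            return None
        flow = f"{saddr}:{sport}".encode()
        return backends[zlib.crc32(flow) % len(backends)]
```

Adding or removing a backend never changes `pick()`, which is the property that makes this approach cheap to update compared to regenerating a long list of rules for every service change.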

I used this opportunity to bring up a topic that I wanted to discuss. I've seen some SRE coworkers talking about katran as a replacement for traditional LVS setups. This software is an XDP/BPF based solution for load balancing. I was puzzled about what this software had to offer versus, for example, nftlb or any other nftables-based solutions. I commented on the highlights of katran, and we discussed the nftables equivalents. nftlb is a simple daemon which does everything using a JSON-enabled REST API. It is already packaged into Debian, ready to use, whereas katran feels more like a collection of steps that you need to run in a certain order to get it working. All the hashing, caching, HA without state sharing, and backend weight selection features of katran are already present in nftlb.

To work in a pure L3/ToR datacenter network setting, katran uses IPIP encapsulation. They can't just mangle the MAC address as in traditional DSR because the backend server is on a different L3 domain. It turns out nftables has an nft_tunnel expression that can do this encapsulation for complete feature parity. It is only available in the kernel, but it can be made available easily in the userspace utility too.

Also, we discussed some limitations of katran, for example, the inability to handle IP fragmentation, IP options, and potentially others not documented anywhere. This seems to be common with XDP/BPF programs, because handling all possible network scenarios would over-complicate the BPF programs, and at that point you are probably better off using the normal Linux network stack and nftables.

In summary, we agreed that nftlb can pretty much offer the same as katran, in a more flexible way.

Finally, after many interesting debates over two days, the workshop ended. We all agreed on the need for extending

it to 3 days next time, since 2 days feel too intense and too short for all the topics worth discussing.

That's all on my side! I really enjoyed this Netfilter workshop round.

This blog post shares my thoughts on attending Kubecon and CloudNativeCon 2024 Europe in Paris. It was my third time at this conference, and it felt bigger than last year's in Amsterdam. Apparently it had an impact on public transport. I missed part of the opening keynote because of the extremely busy rush hour tram in Paris.

On Artificial Intelligence, Machine Learning and GPUs

Talks about AI, ML, and GPUs were everywhere this year. While it wasn't my main interest, I did learn about GPU resource sharing and power usage on Kubernetes. There were also ideas about offering Models-as-a-Service, which could be cool for Wikimedia Toolforge in the future.

On security, policy and authentication

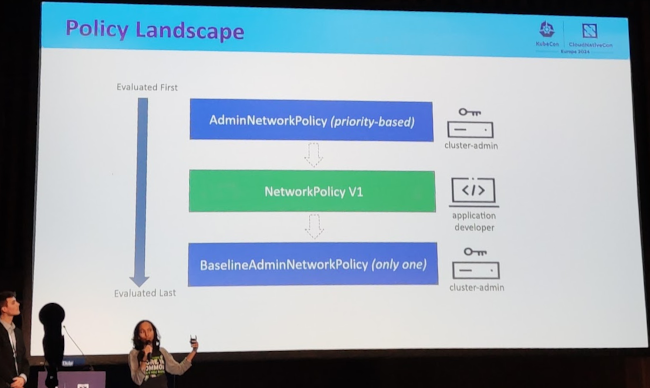

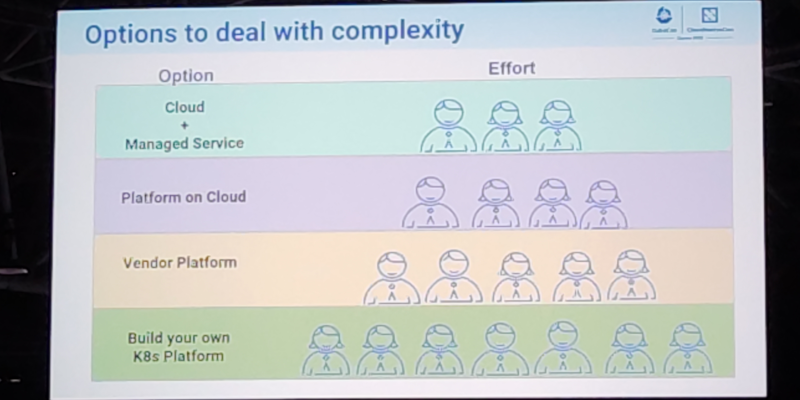

This was probably the main interest for me in the event, given Wikimedia Toolforge was about to migrate away from Pod

Security Policy, and we were currently evaluating different alternatives.

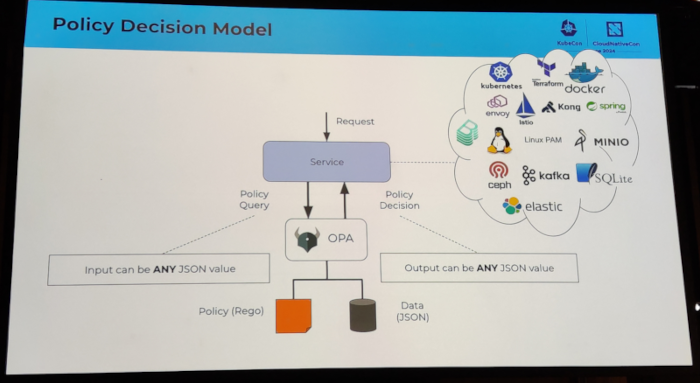

In contrast to my previous attendances to Kubecon, where three policy agents were present in the program schedule (Kyverno, Kubewarden and OpenPolicyAgent (OPA)), this time only OPA had relevant sessions.

One surprising bit I got from one of the OPA sessions was that it could work to authorize Linux PAM sessions. Could this be useful for Wikimedia Toolforge?

I attended several sessions related to authentication topics. I discovered the Keycloak software, which looks very promising. I also attended an OAuth2 session which I had a hard time following, because I clearly lacked some additional knowledge about how OAuth2 works internally.

I also attended a couple of sessions that ended up being vendor sales talks.

On container image builds, harbor registry, etc

This topic was also of interest to me because, again, it is a core part of Wikimedia Toolforge.

I attended a couple of sessions regarding container image builds, including topics like general best practices, image

minimization, and buildpacks. I learned about kpack, which at first sight felt like a nice simplification of how the

Toolforge build service was implemented.

I also attended a session by the Harbor project maintainers where they shared some valuable information on things happening soon or in the future, for example:

I very recently missed some semantics for limiting the number of open connections per namespace, see Phabricator T356164: [toolforge] several tools get periods of connection refused (104) when connecting to wikis. This functionality should be available in the lower level tools, I mean Netfilter. I may submit a proposal upstream at some point, so they consider adding this to the Kubernetes API.

Final notes

In general, I believe I learned many things, and perhaps even more importantly I re-learned some stuff I had forgotten because of lack of daily exposure. I'm really happy that the cloud native way of thinking was reinforced in me, which I still need because most of my muscle memory to approach systems architecture and engineering is from the old pre-cloud days. That being said, I felt less engaged with the content of the conference schedule compared to last year. I don't know if the schedule itself was less interesting, or if I'm losing interest.

Finally, not an official track in the conference, but we met a bunch of folks from Wikimedia Deutschland. We had a really nice time talking about how wikibase.cloud uses Kubernetes, whether they could run in Wikimedia Cloud Services, and why structured data is so nice.

In October 2023, I departed from the

I recently started playing with Terraform/

Last month, in October 2023, I started a new job as a Senior Site Reliability Engineer at the Germany-headquartered technology company

During the weekend of 19-23 May 2023 I attended the

Despite the sessions, the main purpose of the hackathon was, well, hacking. While I was in the hacking space for more than 12 hours each day, my ability to get things done was greatly reduced by the constant conversations, help requests, and other social interactions with the folks. Don't get me wrong, I embraced that reality with joy, because the social bonding aspect of it is perhaps the main reason why we gathered in person instead of virtually.

That being said, this is a rough list of what I did:

It wasn't the first Wikimedia Hackathon for me, and I felt the same as in previous iterations: it was a welcoming space, and I was surrounded by friends and nice human beings. I ended the event with a profound feeling of being privileged, because I was part of the Wikimedia movement, and because I was invited to participate in it.

This post serves as a report from my attendance to Kubecon and CloudNativeCon 2023 Europe that took place in Amsterdam in April 2023. It was my second time physically attending this conference; the first one was in Austin, Texas (USA) in 2017. I also attended once in a virtual fashion.

The content here is mostly generated for the sake of my own recollection and learnings, and is written from

the notes I took during the event.

The very first session was the opening keynote, which reunited the whole crowd to bootstrap the event and share the excitement about the days ahead. Some astonishing numbers were announced: there were more than 10.000 people attending, and apparently it could confidently be said that it was the largest open source technology conference taking place in Europe in recent times.

It was also communicated that the next couple of iterations of the event would be run in China in September 2023 and Paris in March 2024.

More numbers: the CNCF was hosting about 159 projects, involving 1300 maintainers and about 200.000 contributors. The cloud-native community is ever-increasing, and there seems to be a strong trend in the industry for cloud-native technology adoption and all things related to PaaS and IaaS.

The event program had different tracks, and in each one there was an interesting mix of low-level and higher level talks for a variety of audiences. On many occasions I found that reading the talk title alone was not enough to know in advance if a talk was a 101 kind of thing or for experienced engineers. But unlike in previous editions, I didn't have the feeling that the purpose of the conference was to try selling me anything. Obviously, speakers would make sure to mention, or highlight in a subtle way, the involvement of a given company in a given solution or piece of the ecosystem. But it was non-invasive and fair enough for me.

On a different note, I found the breakout rooms to be often small. I think there were only a couple of rooms that could accommodate more than 500 people, which is a fairly small allowance for 10k attendees. I realized with frustration that the more interesting talks were immediately fully booked, with people waiting in line some 45 minutes before the session time. Because of this, I missed a few important sessions that I'll hopefully watch online later.

Finally, on a more technical side, I've learned many things, which instead of grouping by session I'll group by topic, given how some subjects were mentioned in several talks.

On gitops and CI/CD pipelines

Most of the mentions went to

On etcd, performance and resource management

I attended a talk focused on etcd performance tuning that was very encouraging. They were basically talking

about the

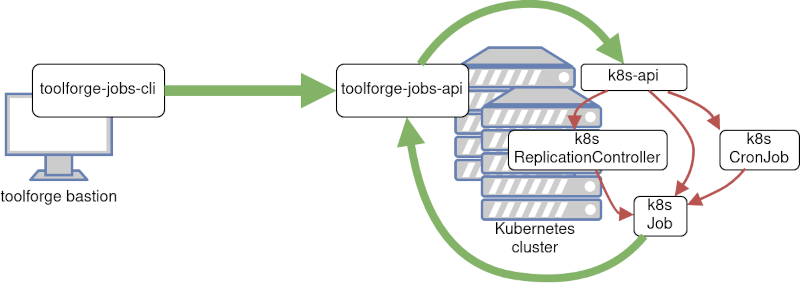

On jobs

I attended a couple of talks that were related to HPC/grid-like usages of Kubernetes. I was truly impressed

by some folks out there who were using Kubernetes Jobs on massive scales, such as to train machine learning

models and other fancy AI projects.

It is acknowledged in the community that the early implementations of things like Jobs and CronJobs had some

limitations that are now gone, or at least greatly reduced. Some new functionalities have been added as

well. Indexed Jobs, for example, give each Pod of a Job a number (index) so it can process a chunk of a larger

batch of data based on that index. That would allow for full grid-like features like sequential (or again,

indexed) processing, coordination between Jobs and more graceful Job restarts. My first reaction was: Is that

something we would like to enable in
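To make the Indexed Jobs idea a bit more concrete, here is a minimal sketch in C of how a worker could pick its chunk of a larger batch from its index. It assumes the index arrives in the `JOB_COMPLETION_INDEX` environment variable, which is how Kubernetes exposes it to Pods of an Indexed Job. The function names, the `struct chunk` type and the even-split policy are my own illustration and were not shown in any of the talks.

```c
#include <assert.h>
#include <stdlib.h>

/* Half-open range [start, end) of items assigned to one worker. */
struct chunk {
    long start;
    long end;
};

/*
 * Split `total` items as evenly as possible across `completions` workers
 * and return the range that belongs to worker `index` (0-based).
 * Hypothetical helper: the splitting policy is an assumption.
 */
struct chunk chunk_for_index(long total, long completions, long index)
{
    assert(total >= 0 && completions > 0);
    assert(index >= 0 && index < completions);

    long base = total / completions;   /* items every worker gets */
    long extra = total % completions;  /* first `extra` workers get one more */
    long start = index * base + (index < extra ? index : extra);
    long len = base + (index < extra ? 1 : 0);

    return (struct chunk){ start, start + len };
}

/*
 * Read this Pod's completion index from the environment.
 * Returns -1 when the variable is missing or is not a non-negative integer.
 */
long completion_index_from_env(void)
{
    const char *raw = getenv("JOB_COMPLETION_INDEX");
    char *end = NULL;

    if (raw == NULL || *raw == '\0')
        return -1;

    long value = strtol(raw, &end, 10);
    return (*end == '\0' && value >= 0) ? value : -1;
}
```

With 10 items and 3 completions, for instance, index 0 would get items 0 to 3, index 1 items 4 to 6, and index 2 items 7 to 9. The total number of completions is not taken from the environment here, so a real worker would need to receive it some other way, for example as an argument.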

I read somewhere a nice meme about Linux: Do you want an operating system or do you want an adventure? I love

it, because it is so true. What you are about to read is my adventure to set a usable screen resolution in a fresh

Debian testing installation.

The context is that I have two different Lenovo Thinkpad laptops with 16-inch screens and nvidia graphics cards. They are both

installed with the latest Debian testing. I use the closed-source nvidia drivers (they seem to work better than the nouveau

module). The display manager and desktop environment that I use are lightdm + XFCE4. The monitor native resolution in both machines

is very high:

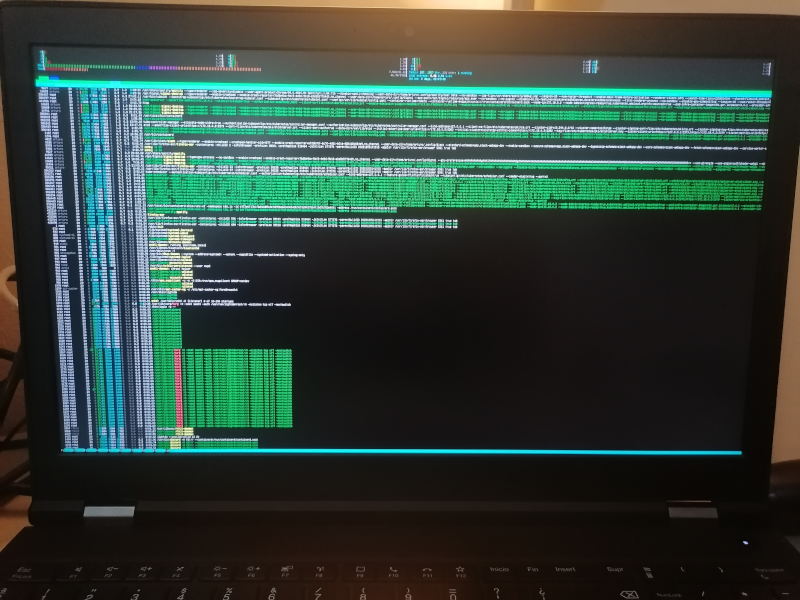

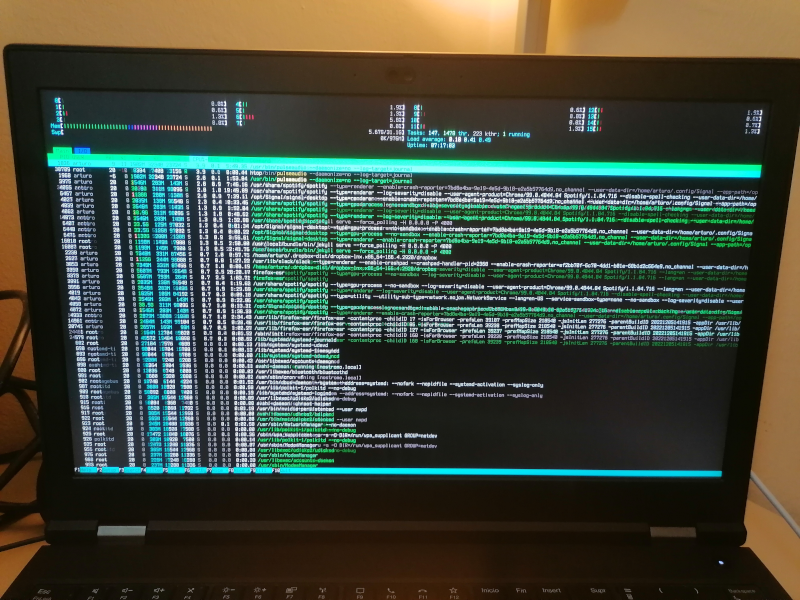

Everything in the system is affected by this:

The truth is the solution is satisfying only to a degree. I'm a person with good eyesight and can work with

these slightly larger fonts. I'm not sure if I can get larger fonts using this method, honestly.

After some searching, I discovered that some folks had already managed to describe the problem in detail and

filed a proper bug report in Debian, see

A few days ago, my home network got a refresh that resulted in the enablement of some next-generation

technologies for me and my family. Well, next-generation or current-generation, depending on your point of view.

Per the ISP standards in Spain (my country), what I'll describe next is literally the most and latest you can get.

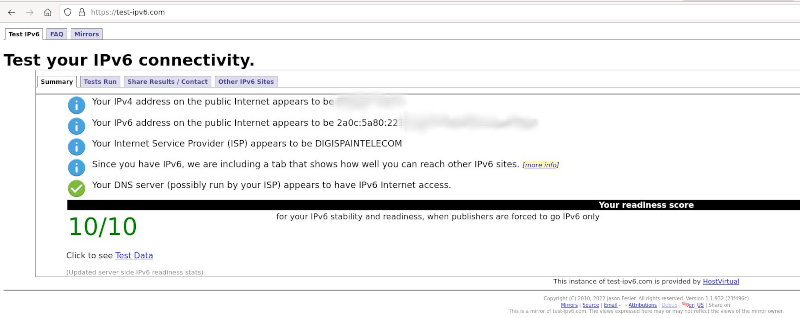

The post title spoiled it already. I now have a 10G internet uplink and native IPv6 since I changed my ISP to

If you are curious, this was the IPv6 prefix whois information:

I'm trying to replace my old OpenPGP key with a new one. The old key wasn't compromised or lost or anything

bad. It is still valid, but I plan to get rid of it soon. It was created in 2013.

The new key fingerprint is:

This is my report from the Netfilter Workshop 2022. The event was held on 2022-10-20/2022-10-21 in Seville, and the venue

was the offices of

The second day was opened by Florian Westphal (Netfilter coreteam member and Red Hat engineer). Florian has been

trying to improve nftables performance in kernels with RETPOLINE mitigations enabled. He commented that several

workarounds have been collected over the years to avoid the performance penalty of such mitigations.

The basic strategy is to avoid indirect function calls in the kernel.
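As a rough illustration of that strategy, here is a small userspace sketch in C of the general pattern: compare a function pointer against the most likely targets and call those directly, falling back to the indirect call only for unknown handlers. This is not the actual nftables code and nothing like it was shown at the workshop. All names (`eval_fn`, `eval_double`, `eval_negate`, `eval_add_one`, `eval_dispatch`) are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Signature shared by all (hypothetical) expression evaluators. */
typedef int (*eval_fn)(int value);

/* Two evaluators standing in for the handlers seen most often on the hot path. */
int eval_double(int value) { return value * 2; }
int eval_negate(int value) { return -value; }

/* A less common handler that is not on the fast-path list below. */
int eval_add_one(int value) { return value + 1; }

/*
 * Dispatch while avoiding the indirect call for the common cases.
 * When the pointer matches a known target, the call is written as a
 * direct call, which does not go through the retpoline thunk.
 * Only unknown handlers take the (slower) indirect call at the end.
 */
int eval_dispatch(eval_fn fn, int value)
{
    assert(fn != NULL);

    if (fn == eval_double)
        return eval_double(value);
    if (fn == eval_negate)
        return eval_negate(value);

    return fn(value); /* fallback: genuine indirect call */
}
```

The trade-off is a few extra pointer comparisons on every call, which should only pay off when the listed targets really are the common ones. As far as I know, the kernel offers helper macros in the same spirit in `include/linux/indirect_call_wrapper.h`.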

Florian also described how BPF programs work around this more effectively. And actually, Florian tried translating

Finally, after many interesting debates over two days, the workshop ended. We all agreed on the need for extending

it to 3 days next time, since 2 days feel too intense and too short for all the topics worth discussing.

That's all on my side! I really enjoyed this Netfilter workshop round.

This post was originally published in the

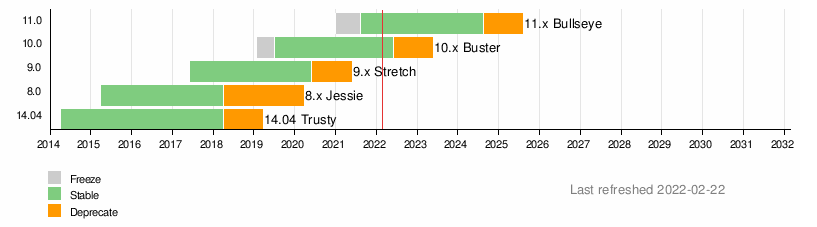

Back then, our reasoning was that skipping to Debian 11 Bullseye would be more difficult for our users, especially because of the greater jump in version

numbers for the underlying runtimes. Additionally, all the migration work started before Debian 11 Bullseye was released. Our original intention was

for the migration to be completed before the release. For a couple of reasons the project was delayed, and when it was time to restart the project we

decided to continue with the original idea.

We had some work done to get Debian 10 Buster working correctly with the grid, and supporting Debian 11 Bullseye would require an additional effort. We

didn't even check if Grid Engine could be installed in the latest Debian release. For the grid, in general, the engineering effort to do an N+1 upgrade is

lower than doing an N+2 upgrade. If we had tried an N+2 upgrade directly, things would have been much slower and more difficult for us, and for our users.

In that sense, our conclusion was to not skip Debian 10 Buster.

This post was originally published in the

In the last few months I presented several talks. Topics ranged from a round table on free

software, to sharing some of my work as SRE in the Cloud Services team at the Wikimedia Foundation.

For some of them the videos are now published, so I would like to write a reference here, mostly as

a way to collect such events for my own record. Isn't that what

Stefano Brivio commented on several open topics for nftables that he is interested in working on.

One of them is a set of issues related to concatenations + vmap. He also addressed concerns with

people's expectations when migrating from ipset to nftables. There are several corner features in

ipset that aren't currently supported in nftables, and we should document them. Stefano is also

wondering about some tools to help in the migration, such as a translation layer like the one in place

for iptables. Eric Garver commented that there are a couple of semantics that will not be suitable for

translation, such as global sets, or sets of sets. But ipset is way simpler than iptables, so a

translation mechanism should probably be created. In any case, there was agreement that anything

that helps people migrate is more than welcome, even if it doesn't support 100% of the use cases.

Stefano is planning to write documentation in the
