Josselin Mouette is one of the leaders of the pkg-gnome team. He makes sound technical decisions and doesn't fear writing code to work around upstream issues. He deserves kudos for the work he has put into packaging GNOME over the years. He can also be very sarcastic (sometimes he even enjoys participating in flamewars on Debian lists), and there are quite a few topics where we have long agreed to disagree. But this kind of diversity is also what makes Debian such an interesting place.

Read on to learn more about the pkg-gnome team, its plans for Wheezy, Josselin's opinion on the GNOME 3 switch, and much more.

Raphael: Who are you?

Josselin: I am a 31-year-old Linux systems engineer. I started in life with physics, which I studied at the ENS Lyon. I started a thesis on experimental and numerical models for optoelectronics, but when it became clear that research was not for me, I abandoned it and accepted a job at the CEA, which holds the largest computing center in Europe. Working on these machines has been the most awesome job ever (except for it being near Paris). After that I worked a bit on system monitoring technologies.

I am married, currently living in Lyon, and working for EDF (the French historical electricity company) on scientific workstations using Debian. EDF is using Debian on more than a thousand workstations and holds the fastest Debian supercomputer in the world (200 Tflops), which makes it another obvious place for Debian developers.

Raphael: How did you start contributing to Debian?

Josselin: I discovered Debian in 1999 while studying at the ENS, which is one of the biggest nests of Debian developers: despite being a small place, it produces almost one Debian developer per year on average. After wondering for a while what it could be useful for, hacking on a slink snapshot made me think that it was for, well, everything except gaming. Later, in 2002, when I was working on optoelectronics computing codes, I started to package them for Debian in order to make them easier to install, for us as well as for other labs around the world. I started the NM process, and it was going smoothly but was also going to take time. However, at that moment, the frozen-bubble game came out and made quite some buzz. Since I knew a guy who knew the game's developer, he asked me to package it. The package found 3 sponsors in a very short time and was fast-tracked into the archive at a speed that was unseen before. After that, the NM process was completed very quickly.

At that time, I was a heavy WindowMaker user, but I didn't like the direction the project was taking (actually, I wonder if there was one). GNOME was starting to become attractive, but its packaging in Debian was very ineffective, with many inconsistent packages maintained by people who didn't ever talk to each other: some of them didn't speak English, and some of them didn't talk at all. Together with awesome people, among them Jordi Mallach, Gustavo Noronha Silva, JHM Dassen, Ross Burton and Sébastien Bacher, we started the GNOME team in 2003, introducing consistent packaging practices and initiating synchronized uploads. Releasing a completely integrated GNOME 2.8 in sarge was a considerable achievement; proving (together with the Perl team) that a team was the best way to maintain large package sets changed the way people work on Debian.

Raphael: You're one of the most active contributors of the team which is packaging GNOME for Debian. What would you suggest to a new contributor who would like to help the team?

Josselin: There are several ways to contact the team, but the recommended one has always been IRC. We hang out on #debian-gnome on the OFTC network, so just come around and ask for us. The real question is what you want to do in the team. Of course, most new volunteers want to help package the latest and greatest version of GNOME into unstable as soon as possible, but unless they already have a Debian background, this is not the easiest task. Since there are already people working on this, the big packages are usually waiting on dependencies.

I used to direct newcomers towards bug triage, but it is a tedious task and I'm now convinced that our huge bug backlog will never be dealt with. The most useful thing to do for newcomers now is probably to find a GNOME or GNOME-related package that needs improvement or is lagging behind, and simply try to work on it. You can also come and fix the bugs you find annoying. Find a patch on the GNOME bugzilla, or cook it yourself, propose it, and if it's worthy enough you'll soon get commit access.

At this point I feel it is worth mentioning that if no one answers in 10 minutes, it doesn't mean that no one will answer in 2 hours, so please stay on the channel after asking.

Raphael: There's been some controversy about GNOME 3 and the direction that the project is taking. What's your personal stance on GNOME 3? And what's the position of the pkg-gnome team?

Josselin: The controversy is not new to GNOME 3, but the large-scale changes made with it have made it more prominent. The criticism usually boils down to a few categories:

- General lack of configurability

- Strange design decisions

- Red Hat centric development

- Hardware requirements

- Change resistance

The lack of configuration options has been an ongoing criticism ever since GNOME 2.0 ripped out most of them. Of course, when the control center was redesigned again for 3.0, there was a surge of horrified exclamations from people who missed their favorite buttons. On this topic, I fully concur with GNOME developers. The configuration option that is useful for you is not necessarily useful for someone else. Of course, sometimes developers go a bit too far, but the general direction is right. At work, we found that only a minority of users actually configure anything on their desktops: they just want something that works to launch their applications. Apple and Google have sold millions of devices by making them as simple as possible and without any configuration.

Design decisions are, on the contrary, individual decisions, and each of them, while having reasons behind it, can be questioned. I remember seeing a lot of complaints when the OK and Cancel buttons were reversed in dialog boxes, something that nobody questions anymore. GNOME Shell is full of such changes; some are easy to get accustomed to, some others just raise eyebrows. The most obvious example is the user menu in GNOME 3.2, which contains an entry to configure your Google account, but no entry to shut down the computer. Both decisions were taken independently, each of them with (good or bad) reasons, but the result is simply ridiculous. The default configuration in Debian will contain an extension to make it a bit better, but on the whole we don't intend to diverge from the upstream design, on which a lot of good work has been done.

Point 3 is more complex. Red Hat being the company spending the most on GNOME, it is obvious that their employees work on making things work for their distribution. An example is the recurring discussions about relying on system services that are currently only implemented by systemd. Since there is a lot of (mostly unjustified) resistance against systemd in Debian, and since it won't work on kFreeBSD anyway, someone needs to develop an alternative implementation of these services for upstart and sysvinit. Everything is in place for someone else to do the job, but it has to be done, and this can be frustrating, especially since it can also be hard to integrate changes needed for other distributions.

Hardware requirements are mostly a consequence of the previous criticism: there's hardware that most distributions just don't want to bother supporting. We've seen it in squeeze with the introduction of a hard dependency on PulseAudio. The Debian GNOME team (together with the Gentoo maintainers) made this dependency optional, carrying heavy patches, in order to cover the cases where it does not work. Now that it has gained more maturity, making this effort obsolete, the new tendency is to require 3D acceleration. For various reasons, it is not available to everyone. On this matter, the position of the Debian GNOME team has always been to support as many different configurations as possible with reasonable effort. Thanks to efforts from the incredible Vincent Untz, upstream supports a so-called fallback mode, which is the GNOME panel from 2.x with a lot of its bugs fixed. We intend to support this mode for as long as reasonably possible in Debian, possibly even after upstream ends up dropping it. However, other applications are going to require 3D because GStreamer is moving to clutter too, affecting video playback performance on non-accelerated systems. For epiphany this is not a problem; only embedded video will be affected. But for totem, this is a major issue; because of that we will probably keep totem 3.0 in wheezy.

Finally, there is a natural human tendency to dislike change (I have it too), and it applies a lot to desktop users' habits. Needless to say, a change of such a scale as introducing GNOME Shell can trigger reactions. However, I don't think it is reasonable, because of this resistance, to keep gnome-panel 2.x in Debian. This would be a lot of work on obsolete technology, and would prevent the upcoming removal of a lot of deprecated libraries. This time is much better spent improving gnome-panel 3.x in Debian and keeping the fallback mode great. One of the changes made in Debian was to make it easier to find: it is available as GNOME Classic directly from the login manager, instead of having to be found in an obscure configuration panel. In all cases, I would recommend actually trying GNOME Shell for a few hours before ditching it. I had never become accustomed to a new environment so quickly before.

Having seen several of my GDM patches reverted without a warning, I know we are not finished with carrying patches in Debian packages.

Scientific workstations are a non-trivial example, since there is a measurable effect of using 3D in the window manager on heavy 3D applications.

On the other hand, on accelerated systems, this feature should end up improving performance a lot.

Raphael: What are your plans for Debian Wheezy?

Josselin: The first goal of the GNOME team is, of course, to once again provide a great desktop environment to work on. For wheezy it will probably be based on GNOME 3.4. There also needs to be some work on package management interfaces. Upstream bases everything on PackageKit, but it is not as featureful as Ubuntu's aptdaemon technology. If I have time, I would also like to improve HTTP proxy support, since currently it is based on a stack of terrible hacks.

Raphael: If you could spend all your time on Debian, what would you work on?

Josselin: Obviously I would like to make GNOME in Debian even better. That would imply working on underneath dependencies (what we now like to call plumbing) to make sure everything is working great. This would also imply working more as GNOME upstream to make it more suitable for our needs.

I would also work on large-scale improvements to the distribution, like conditional recommends, which I'd love to see implemented, or automatic build-dependency generation. I would also work on the installer to make it better for desktop machines.

The idea is to automatically install language packs, or glues between two packages when both packages are installed.

Raphael: What's the biggest problem of Debian?

Josselin: The obvious answer is the same as the one most people you interviewed before gave: not enough members in core teams. A lot of developers join Debian to work on a small number of pet packages, and don't necessarily want to be involved with existing teams. It is probably still not obvious enough that the primary way to start contributing to Debian is to join an existing team.

But if there is one thing that is preventing Debian from gaining more momentum now, it is a completely different one: the support timeframe is too short. 3 years is really not enough for corporate users. One year to migrate from one version to another is too short, and it is not possible to skip a release. It is definitely possible to change that with reasonable effort: the long-term support after 3 years doesn't have to cover the same perimeter as the short-term one. For example, we could upgrade the kernel to the version in the current stable release, and stop fixing all non-remote security holes. The important thing is to cover the most basic needs: companies are ready to take the risk of having less support if it allows skipping a version, but not the risk of having no support at all. And even more important is to say that you do something. Red Hat says they support a release for 10 years, but of course after 5 years the supported perimeter is extremely small.

Long-term support will not magically fix all problems in Debian, but it will bring more corporate users into the picture. And with corporate users come paid Debian developers, who can work on critical pieces of the system. Debian was built on the synergy between individuals and companies, and in recent years (perhaps as a reaction against what happened with Ubuntu) we've kind of forgotten the latter. A lot of individuals have joined the project, and they are actively working, for example, on shortening the release cycle, which goes against the interest of professionals. We should embrace such users and developers again, and that means adapting to the current needs of larger entities.

Raphael: You're the maintainer of python-support, a packaging helper that was competing with python-central. Both helpers are now deprecated in favor of dh_python2. Does this mean that the situation of Python in Debian is now sane? Or are there remaining problems?

Josselin: dh_python2 (and the Python3 version, dh_python3) has a sane enough design. It fixes a lot of issues in python-central and also python-support, at the expense of somewhat reduced functionality for developers. However, just like the previous tools, it merely works around design mistakes in the Python interpreter. For example, it is not possible to split binary modules, pure-Python modules and byte-compiled modules into different directory trees, like Perl does, although PEP 3147 introduces a way to do so. There is still no sane and standardized way to deal with module versions. There is no difference made between the module (which is a part of language semantics) and the file containing it (a piece of information which depends on the implementation). Developers heavily rely on introspection features and make assumptions based on the implementation, which makes it impossible to work around problems with module files.
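To make the PEP 3147 remark concrete, here is a minimal sketch that asks the interpreter where it would store the byte-compiled file for a given source file. The source path is made up for the example (the file does not need to exist), and the exact output depends on the interpreter running it.

```python
import importlib.util
import sys

# Hypothetical source path, used only for illustration; it need not exist.
source = "/usr/lib/python3/dist-packages/example/module.py"

# PEP 3147: by default, byte-compiled files go into a __pycache__ directory
# next to the source, tagged with the interpreter that produced them.
cached = importlib.util.cache_from_source(source)
print(cached)

# The tag names the implementation and version of the running interpreter.
print(sys.implementation.cache_tag)
```

The byte-compiled file is thus kept apart from the source and carries an interpreter tag, which is the mechanism the answer above alludes to.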

Such problems are not restricted to Python. Those who fought against Ruby gems could tell even worse stories. While introducing GObject introspection packages in Debian (they can be used in JavaScript and Python to provide modules based on GObject libraries), I was pleased to see a clear distinction between file and module, but I was again struck by the fact you are not forced to declare API versions in your Python/JS code. In all cases, there is no reliable way to detect runtime dependencies in a given Python or JavaScript file, which leaves the maintainer to declare them by hand, and of course, often be wrong about them. Add to that the fact that most errors cannot be detected before runtime. For all these reasons, and while still being fond of Python for scripts and prototyping, I've become really skeptical of using purely interpreted languages to write real applications. Some GNOME developers are moving away from Python and JavaScript, mostly towards Vala; I can only approve of that move and hope the same happens to other projects.
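The point about runtime dependencies can be illustrated with a short sketch. It scans a Python source string for import statements using the standard ast module; the sample code and the function name static_imports are invented for this example. Anything loaded dynamically is invisible to such a scan, which is one reason a maintainer ends up declaring dependencies by hand.

```python
import ast


def static_imports(source):
    """Return the top-level module names found in plain import statements."""
    names = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            names.add(node.module.split(".")[0])
    return names


# Invented sample: the last line loads a module whose name is only a string,
# so a static scan cannot see it.
SAMPLE = '''
import os
from gi.repository import Gtk
import importlib
plugin = importlib.import_module("json")
'''

found = static_imports(SAMPLE)
print(sorted(found))
```

Here the scan reports gi, importlib and os, but misses json, which is only resolved when the code runs.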

Raphael: Is there someone in Debian that you admire for their contributions?

Josselin: Of course there is the never-sleeping, never-stopping Michael Biebl, who can upload a whole GNOME release in a single weekend. But there are a lot of awesome people who make Debian something that simply works. I could talk about Cyril Brulebois from the X strike force, Julien Cristau from the release team, Sjoerd Simons for his sound advice and work on plumbing, Luca Falavigna who is so fast at processing NEW, to quote only a few of those I work with frequently. And of course, Jordi and Sam for their humor.

Thank you to Josselin for the time spent answering my questions. I hope you enjoyed reading his answers as I did. Note that you can find older interviews on http://wiki.debian.org/PeopleBehindDebian.


Apart from being somewhat slow, one of the downsides of the original Raspberry Pi SoC was that it had an old ARM11 core which implements the ARMv6 architecture. This was particularly unfortunate as most common distributions (Debian, Ubuntu, Fedora, etc.) standardized on the ARMv7-A architecture as a minimum for their ARM hardfloat ports, which is one of the reasons for Raspbian and the various other RPI-specific distributions.

Happily, with the new Raspberry Pi 2 using Cortex-A7 cores (which implement the ARMv7-A architecture) this issue is out of the way, which means that a standard Debian hardfloat userland will run just fine. So the obvious first thing to do when an RPI 2 appeared on my desk was to put together a quick Debian Jessie image for it.

The result of which can be found at: https://images.collabora.co.uk/rpi2/

Log in as root with password debian (obviously, do change the password and create a normal user after booting). The image is 3G, so it should fit on any SD card marketed as 4G or bigger. Using bmap-tools for flashing is recommended; otherwise you'll be waiting for 2.5G of zeros to be written to the card, which tends to be rather boring. Note that the image is really basic and will just get you to a login prompt on either serial or HDMI. Batteries are very much not included, but can be apt-getted :).

Technically, this image is simply a Debian Jessie debootstrap with a few extra packages for hardware support. Unlike Raspbian, the first partition (which contains the firmware & kernel files to boot the system) is mounted on /boot/firmware rather than on /boot. This is because the VideoCore expects the first partition to be a FAT filesystem, but mounting FAT on /boot really doesn't work right on Debian systems, as it contains files managed by dpkg (e.g. the kernel package), which requires a POSIX-compatible filesystem. This is essentially the same reason why Debian uses /boot/efi for the ESP partition on Intel systems rather than mounting it on /boot directly.
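If you want to check this layout on a running system, a small sketch along these lines may help. It looks up the filesystem type of a mount point in text laid out like /proc/mounts. The sample table is invented: the device names and the ext4 root filesystem are assumptions, not something the image is documented to use.

```python
def filesystem_type(mount_table, mount_point):
    """Return the filesystem type mounted at mount_point, or None.

    mount_table is text in the /proc/mounts layout:
    device, mount point, filesystem type, options, ...
    """
    fstype = None
    for line in mount_table.splitlines():
        fields = line.split()
        if len(fields) >= 3 and fields[1] == mount_point:
            fstype = fields[2]  # a later mount on the same point wins
    return fstype


# Invented sample; device names and the ext4 root are assumptions.
SAMPLE_MOUNTS = """\
/dev/mmcblk0p2 / ext4 rw,relatime 0 0
/dev/mmcblk0p1 /boot/firmware vfat rw,relatime 0 0
"""

print(filesystem_type(SAMPLE_MOUNTS, "/boot/firmware"))
print(filesystem_type(SAMPLE_MOUNTS, "/boot"))
```

On a real system the table could be read from /proc/mounts; with the layout described above, /boot itself is not a separate mount, so the files dpkg manages there stay on the root filesystem.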

For reference, the RPI2-specific packages in this image are from https://repositories.collabora.co.uk/debian/ in the jessie distribution and rpi2 component (this repository is enabled by default on the image). The relevant packages there are:

There's a lot of discussion / moaning / arguing at this time, so I thought I'd post something about how LAVA got into Debian Jessie, the work involved and the lessons I've learnt. Hopefully, it will help someone avoid the disappointment of having their package miss the migration into a future stable release. This was going to be a talk at the Minidebconf-uk in Cambridge but I decided to put this out as a permanent blog entry in the hope that it will be a useful reference for the future, not just Jessie.

Context

LAVA relies on a number of dependencies which were, at the time all this started, NEW to Debian, as well as many others already in Debian. I'd been running LAVA using packages on my own system for a few months before the packages were ready for use on the main servers (I never actually installed LAVA using the old virtualenv method on my own systems, except in a VM). I did do quite a lot of this on my own but I also had a team supporting the effort and valuing the benefits of moving to a packaged system.

At the time, LAVA was based on Ubuntu (12.04 LTS Precise Pangolin) and a new Ubuntu LTS was close (Trusty Tahr 14.04) but I started work on this in 2013. By the time my packages were ready for general usage, it was winter 2013 and much too close to get anything into Ubuntu in time for Trusty. So I started a local repo using space provided by Linaro. At the same time, I started uploading the dependencies to Debian. json-schema-validator, django-testscenarios and others arrived in April and May 2014. (Trusty was released in April). LAVA arrived in NEW in May, being accepted into unstable at the end of June. LAVA arrived in testing for the first time in July 2014.

Upstream development continued apace and a regular monthly upload, with some hotfixes in between, continued until close to the freeze.

At this point, note that although upstream is a medium-sized team and the Debian packaging also has a team, all the uploads were made by me. I planned ahead. I knew that I would be going to Macau for Linaro Connect in February, a critical stage in the finalisation of the packages and the migration of existing instances from the old methods. I knew that I would be on vacation from August through to the end of September 2014, including at least two weeks with absolutely no connectivity of any kind.

Right at this time, Django1.7 arrived in experimental with the intent to go into unstable and hence into Jessie. This was a headache for me; I initially sought to delay the migration until after Jessie. However, we discussed it upstream, allocated time within the busy schedule and also sought help from within Debian with the RFH tag. Raphaël Hertzog contributed patches for django1.7 support and we worked on those patches upstream, once I was back from vacation. (The final week of my vacation was a work conference, so we had everyone together at one hacking table.)

Still, there was more to do: the django1.7 patches allowed the unit tests to complete but broke other parts of the lava-server package and needed subsequent tweaks and fixes.

Even with all this, the auto-removal from testing for packages affected by RC bugs in their dependencies became very important to monitor (it still is). It would be useful if some packages had less complex dependency chains (I'm looking at you, uwsgi) as the auto-removal also covers build-depends. This led to some more headaches with libmatheval. I'm not good with functional programming languages; I did have some exposure to Scheme when working on Gnucash upstream but it wasn't pleasant. The thought of fixing a Scheme problem in the test suite of libmatheval was daunting. Again though, asking for help, I found people in the upstream team who wanted to refresh their use of Scheme and were able to help out. The fix migrated into testing in October.
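To show why a bug in something like libmatheval matters to a top-level package, here is a rough sketch of the reasoning, not of the actual auto-removal tooling. The dependency table is a hypothetical, heavily simplified stand-in (Depends and Build-Depends merged, most packages left out), and the function name affected_by is invented for the example.

```python
from collections import deque

# Hypothetical, simplified data: package -> packages it (build-)depends on.
DEPENDS = {
    "lava-server": ["uwsgi", "python-django"],
    "uwsgi": ["libmatheval"],
    "libmatheval": [],
    "python-django": [],
}


def affected_by(buggy, depends):
    """Return every package that depends, directly or not, on buggy."""
    # Invert the table so we can walk from a dependency to its users.
    reverse = {}
    for pkg, deps in depends.items():
        for dep in deps:
            reverse.setdefault(dep, set()).add(pkg)

    affected, queue = set(), deque([buggy])
    while queue:
        current = queue.popleft()
        for user in reverse.get(current, ()):
            if user not in affected:
                affected.add(user)
                queue.append(user)
    return affected


print(sorted(affected_by("libmatheval", DEPENDS)))
```

With this toy data, an RC bug in libmatheval puts both uwsgi and lava-server at risk, even though lava-server never uses matheval directly.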

Just for added complications, lava-server gained a few RC bugs of its own during that time too, fixed upstream but awkward nonetheless.

Achievement unlocked

So that's how a complex package like lava-server gets into stable. With a lot of help. The main problem with top-level packages like this is the sheer weight of the dependency chain. Something seemingly unrelated (like libmatheval) can seriously derail the migrations. The package doesn't use the matheval support provided by uwsgi. The bug in matheval wasn't in the parts of matheval used by uwsgi. It wasn't in a language I am at all comfortable in fixing but it's my name on the changelog of the NMU. That happened because I asked for help. OK, when django1.7 was scheduled to arrive in Debian unstable and I knew that lava was not ready, I reacted out of fear and anxiety. However, I sought help, help was provided and that help was enough to get upstream to a point where the side-effects of the required changes could be fixed.

Maintaining a top-level package in Debian is becoming more like maintaining a core package in Debian and that is a good thing. When your package has a lot of dependencies, those dependencies become part of the maintenance workload of your package. It doesn't matter if those are install-time dependencies, build dependencies or reverse dependencies. It doesn't actually matter if the issues in those packages are in languages you would personally wish to be expunged from the archive. It becomes your problem, but not yours alone.

Debian has a lot of flames right now and Enrico encouraged us to look at what else is actually happening in Debian besides those arguments. Well, on top of all this with lava, I also did what I could to help the arm64 port along and I'm very happy that this has been accepted into Jessie as an official release architecture. That's a much bigger story than LAVA, yet LAVA was and remains instrumental in how arm64 gained the support in the kernel and various upstreams which allowed patches to be accepted and fixes to be incorporated into Debian packages.

So a roll call of helpers who may otherwise not have been recognised via changelogs, in no particular order:

There s a lot of discussion / moaning /arguing at this time, so I thought I d post something about how LAVA got into Debian Jessie, the work involved and the lessons I ve learnt. Hopefully, it will help someone avoid the disappointment of having their package missing the migration into a future stable release. This was going to be a talk at the Minidebconf-uk in Cambridge but I decided to put this out as a permanent blog entry in the hope that it will be a useful reference for the future, not just Jessie.

Context

LAVA relies on a number of dependencies which were at the time all this started NEW to Debian as well as many others already in Debian. I d been running LAVA using packages on my own system for a few months before the packages were ready for use on the main servers (I never actually installed LAVA using the old virtualenv method on my own systems, except in a VM). I did do quite a lot of this on my own but I also had a team supporting the effort and valuing the benefits of moving to a packaged system.

At the time, LAVA was based on Ubuntu (12.04 LTS Precise Pangolin) and a new Ubuntu LTS was close (Trusty Tahr 14.04) but I started work on this in 2013. By the time my packages were ready for general usage, it was winter 2013 and much too close to get anything into Ubuntu in time for Trusty. So I started a local repo using space provided by Linaro. At the same time, I started uploading the dependencies to Debian. json-schema-validator, django-testscenarios and others arrived in April and May 2014. (Trusty was released in April). LAVA arrived in NEW in May, being accepted into unstable at the end of June. LAVA arrived in testing for the first time in July 2014.

Upstream development continued apace and a regular monthly upload, with some hotfixes in between, continued until close to the freeze.

At this point, note that although upstream is a medium sized team, the Debian packaging also has a team but all the uploads were made by me. I planned ahead. I knew that I would be going to Macau for Linaro Connect in February a critical stage in the finalisation of the packages and the migration of existing instances from the old methods. I knew that I would be on vacation from August through to the end of September 2014 including at least two weeks with absolutely no connectivity of any kind.

Right at this time, Django1.7 arrived in experimental with the intent to go into unstable and hence into Jessie. This was a headache for me, I initially sought to delay the migration until after Jessie. However, we discussed it upstream, allocated time within the busy schedule and also sought help from within Debian with the RFH tag. Rapha l Hertzog contributed patches for django1.7 support and we worked on those patches upstream, once I was back from vacation. (The final week of my vacation was a work conference, so we had everyone together at one hacking table.)

Still there was more to do, the django1.7 patches allowed the unit tests to complete but broke other parts of the lava-server package and needed subsequent tweaks and fixes.

Even with all this, the auto-removal from testing for packages affected by RC bugs in their dependencies became very important to monitor (it still is). It would be useful if some packages had less complex dependency chains (I m looking at you, uwsgi) as the auto-removal also covers build-depends. This led to some more headaches with libmatheval. I m not good with functional programming languages, I did have some exposure to Scheme when working on Gnucash upstream but it wasn t pleasant. The thought of fixing a scheme problem in the test suite of libmatheval was daunting. Again though, asking for help, I found people in the upstream team who wanted to refresh their use of scheme and were able to help out. The fix migrated into testing in October.

Just for added complications, lava-server gained a few RC bugs of it s own during that time too fixed upstream but awkward nonetheless.

Achievement unlocked

So that s how a complex package like lava-server gets into stable. With a lot of help. The main problem with top-level packages like this is the sheer weight of the dependency chain. Something seemingly unrelated (like libmatheval) can seriously derail the migrations. The package doesn t use the matheval support provided by uwsgi. The bug in matheval wasn t in the parts of matheval used by uwsgi. It wasn t in a language I am at all comfortable in fixing but it s my name on the changelog of the NMU. That happened because I asked for help. OK, when django1.7 was scheduled to arrive in Debian unstable and I knew that lava was not ready, I reacted out of fear and anxiety. However, I sought help, help was provided and that help was enough to get upstream to a point where the side-effects of the required changes could be fixed.

Maintaining a top-level package in Debian is becoming more like maintaining a core package in Debian and that is a good thing. When your package has a lot of dependencies, those dependencies become part of the maintenance workload of your package. It doesn't matter if those are install-time dependencies, build dependencies or reverse dependencies. It doesn't actually matter if the issues in those packages are in languages you would personally wish to be expunged from the archive. It becomes your problem, but not yours alone.

Debian has a lot of flames right now and Enrico encouraged us to look at what else is actually happening in Debian besides those arguments. Well, on top of all this with lava, I also did what I could to help the arm64 port along and I'm very happy that this has been accepted into Jessie as an official release architecture. That's a much bigger story than LAVA, yet LAVA was and remains instrumental in how arm64 gained the support in the kernel and various upstreams which allowed patches to be accepted and fixes to be incorporated into Debian packages.

So a roll call of helpers who may otherwise not have been recognised via changelogs, in no particular order:

What Zappa was working on will likely always remain a mystery.

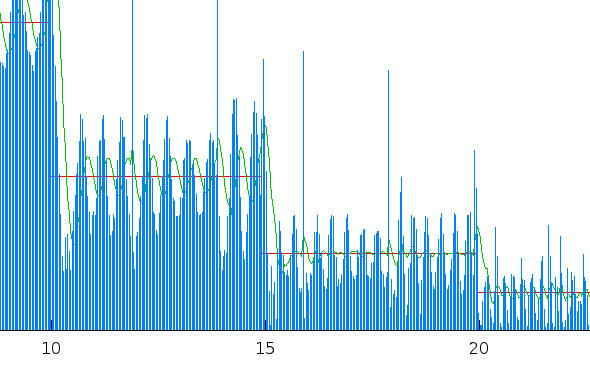

The blue impulses are the per-frame size (scaled), the green line the bitrate average over 10 frames and the red line the target bitrate. Clearly this isn't an ideal picture; we will need to do some more tweaks to our default settings to make x264 behave the way we want. The same will be true for all other codecs and codec elements: at the moment the GStreamer VP8 element doesn't handle run-time bitrate changes at all and the Theora element acts in its very own interesting way (

The screenshot isn't very exciting, which is exactly how it should be. In the world I would like to live in, people don't have to care about video codecs, whether it is for video calls, playing online media or whatever else they want to do with video. Looking at the list of

A Belorussian translation of this article is available

I can recommend some feline assistance whenever installing things on an ExoPC.

Oh well, guess it's time for a nice walk to get some fresh new inner tubes.

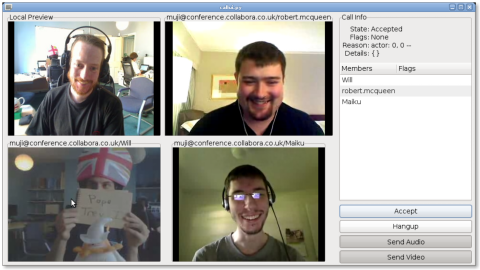

The screenshot features myself in Collabora's UK office (top-left), Robert McQueen from a hotel room in Taipei (top-right), Will Thompson and Magical Trevor also from the Collabora UK office (bottom-left) and Mike Ruprecht from somewhere in Missouri. So we were quite spread out over our little planet. Both audio and video quality were quite good, even though the network in Rob's hotel was a bit flaky from time to time.

In case you want to try it out have a look at the

As you all know by now, exciting moves from Google on the

We’re going to polish this up into an activity you can install, and also Sjoerd Simons has been working with us on