Riku Voipio: Adguard DNS, or how to reduce ads without apps/extensions

Looking at the options for blocking ads, people usually look at browser extensions first. Google's plan is to disable adblock extensions in 2024. The alternative is usually an app (on phones) or a "VPN" that does the filtering for you. All these methods are quite heavyweight and require installing software on your phone or PC. What is less known is that you can use DNS-over-TLS or DNS-over-HTTPS for ad blocking.

What is DNS-over-TLS and DNS-over-HTTPS

Since Android 9, Google has provided a setting called Private DNS. Traditional DNS is unencrypted UDP, so anyone along the way can monitor your requests and/or return false records. With Private DNS, DNS-over-TLS or DNS-over-HTTPS is used to guarantee that the DNS request is sent to the server you configured. Google, of course, hopes that server will be its own public DNS. If you use it, your ISP and hotspot providers can no longer monitor, monetize and enshittify your DNS requests; only Google can.
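As a rough illustration of what Private DNS does on the wire, here is a minimal Python sketch of a DNS-over-TLS query (RFC 7858): an ordinary DNS query message, prefixed with its 2-byte length, sent over a TLS connection to port 853. The function names are hypothetical, the server name dns.adguard-dns.com is an assumption taken from AdGuard's public setup instructions (check their current docs), and the sketch returns the raw response without parsing it.

```python
import socket
import ssl
import struct


def build_query(name: str, qtype: int = 1, query_id: int = 0) -> bytes:
    """Build a plain DNS query message (RFC 1035) for one name; qtype 1 = A record."""
    # Header: ID, flags (only RD, "recursion desired", set), 1 question, 0 other records
    header = struct.pack("!HHHHHH", query_id, 0x0100, 1, 0, 0, 0)
    # QNAME: each label prefixed with its length byte, terminated by a zero byte
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in name.rstrip(".").split(".")
    ) + b"\x00"
    # QTYPE, then QCLASS 1 (IN, Internet)
    return header + qname + struct.pack("!HH", qtype, 1)


def frame(message: bytes) -> bytes:
    """DNS over TCP/TLS prefixes each message with its length as a 2-byte big-endian integer."""
    return struct.pack("!H", len(message)) + message


def recv_exact(sock: socket.socket, n: int) -> bytes:
    """Read exactly n bytes, since a single recv() may return less."""
    buf = b""
    while len(buf) < n:
        chunk = sock.recv(n - len(buf))
        if not chunk:
            raise ConnectionError("connection closed before the full message arrived")
        buf += chunk
    return buf


def query_dot(name: str, server: str = "dns.adguard-dns.com",
              port: int = 853, timeout: float = 5.0) -> bytes:
    """Send one query over TLS and return the raw, unparsed DNS response message."""
    # The default context verifies the certificate against server_hostname, which is
    # what assures the client it is talking to the resolver it was configured with.
    context = ssl.create_default_context()
    with socket.create_connection((server, port), timeout=timeout) as raw:
        with context.wrap_socket(raw, server_hostname=server) as tls:
            tls.sendall(frame(build_query(name)))
            (length,) = struct.unpack("!H", recv_exact(tls, 2))
            return recv_exact(tls, length)
```

Calling query_dot("example.com") on a networked machine sends the query; an on-path observer sees only a TLS connection to the resolver, not the names being looked up.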

Subverting private DNS for ad blocking

This is where AdGuard DNS comes in handy. By setting the AdGuard DNS server as your "private DNS" server, following AdGuard's instructions, you can start blocking right away. Note that on a PC you can also configure the AdGuard DNS server in the browser settings (Firefox -> Enable secure DNS and Chrome -> Use Secure DNS) instead of configuring a system-wide DNS server. Blocking via DNS, of course, only works against ads served from third-party servers: an ad served from the same host name as the page itself cannot be blocked without blocking the page too.
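The reason DNS blocking only catches third-party ads is that the resolver sees nothing but a host name. Here is a minimal Python sketch of the idea, assuming a simple domain blocklist that also covers subdomains and a blocked answer of 0.0.0.0 (one common approach; the post doesn't say exactly what AdGuard answers). The blocklist entries, function names and the fake upstream resolver are hypothetical.

```python
from typing import Callable

# Hypothetical blocklist for illustration; real filtering services use far larger lists
BLOCKLIST = {"doubleclick.net", "ads.example.com"}


def is_blocked(hostname: str, blocklist: set) -> bool:
    """True if the hostname or any of its parent domains is on the blocklist."""
    labels = hostname.lower().rstrip(".").split(".")
    # "stats.g.doubleclick.net" is checked as itself, then "g.doubleclick.net",
    # "doubleclick.net" and "net"
    return any(".".join(labels[i:]) in blocklist for i in range(len(labels)))


def resolve(hostname: str, blocklist: set, upstream: Callable[[str], str]) -> str:
    """Answer blocked names with an unroutable address, forward everything else."""
    if is_blocked(hostname, blocklist):
        return "0.0.0.0"  # the ad request goes nowhere
    return upstream(hostname)
```

Note that a URL path never reaches this function: news.example.org/ads and news.example.org/article look identical to a DNS resolver.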

Other uses for AdGuard DNS

If you register for AdGuard DNS, you get your "own", customizable DNS server address to point to. You can, for example, create your own /etc/hosts style records that are then available to all your devices connected to your AdGuard DNS server, whether you are at home or not. Of course, if you choose to use the personal DNS server, your DNS query privacy is in the hands of AdGuard.
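To make the "/etc/hosts style" format concrete, here is a small Python sketch of how such records read: each line is an address followed by one or more names, and # starts a comment. This only models the file format; how AdGuard stores or applies your custom records is not described here, and the addresses and names below are hypothetical.

```python
# Hypothetical home-network records in /etc/hosts syntax
HOSTS = """
# home network
192.168.1.10  nas.home nas
192.168.1.20  printer.home   # the office printer
192.168.1.99  nas.home
"""


def parse_hosts(text: str) -> dict:
    """Map each hostname to its address; as in /etc/hosts, the first entry for a name wins."""
    records = {}
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if not line:
            continue
        address, *names = line.split()
        for name in names:
            records.setdefault(name.lower(), address)
    return records
```

Here parse_hosts(HOSTS) maps nas.home and nas to 192.168.1.10 and printer.home to 192.168.1.20; the later duplicate nas.home line is ignored.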

Going further

What else is ruining the web besides ads? Commercial social media. An article ("Ei n in! Algoritmi hky") in the latest issue of the Finnish magazine SKROLLI (advertisement: if you read Finnish, subscribe to Skrolli!) struck a chord with me. The algorithms of social media sites are designed not to serve you, but to addict you. For example, if you stop to look at a hateful meme image, the algorithm records "The user spent time watching this, show more of the same!". Blocking or muting doesn't help: yes, that specific hate engager will be blocked, but dozens of similar hate pages will still be shown to you. Worse, social media sites are being overrun by AI-generated crap.

Unfortunately, the addictive nature of the algorithms works. You reload in vain, hoping that this time the algorithmic god will show you something your friends have shared. How do you cure an addiction? By blocking yourself out.
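With a personal AdGuard DNS server, blocking yourself out is a matter of custom rules. AdGuard's rule syntax uses ||domain^ to block a domain with its subdomains, @@ to mark an exception, and ! for comments. The Python sketch below models only those three forms (real rules support much more), and the rule list and function names are hypothetical examples.

```python
from typing import Optional, Tuple

# Hypothetical self-blocking rules in AdGuard-style syntax
RULES = [
    "! keep myself out of commercial social media",
    "||facebook.com^",
    "||instagram.com^",
    "||tiktok.com^",
    "@@||developers.facebook.com^",  # exception: still reachable
]


def parse_rule(rule: str) -> Optional[Tuple[str, bool]]:
    """Return (domain, is_exception) for '||domain^' or '@@||domain^', else None."""
    rule = rule.strip()
    exception = rule.startswith("@@")
    if exception:
        rule = rule[2:]
    if rule.startswith("||") and rule.endswith("^"):
        return rule[2:-1].lower(), exception
    return None  # comments and other syntax are outside this simplified sketch


def domain_matches(hostname: str, domain: str) -> bool:
    """True for the domain itself and any subdomain, but not lookalikes."""
    hostname = hostname.lower().rstrip(".")
    return hostname == domain or hostname.endswith("." + domain)


def blocked_by_rules(hostname: str, rules: list) -> bool:
    blocked = False
    for rule in rules:
        parsed = parse_rule(rule)
        if parsed is None:
            continue
        domain, exception = parsed
        if domain_matches(hostname, domain):
            if exception:
                return False  # exception rules take priority over blocking rules
            blocked = True
    return blocked
```

Checking the exception before giving up on a match mirrors the usual adblock convention that @@ rules override plain blocking rules.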

Epilogue

I haven't blocked myself out of the Fediverse (yet). It isn't engineered to be addictive, which is probably also why it isn't as popular as the commercial alternatives...

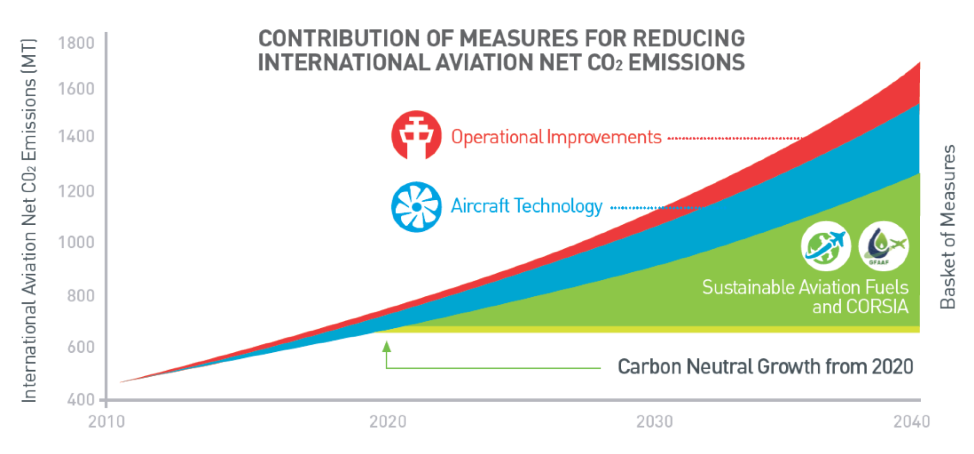

If you have to choose one year when you won't fly, this year, 2020, is the one to choose. Why? Because CORSIA. What the heck is CORSIA? It is the Carbon Offsetting and Reduction Scheme for International Aviation. And what does it have to do with *this* year? The first phase of CORSIA starts next year, and aviation emissions are frozen at 2020 levels: growth above that baseline has to be offset. Due to certain recent events, lots of flights have already been cancelled, which means the reference-year aviation emissions are already a lot lower than the aviation industry was expecting. By avoiding flying this year, you help freeze aviation emissions at an even lower level. This will increase the cost of CO2 offsetting for airlines, and the joke is going to be on them. So consider skipping business travel and taking your holiday trip this year by something other than a plane. Wouldn't recommend a cruise ship, tho...
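The argument boils down to simple arithmetic. Here is a deliberately simplified model (not CORSIA's actual formula, which shares out the sector's growth over the baseline among individual operators): the offsets O owed in a later year t are whatever emissions E exceed the frozen baseline B. The numbers are hypothetical, in arbitrary units.

$$O_t = \max(0,\; E_t - B), \qquad B = E_{2020}$$

$$B = 100,\; E_{2021} = 90 \;\Rightarrow\; O_{2021} = \max(0,\; 90 - 100) = 0$$

$$B = 70,\; E_{2021} = 90 \;\Rightarrow\; O_{2021} = \max(0,\; 90 - 70) = 20$$

In this model, every unit by which 2020 emissions drop is a unit airlines must offset later, for as long as their emissions stay above the baseline.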

Debian Jessie was released on April 25th, 2015. This opened the Stretch development cycle. Reactions to the idea of making Debian

The USB relay is driven by a short script, hard-reset-1.
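The post doesn't show what hard-reset-1 contains or which relay board it drives, so what follows is a hypothetical sketch only. Many cheap USB relay modules (the "LCUS-1" style) appear as a USB serial port and take 4-byte frames (0xA0, channel, state, checksum), typically at 9600 baud. Assuming such a module on /dev/ttyUSB0, with the board's power wired through the relay's normally-closed contact, a power cycle could look like this; the device path, wiring, timing and function names are all assumptions.

```python
import os
import termios
import time
import tty


def relay_frame(channel: int, on: bool) -> bytes:
    """LCUS-style frame (assumed protocol): start byte, channel, state, checksum."""
    state = 0x01 if on else 0x00
    checksum = (0xA0 + channel + state) & 0xFF  # low byte of the sum of the first three bytes
    return bytes([0xA0, channel, state, checksum])


def hard_reset(device: str = "/dev/ttyUSB0", channel: int = 1,
               off_seconds: float = 5.0) -> None:
    """Hypothetical power cycle: energize the relay to cut power, wait, release it."""
    fd = os.open(device, os.O_RDWR | os.O_NOCTTY)
    try:
        tty.setraw(fd)  # no line editing or byte translation on the serial port
        attrs = termios.tcgetattr(fd)
        attrs[4] = attrs[5] = termios.B9600  # input and output speed
        termios.tcsetattr(fd, termios.TCSANOW, attrs)
        os.write(fd, relay_frame(channel, on=True))   # NC contact opens: board loses power
        time.sleep(off_seconds)
        os.write(fd, relay_frame(channel, on=False))  # NC contact closes: board powers up
    finally:
        os.close(fd)
```

Wiring through the normally-closed contact keeps the board powered whenever the relay (or the host driving it) is idle, so only an explicit reset interrupts power.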

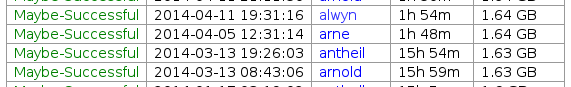

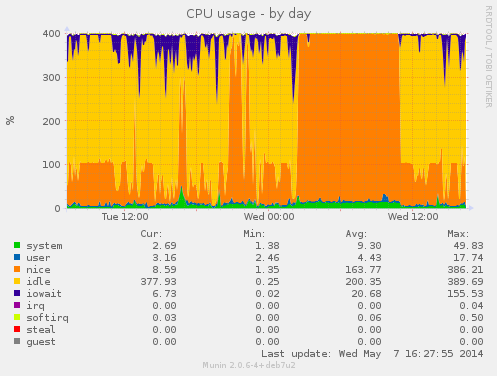

In this buildd CPU usage graph, we see that most of the time only one CPU is in use. So for fast package build times, make sure your packages support parallel building. For developers, abel.debian.org is a porter machine with an Armada XP. It has schroots for both armel and armhf. Set "DEB_BUILD_OPTIONS=parallel=4" and off you go.

Finally, I'd like to thank Thomas Petazzoni, Maen Suleiman, Hector Oron, Steve McIntyre, Adam Conrad and Jon Ward for making the upgrade happen.

Meanwhile, we have unrelated trouble: a bunch of disks broke within a few days of each other. I take it the warranty just ran out...

[1] Only from Linux's point of view: the mv78200 actually has 2 cores, just not SMP or cache-coherent. You could run an RTOS on one core while running Linux on the other.
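To see what "DEB_BUILD_OPTIONS=parallel=4" means for a build: per Debian Policy, DEB_BUILD_OPTIONS holds whitespace-separated flags, and parallel=n asks for up to n parallel jobs. The Python sketch below shows how a build script could read it; the function name is hypothetical, in practice debian/rules does this in make, and with recent debhelper compat levels dh handles it for you.

```python
import os
from typing import Optional


def parallel_jobs(options: Optional[str] = None, default: int = 1) -> int:
    """Return n from a 'parallel=n' flag in DEB_BUILD_OPTIONS, or default if absent/invalid."""
    if options is None:
        options = os.environ.get("DEB_BUILD_OPTIONS", "")
    for flag in options.split():  # flags are whitespace-separated
        name, _, value = flag.partition("=")
        if name == "parallel" and value.isdigit() and int(value) > 0:
            return int(value)
    return default
```

A build would then run, for example, make -j4 instead of a plain make. From a shell, DEB_BUILD_OPTIONS=parallel=4 dpkg-buildpackage -us -uc passes the option to a package build.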
